
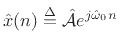

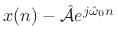

There are many ways to define ``optimal'' in signal modeling. Perhaps

the most elementary case is least squares estimation. Every

estimator tries to measure one or more parameters of some underlying

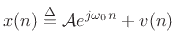

signal model. In the case of sinusoidal parameter estimation,

the simplest model consists of a single complex sinusoidal component

in additive white noise:

|

(6.32) |

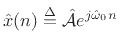

where

is the complex amplitude of the sinusoid,

and

is the complex amplitude of the sinusoid,

and  is white noise (defined in §C.3). Given

measurements of

is white noise (defined in §C.3). Given

measurements of  ,

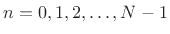

,

, we wish to estimate the

parameters

, we wish to estimate the

parameters

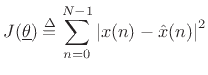

of this sinusoid. In the method of

least squares, we minimize the sum of squared errors between the data

and our model. That is, we minimize

of this sinusoid. In the method of

least squares, we minimize the sum of squared errors between the data

and our model. That is, we minimize

|

(6.33) |

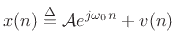

with respect to the parameter vector

$$\underline{\theta} \triangleq \left[\begin{array}{c} A \\ \phi \\ \omega_0 \end{array}\right], \qquad (6.34)$$

where $\hat{x}_{\underline{\theta}}(n)$ denotes our signal model:

$$\hat{x}_{\underline{\theta}}(n) \triangleq \mathcal{A} e^{j\omega_0 n} = A e^{j(\omega_0 n + \phi)} \qquad (6.35)$$
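As a minimal sketch of the cost function just defined, the following Python/NumPy code evaluates the model of Eq. (6.35) and the sum of squared errors of Eq. (6.33) on synthetic data. The function names (`sinusoid_model`, `ls_cost`), the signal length, the noise level, and the parameter values are all illustrative assumptions, not part of the text.

```python
import numpy as np

def sinusoid_model(theta, n):
    """Signal model of Eq. (6.35): A * exp(j*phi) * exp(j*omega0*n)."""
    A, phi, omega0 = theta
    return A * np.exp(1j * phi) * np.exp(1j * omega0 * n)

def ls_cost(theta, x):
    """Sum of squared errors J(theta) of Eq. (6.33)."""
    n = np.arange(len(x))
    err = x - sinusoid_model(theta, n)
    return float(np.sum(np.abs(err) ** 2))

# Synthetic data following Eq. (6.32); every value here is an assumption
rng = np.random.default_rng(0)
N = 64
n = np.arange(N)
theta_true = (1.0, 0.5, 2 * np.pi * 0.1)   # (A, phi, omega0)
sigma = 0.1                                 # noise standard deviation
v = sigma * (rng.standard_normal(N) + 1j * rng.standard_normal(N)) / np.sqrt(2)
x = sinusoid_model(theta_true, n) + v

# Cost at the true parameters versus at a mistuned frequency
J_true = ls_cost(theta_true, x)
J_off = ls_cost((1.0, 0.5, 2 * np.pi * 0.15), x)
print(J_true, J_off)
```

At the true parameters the cost reduces to the energy of the noise samples alone, so it should be much smaller than the cost at the mistuned frequency.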

Note that the error signal $x(n) - \mathcal{A} e^{j\omega_0 n}$ is linear in $\mathcal{A}$ but nonlinear in the parameter $\omega_0$. More significantly, $J(\underline{\theta})$ is non-convex with respect to variations in $\omega_0$. Non-convexity can make an optimization based on gradient descent very difficult, while convex optimization problems can generally be solved quite efficiently [22,86].
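Both observations can be illustrated numerically. Because the error is linear in the complex amplitude, for any fixed trial frequency $\omega_0$ the least-squares amplitude has the closed form $\frac{1}{N}\sum_{n=0}^{N-1} x(n)\, e^{-j\omega_0 n}$ (the ordinary linear least-squares solution for a single regressor). The sketch below, in Python/NumPy, substitutes that amplitude back into the cost and scans the result over a frequency grid, counting local minima. The helper names, grid density, signal length, and noise level are assumptions chosen for illustration, and the grid search is a stand-in rather than a method prescribed by the text.

```python
import numpy as np

def amplitude_given_freq(x, omega0):
    """Closed-form least-squares complex amplitude for a fixed frequency."""
    n = np.arange(len(x))
    return np.mean(x * np.exp(-1j * omega0 * n))

def reduced_cost(x, omega0):
    """Cost J with the amplitude already optimized out for this omega0."""
    n = np.arange(len(x))
    A_hat = amplitude_given_freq(x, omega0)
    return float(np.sum(np.abs(x - A_hat * np.exp(1j * omega0 * n)) ** 2))

# Synthetic test signal (all values are illustrative assumptions)
rng = np.random.default_rng(1)
N = 32
n = np.arange(N)
omega_true = 2 * np.pi * 0.2
noise = 0.05 * (rng.standard_normal(N) + 1j * rng.standard_normal(N))
x = 0.8 * np.exp(1j * 0.3) * np.exp(1j * omega_true * n) + noise

# Scan the reduced cost over a dense frequency grid
omegas = np.linspace(-np.pi, np.pi, 4096, endpoint=False)
J = np.array([reduced_cost(x, w) for w in omegas])

# Count strict local minima on the grid: more than one means non-convex
interior = J[1:-1]
is_local_min = (interior < J[:-2]) & (interior < J[2:])
num_local_minima = int(np.sum(is_local_min))

# The global minimum on the grid gives the frequency and amplitude estimates
omega_hat = omegas[np.argmin(J)]
A_hat = amplitude_given_freq(x, omega_hat)
print(num_local_minima, omega_hat, abs(A_hat))
```

The many local minima come from the sidelobes of the finite-length sinusoid's spectrum, which is why a gradient descent started far from the true frequency can stall at the wrong answer, whereas the amplitude step is solved exactly at every trial frequency.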
