
Now consider another example:

![\begin{eqnarray*}

\underline{s}_0 &\isdef & [1,1], \\

\underline{s}_1 &\isdef & [-1,-1].

\end{eqnarray*}](img909.png)

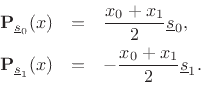

The projections of $\underline{x} = [x_0, x_1]$ onto these vectors are

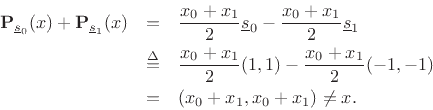

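The sum of the projections is

$$
\frac{x_0 + x_1}{2}\,\underline{s}_0 - \frac{x_0 + x_1}{2}\,\underline{s}_1 = [x_0 + x_1,\; x_0 + x_1] \neq \underline{x}
$$

for any nonzero vector $\underline{x}$ with components $x_0$ and $x_1$.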

Something went wrong, but what? It turns out that a set of $N$ vectors can be used to reconstruct an arbitrary vector in $\mathbf{C}^N$ from its projections only if they are linearly independent. In general, a set of vectors is linearly independent if none of them can be expressed as a linear combination of the others in the set. What this means intuitively is that they must "point in different directions" in $N$-space. In this example $\underline{s}_1 = -\underline{s}_0$, so that they lie along the same line in $N$-space. As a result, they are linearly dependent: one is a linear combination of the other ($\underline{s}_1 = (-1)\,\underline{s}_0$).
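The failure can be checked numerically. The following is a minimal sketch in plain Python, assuming real-valued vectors and the usual orthogonal projection of a vector onto the line spanned by another; the helper names `inner` and `project` and the test vector `x` are illustrative choices, not part of the text.

```python
def inner(u, v):
    """Real inner product of two equal-length vectors."""
    return sum(a * b for a, b in zip(u, v))


def project(x, s):
    """Orthogonal projection of x onto the line spanned by s."""
    coeff = inner(x, s) / inner(s, s)
    return [coeff * si for si in s]


s0 = [1.0, 1.0]
s1 = [-1.0, -1.0]
x = [3.0, 1.0]  # arbitrary test vector, chosen only for illustration

p0 = project(x, s0)
p1 = project(x, s1)
total = [a + b for a, b in zip(p0, p1)]

# For two vectors in 2-space, a zero determinant means linear dependence.
det = s0[0] * s1[1] - s0[1] * s1[0]

print(p0)     # [2.0, 2.0]
print(p1)     # [2.0, 2.0]
print(total)  # [4.0, 4.0], which is not x = [3.0, 1.0]
print(det)    # 0.0
```

Both projections land on the same point of the same line, so their sum double-counts the component of `x` along that line and carries no information about the component perpendicular to it.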