
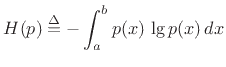

Among probability distributions  which are nonzero over a

finite range of values

which are nonzero over a

finite range of values ![$ x\in[a,b]$](img2795.png) , the maximum-entropy

distribution is the uniform distribution. To show this, we

must maximize the entropy,

, the maximum-entropy

distribution is the uniform distribution. To show this, we

must maximize the entropy,

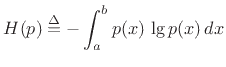

|

(D.33) |

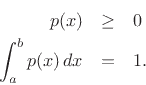

with respect to $p(x)$, subject to the constraints

$$p(x) \geq 0, \qquad \int_a^b p(x)\,dx = 1.$$

Using the method of Lagrange multipliers for optimization in

the presence of constraints [86], we may form the

objective function

|

(D.34) |

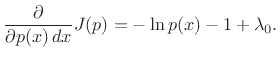

and differentiate with respect to  (and renormalize by dropping the

(and renormalize by dropping the  factor multiplying all terms) to obtain

factor multiplying all terms) to obtain

|

(D.35) |

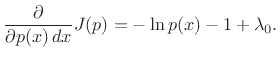

Setting this to zero and solving for  gives

gives

|

(D.36) |

(Setting the partial derivative with respect to  to zero

merely restates the constraint.)

to zero

merely restates the constraint.)

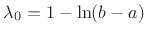
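The stationarity condition can be checked numerically on a discretized version of the problem. The sketch below is an illustration only: it assumes the natural logarithm in the entropy, a single multiplier (called `lam0` here) for the normalization constraint, and arbitrary values for the interval, grid size, and test density. It compares a central finite difference of the discretized objective, divided by the grid spacing `dx`, against the closed-form derivative $-\ln p(x) - 1 + \lambda_0$, and confirms that this derivative vanishes at $p = e^{\lambda_0 - 1}$.

```python
import math

def objective(p, dx, lam0):
    """Discretized objective: entropy sum plus the Lagrange term for normalization."""
    entropy = -sum(pi * math.log(pi) for pi in p) * dx
    constraint = sum(p) * dx - 1.0
    return entropy + lam0 * constraint

def analytic_gradient(pi, lam0):
    """Closed-form derivative with the common dx factor divided out."""
    return -math.log(pi) - 1.0 + lam0

# Hypothetical values chosen only for this check.
a, b, n = 0.0, 2.0, 8
dx = (b - a) / n
lam0 = 0.3
p = [0.2 + 0.1 * i for i in range(n)]  # arbitrary positive samples, not normalized

eps = 1e-6
max_error = 0.0
for i in range(n):
    up = list(p)
    up[i] += eps
    down = list(p)
    down[i] -= eps
    numeric = (objective(up, dx, lam0) - objective(down, dx, lam0)) / (2 * eps)
    # Dividing by dx mirrors "dropping the dx factor" in the derivation.
    max_error = max(max_error, abs(numeric / dx - analytic_gradient(p[i], lam0)))

# The derivative should vanish at the stationary value p = exp(lam0 - 1).
stationary_value = math.exp(lam0 - 1.0)
gradient_at_stationary = analytic_gradient(stationary_value, lam0)

print(max_error, gradient_at_stationary)
```

The test density need not be normalized, since the derivative formula holds at any positive $p$; normalization only enters when the multiplier is chosen.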

Choosing  to satisfy the constraint gives

to satisfy the constraint gives

, yielding

, yielding

![$\displaystyle p(x) = \left\{\begin{array}{ll} \frac{1}{b-a}, & a\leq x \leq b \\ [5pt] 0, & \hbox{otherwise}. \\ \end{array} \right.$](img2803.png) |

(D.37) |

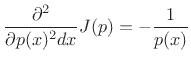

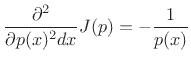
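As a quick sanity check on the normalization step, the following sketch computes the multiplier and the resulting constant density for one example interval. The function name `max_entropy_density` and the endpoint values are hypothetical, and the natural logarithm is assumed, so the constant solution has the form $e^{\lambda_0 - 1}$.

```python
import math

def max_entropy_density(a, b):
    """Return (lam0, height) for the constant density exp(lam0 - 1) on [a, b]."""
    lam0 = 1.0 - math.log(b - a)   # multiplier that enforces unit area
    height = math.exp(lam0 - 1.0)  # constant value of the density on [a, b]
    return lam0, height

a, b = -1.0, 3.0                   # illustrative endpoints
lam0, height = max_entropy_density(a, b)
area = height * (b - a)            # integral of a constant over [a, b]

print(lam0, height, area)
```

For this interval of width 4 the height comes out as $1/(b-a) = 0.25$ and the area as 1, up to floating-point rounding.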

That this solution is a maximum rather than a minimum or inflection point can be verified by ensuring the sign of the second partial derivative is negative for all $x$:

$$\frac{\partial^2}{\partial p(x)^2} J(p) = -\frac{1}{p(x)} \tag{D.38}$$

Since the solution spontaneously satisfied $p(x)>0$, it is a maximum.
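The result can also be illustrated numerically by comparing the entropy of the uniform density with that of other densities supported on the same interval. The sketch below is a spot check, not a proof: it assumes the natural logarithm, uses a midpoint-rule approximation of the entropy integral, and the two comparison densities (a symmetric triangle and a linear ramp) as well as the interval are arbitrary choices made for illustration.

```python
import math

def differential_entropy(pdf, a, b, n=20000):
    """Midpoint-rule estimate of -integral of p(x) ln p(x) over [a, b]."""
    dx = (b - a) / n
    total = 0.0
    for k in range(n):
        p = pdf(a + (k + 0.5) * dx)
        if p > 0.0:                # p ln p tends to 0 as p tends to 0
            total -= p * math.log(p) * dx
    return total

a, b = 0.0, 2.0                    # illustrative interval

def uniform(x):
    return 1.0 / (b - a)

def triangular(x):
    """Symmetric triangle peaking at the midpoint, with unit area."""
    mid = 0.5 * (a + b)
    half = 0.5 * (b - a)
    return (1.0 - abs(x - mid) / half) / half

def ramp(x):
    """Linearly increasing density on [a, b], with unit area."""
    return 2.0 * (x - a) / (b - a) ** 2

h_uniform = differential_entropy(uniform, a, b)
h_triangular = differential_entropy(triangular, a, b)
h_ramp = differential_entropy(ramp, a, b)

print(h_uniform, h_triangular, h_ramp)
```

The uniform entropy should agree with $\ln(b-a)$, and both alternative densities should come out strictly lower.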
