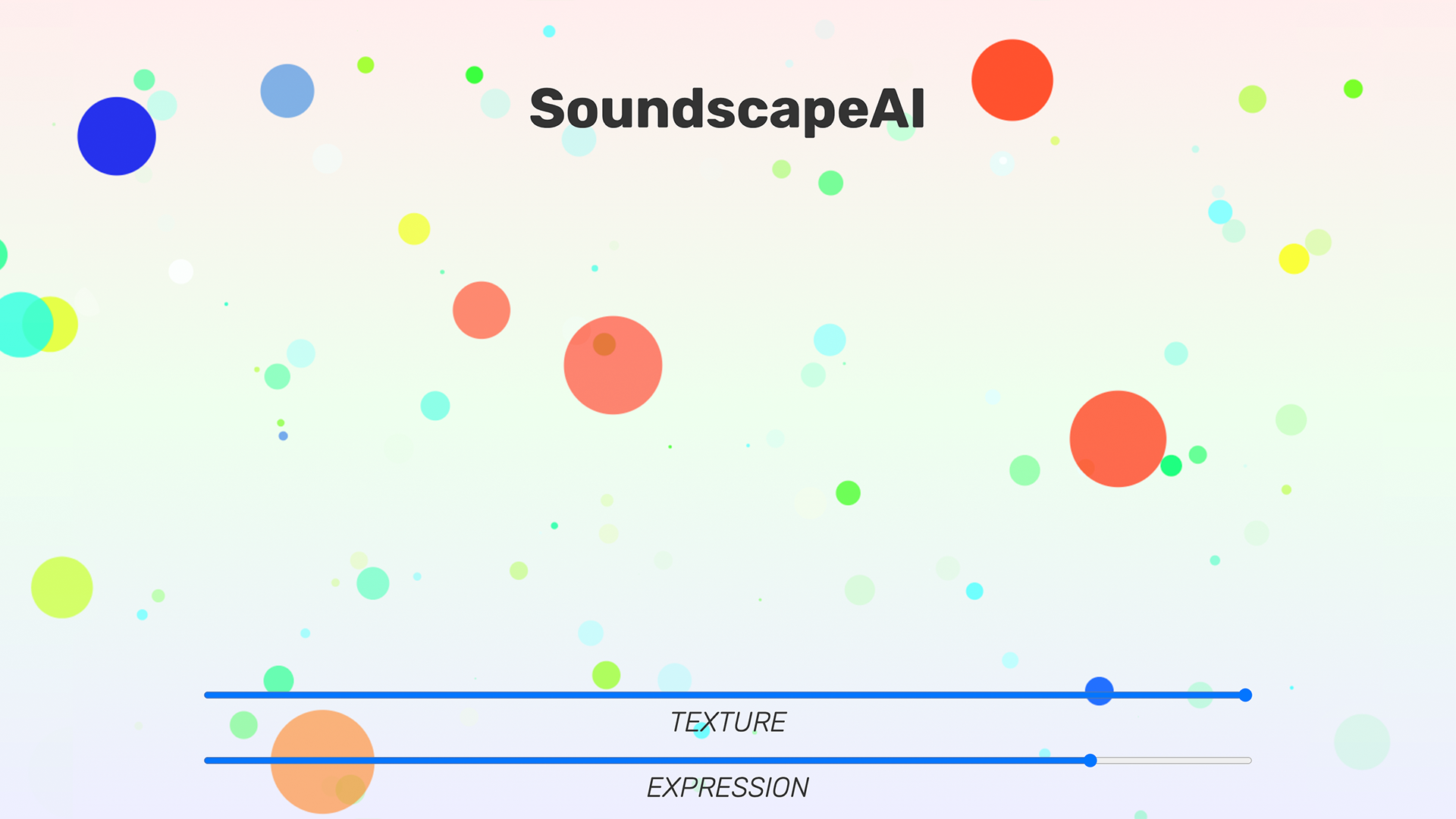

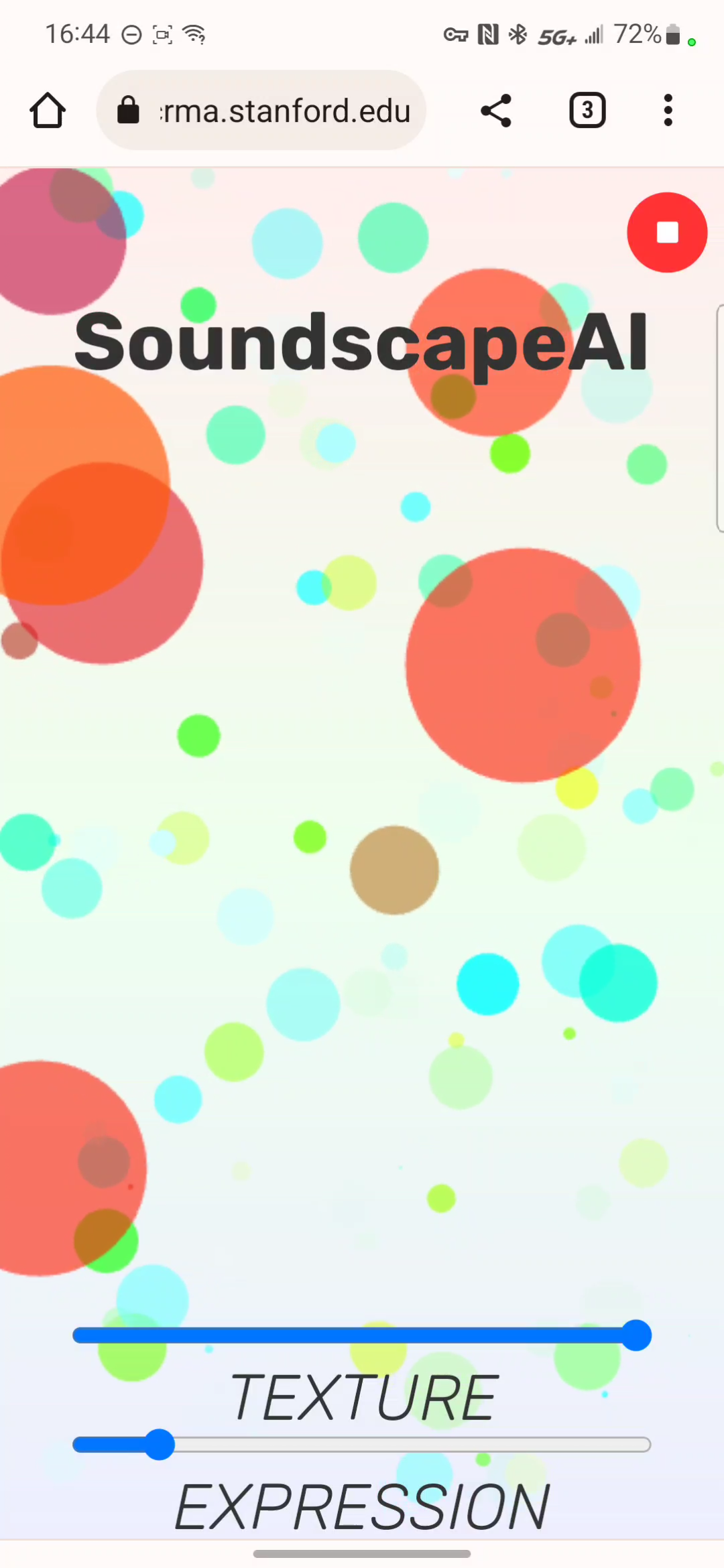

SoundscapeAI

Extracting environmental features for real-time soundscape generation with concatenative synthesis

Try SoundscapeAI: HERE!

Original "Featured Artist" assignment: here

Description:

SoundscapeAI uses concatenative synthesis to generate soundscapes from your environment. Trained on a 90-second piece that I wrote, SoundscapeAI endlessly generates and synthesizes a unique ambient soundscape. It gives you texture and expression controls to fine-tune how it generates. With the flexibility of WebChucK and ChAI, you can take it on the go: use it outside or while studying at the coffee shop, or even have it generate a soundscape for your walk.

Reflection:

In creating SoundscapeAI, I was really struck by how concatenative synthesis could "re-imagine" a song and its sonic atmosphere. While working on phase 3 of the project, I began by just messing around with sound files, namely the RickRoll. Playing around with an extremely high K value (the number of nearest-neighbor windows to choose from), I found that randomly synthesizing windows led to a very interesting soundscape: the sound of RickRoll without the original flow of RickRoll. It still sounded like the original song, yet was completely different. This inspired me to really take advantage of the nature of concatenative synthesis, especially when it's "inaccurate" and can't be controlled well. Creating soundscapes was really rewarding: you could do a lot with very little.

Writing my own piece as training data, I was able to make use of multiple overlapping voices to create one homogeneous blend of sound. With this, I wanted to create not only a unique soundscape based on the environment, but also a unique experience of manipulating this soundscape in real time. Thinking about how, and in what context, someone would listen to this soundscape really challenged me to think about how AI can be an expressive tool. When it isn't perfect, it makes room for the human to explore, and to feel. As a tool, SoundscapeAI is an interface: an interface with sound, and perhaps a lens onto one's environment. But it is also an expression: a controlling of what you want to hear, where and when you choose to hear it, and how you co-creatively react as both artist and audience.

Acknowledgements

Huge thanks to Ge Wang and Yikai Li for taking this project from concept to extensive starter code. Thanks also to the people on ChucK R&D for bringing WebChucK to life. Shoutout to Celeste Betancur: rapid prototyping in the IDE with sliders was super easy and fast.

SoundscapeAI code: download here

Phase 3

Try it here: soundscapeAI - Phase 3

I am building a webpage that lets users select a soundscape and sing, as well as generate soundscape-esque visuals or a static image to share. Currently, it displays the window of concatenative synthesis.

website code: download here

Phase 2

In phase 2, I was able to use WebChucK to process RickRoll audio and generate a RickRoll soundscape. I can now convert mic input into a soundscape for any song that has been trained and loaded.
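
The core lookup of mic-driven concatenative synthesis can be sketched in Python. In the project this runs in ChucK (WebChucK with ChAI); the sketch below only mirrors the idea under stated assumptions: each trained window is stored as a (feature vector, samples) pair, neighbors are ranked by Euclidean distance, one of the K nearest is picked at random (so a larger K gives a looser, more "inaccurate" re-imagining), and chosen windows are overlap-added with a periodic Hann taper. All function names are hypothetical, and extracting features from the mic is left out.

```python
import math
import random

def distance(a, b):
    """Euclidean distance between two feature vectors of equal length."""
    return math.sqrt(sum((x - y) ** 2 for x, y in zip(a, b)))

def k_nearest(query, database, k):
    """Indices of the k database windows whose features are closest to `query`."""
    order = sorted(range(len(database)), key=lambda i: distance(query, database[i][0]))
    return order[:k]

def hann(n):
    """Periodic Hann taper; copies spaced n/2 samples apart sum to 1."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * j / n) for j in range(n)]

def synthesize(mic_frames, database, k, hop, seed=0):
    """For each mic feature frame, pick one of the k nearest trained windows
    at random and overlap-add its tapered samples into the output.

    Assumption (hypothetical layout): `database` is a list of
    (feature_vector, samples) pairs built from the trained song,
    and every window has the same number of samples.
    """
    rng = random.Random(seed)
    win_len = len(database[0][1])
    taper = hann(win_len)
    out = [0.0] * (hop * (len(mic_frames) - 1) + win_len)
    for f, features in enumerate(mic_frames):
        idx = rng.choice(k_nearest(features, database, k))  # random pick among K
        for j, s in enumerate(database[idx][1]):
            out[f * hop + j] += s * taper[j]
    return out
```

With `k=1` the output always follows the closest match; raising `k` trades fidelity to the input for variety. A hop of half the window length keeps the Hann-tapered windows summing to one, which avoids level dips at window boundaries.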

code: download here and read README.txt

Phase 1

In phase 1, I did music genre classification using the following 41 feature dimensions: Flux, ZeroX, RMS, and RollOff (1 dimension each), MFCC (13 dimensions), and SFM (24 dimensions). With these features, and an extraction time of 25 seconds sliced into 5 windows, I got a cross-validation accuracy of around 50%, compared to a baseline accuracy of 40%. For 10 categories, I thought this was pretty good: it allowed relatively accurate classification while keeping the embedding space fast and low-dimensional. The most difficult part was learning how all the unit analyzers in ChucK worked. After trying many different combinations of UAnas, I decided 50% was pretty good for GOFAI. Trying my classifier on real music in real time made me think otherwise. But for phase 1, that was enough to get me started.
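
The bookkeeping behind these numbers can be sketched in Python, assuming the per-group features have already been extracted (in the project, by ChucK's Flux, ZeroX, RMS, RollOff, MFCC, and SFM unit analyzers). The sketch checks that the groups add up to 41 dimensions, classifies with a plain Euclidean k-nearest-neighbor vote, and estimates accuracy with an interleaved k-fold split. The group order, the choice of classifier, the fold scheme, and all function names are assumptions for illustration; how the 5 windows per clip are combined is not modeled.

```python
import math
from collections import Counter

# Dimension of each feature group as listed above: 1+1+1+1+13+24 = 41.
# Assumption: groups are concatenated in this fixed order.
FEATURE_DIMS = {"flux": 1, "zerox": 1, "rms": 1, "rolloff": 1, "mfcc": 13, "sfm": 24}

def assemble(groups):
    """Concatenate per-group feature lists into one 41-dim vector."""
    vec = []
    for name, dim in FEATURE_DIMS.items():
        values = groups[name]
        if len(values) != dim:
            raise ValueError(f"{name}: expected {dim} values, got {len(values)}")
        vec.extend(values)
    return vec

def knn_classify(query, train, k):
    """Majority label among the k (vector, label) training pairs nearest to query.
    Assumption: Euclidean k-NN stands in for whatever classifier was actually used."""
    nearest = sorted(train, key=lambda ex: math.dist(query, ex[0]))[:k]
    return Counter(label for _, label in nearest).most_common(1)[0][0]

def cross_validate(data, k, folds):
    """Accuracy over `folds` interleaved train/test splits; each example is tested once."""
    correct = 0
    for f in range(folds):
        test = data[f::folds]
        train = [ex for i, ex in enumerate(data) if i % folds != f]
        correct += sum(knn_classify(x, train, k) == y for x, y in test)
    return correct / len(data)
```

Keeping the vector this small is what makes k-NN lookups cheap enough for real-time use; the trade-off is that a handful of summary features may not separate 10 genres well, which matches the roughly 50% accuracy reported above.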

code: download here and read README.txt