The control scheme for Rabbit Ears is as follows:

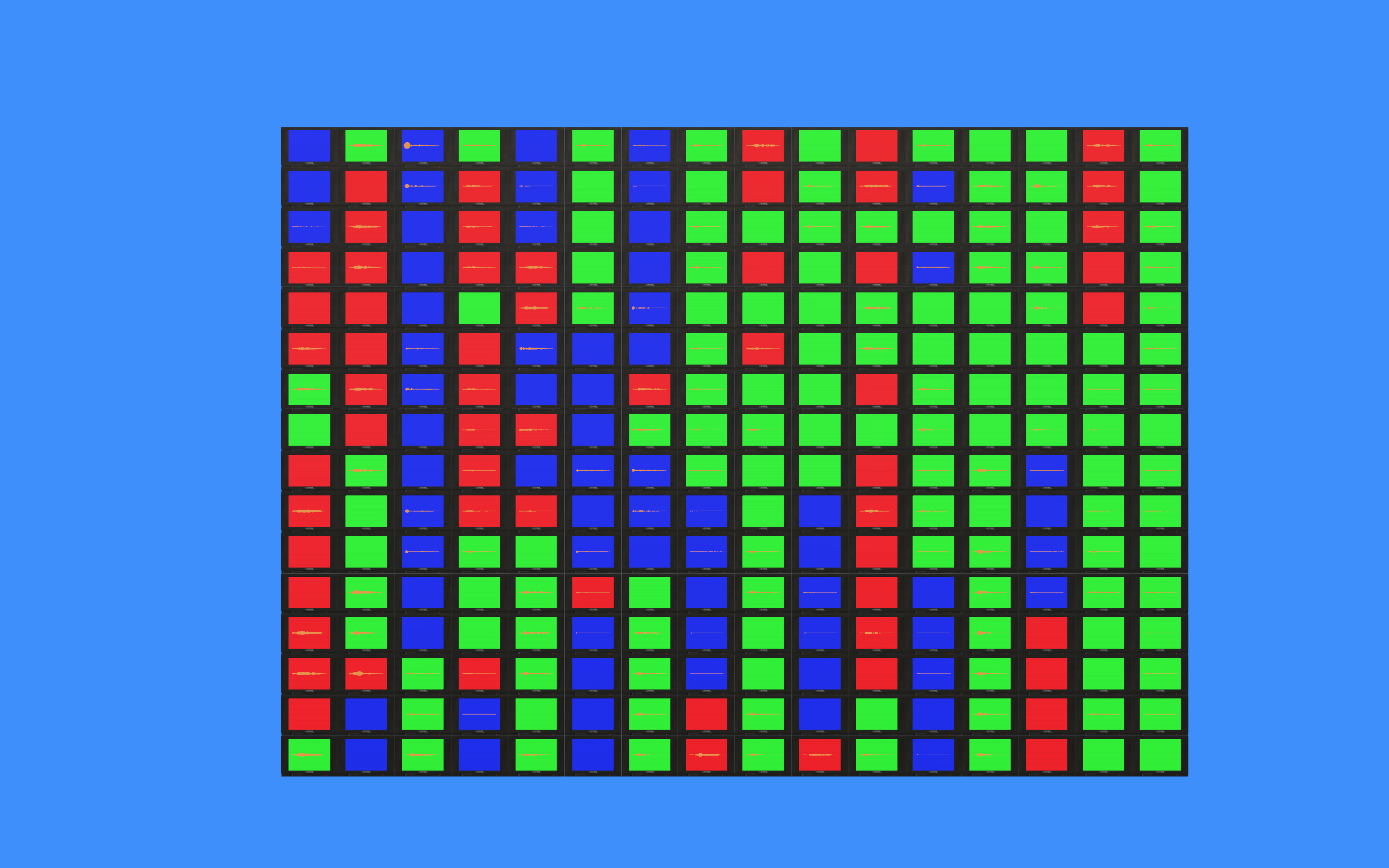

The original idea for this project came from my real-world experience building glitch art installations with CRTs. The idea of using a repeated waveform as a stand-in for TV static came first, with the spectral ball representation emerging as a counterpoint. The system has gone through many iterations, but most of the changes you'll see compared to the version shown below stem from the advice "Less is more, but also more is more." Once I had the blueprint for creating one of these TVs, I experimented with ways to drastically increase the number of objects on screen, and made the content of each screen less draw-heavy to compensate. Instead of trying to cram the whole spectral history onto one screen, each screen now represents one frame of that history, so the history is more of an emergent property of the system as it grows over time. I also liked the analogy to cellular division, so each time the stack grows, the amount of static on each screen decreases as it is "shared" among its descendants.
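To make the "one frame per screen" and "shared static" ideas concrete, here is a minimal, engine-agnostic sketch in Python rather than the project's actual Unity/ChucK code. The names `TVStack`, `static_budget`, `push_frame`, and `static_per_screen` are hypothetical, and splitting a fixed budget evenly across screens is an assumption about how the sharing could work.

```python
from collections import deque


class TVStack:
    """Toy model of a growing stack of TV screens (all names hypothetical)."""

    def __init__(self, static_budget=1200, max_screens=16):
        # Total number of static points shared by every screen (assumed fixed).
        self.static_budget = static_budget
        # One spectral frame per screen; the newest frame sits at index 0.
        self.frames = deque(maxlen=max_screens)

    def push_frame(self, spectrum):
        """Add a new screen showing the latest spectrum frame."""
        self.frames.appendleft(list(spectrum))

    def static_per_screen(self):
        """Split the static budget evenly among the current screens."""
        count = len(self.frames)
        return self.static_budget // count if count else 0

    def screens(self):
        """Describe what each screen should draw this tick."""
        share = self.static_per_screen()
        return [
            {"age": age, "spectrum": frame, "static_points": share}
            for age, frame in enumerate(self.frames)
        ]
```

Each call to `push_frame` adds a screen, and every existing screen's share of static shrinks accordingly, so the total drawn stays roughly constant no matter how tall the stack gets.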

The difficulties along the way were plentiful and frequently frustrating. Learning to debug in Unity is a whole new skill set, and I certainly got plenty of practice with this project. The main overarching constraint was lag as the number of objects on screen increased, and I worked around it using the ideas described above.

I want to take a second here to thank Ge and Kunwoo for their knowledge and generosity in getting us started on the project, and especially Kunwoo for his continual and prompt assistance on the class Discord. I'd also like to thank the Unity documentation and forums for helping me get unstuck at countless points along the way.

Wow, what a long way this has come since the screenshot below, when I was simply trying to line up a CRT in the camera for visual effect, hah! This has been a lot of fun to work on and super rewarding, but also super I-Want-To-Pull-My-Hair-Out at times. Getting the action to play out "on screen" was one of the first big hurdles, until I figured out the best way to find the corners of a plane. I also had to fight some battles with local vs. global positioning, do lots of number tweaking to find the right parameters, and last but not least, build the keyboard input system that allows each TV to be toggled (which is still not quite in perfect working condition, but has become one of those imperfect things that feels like it really fits).
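For anyone fighting the same local-vs-global battle, here is a rough sketch of the math behind finding a plane's corners in world space. It is written in plain Python rather than Unity C#, and it is not the code used in the project. The function name `plane_corners_world` and its parameters are hypothetical, and it assumes the plane lies flat in its local XZ plane, is centred on its own origin, and is only rotated about the vertical axis. The default `half_extent` of 5 assumes a Unity-style plane primitive that is 10 units across at scale 1.

```python
import math


def plane_corners_world(position, yaw_degrees, scale, half_extent=5.0):
    """Return the four world-space corners of a flat, Y-up plane.

    position    -- (x, y, z) of the plane's centre in world space
    yaw_degrees -- rotation about the vertical (Y) axis only (assumption)
    scale       -- (scale_x, scale_z) applied in local space
    half_extent -- half the unscaled side length of the plane mesh
    """
    px, py, pz = position
    sx, sz = scale
    yaw = math.radians(yaw_degrees)
    cos_y, sin_y = math.cos(yaw), math.sin(yaw)

    corners = []
    for lx, lz in [(-1, -1), (1, -1), (1, 1), (-1, 1)]:
        # 1. Scale the local corner.
        x = lx * half_extent * sx
        z = lz * half_extent * sz
        # 2. Rotate it about the Y axis.
        wx = x * cos_y + z * sin_y
        wz = -x * sin_y + z * cos_y
        # 3. Translate it into world space.
        corners.append((px + wx, py, pz + wz))
    return corners
```

The order matters: scale, then rotate, then translate. In Unity itself, `Transform.TransformPoint` performs this same local-to-world conversion on a local-space point, so the sketch is mainly useful for seeing why a corner computed in local coordinates lands somewhere unexpected once the TV has been moved or resized.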

Where do we go from here? The two big things I want to fix in this current iteration are the imperfect keyboard control and the terrible amount of lag that has developed with all the draw calls happening on screen. Once those issues are handled, I'm considering adding a "secret" feature where if every TV is turned to the same channel, they all merge into a more complicated variation of themselves (I'm thinking something like a galaxy swirl for the static, maybe adding some fluid dynamics to the spectral stuff). After that, it's all narrative!

Here is my progress up to now! I successfully imported and remapped a CRT 3D model from the Unity Asset Store, and experimented A LOT with the parameters to get the basic audio visualizer "displayed" on screen. There were several hiccups to troubleshoot along the way - the main one being that the model was built for a different rendering pipeline than the URP, so the shaders/textures were broken on import. The parameters for the URP shader didn't align with what was originally used, so I had to guess and revise which parts of the shader each texture map should attach to. From there, it was a lot of parameter tweaking on scale and location for the TV and camera. I'm currently running into an issue where the scale is too large for the camera to render properly, so those parameters are what I'll tweak next. From here I think I want to add a black "screen" to the TV to reduce the reflectance, and then group the visualizer/TV GameObject into a prefab, so I can script my custom displays and replicate the CRT model into a whole array.

I've had a really enjoyable time learning Chunity so far. Graphics and graphical interfaces have felt out of reach up to now because I had psyched myself out about not having the proper credentials or skills, like 3D modelling. While I do agree with Ge that my initial reaction to Unity was kind of like "Ew, gross", when I dove into the tutorials and realized that making things happen with graphics was exactly the same as coding anything else, it was actually kind of a euphoric moment. I had put up a barrier between myself and something that I knew would probably interest me, all because of preconceived notions I had about the thing - probably a good life lesson to take away here. Anyways, I'm having a lot of fun, and I'm excited to keep going, so thanks to Ge and Kunwoo for helping me get started!

I believe we are also supposed to mention where our thinking stands with the Visualizer project. I had an idea during class, before our whole "Make it read" conversation, that I no longer think really fits the parameters of the assignment, but I want to give it more thought to see how I could at least take inspiration from it or rework it into a more applicable form. The idea was to make an iTunes-style visualizer (so more abstract) by training a GAN on footage of glitch video art my friends and I have made, so that it generates a fuzzier, more analog version of that footage, and then using the waveform and spectrum data to traverse the latent space of the model. I think it would be really visually interesting, and the GAN glitch model is something I've been thinking about building for a while, but at this point the idea needs some revision to see if there's a way to make it READ.