Deus in Machina is an exploratory audio-visual instrument where YOU are the god of the machine. Both the synth and visual engines have been designed to maximize your experience of almighty power. Feel the pounding drone synth respond to your slightest touch, and send down your holy light in an attempt to win converts - but you never know how the tides of history might turn. Keeping your pantheon close by your side is important, as each new deity causes chaos and division among the populace. The feedback between your heavenly choir and your fleetingly mortal subjects encourages you to explore, finding new sounds that lead to new worlds and new worlds that lead to new sounds. The lack of visual feedback for auditory changes is intentional, and invites you to learn and master the symbolic language of the instrument.

The synth engine and visual history are controlled via multitouch, with support for up to 5 fingers. The number of fingers currently active steps through the automata settings for the current mode, and changes in your finger positions are mapped to that mode's control parameters. The modes are as follows:

This milestone was all about maximalism. With Milestone 1, I was able to define the basic audiovisual components of the system and the interactions that would drive them. However, both the synth engine and the cellular automata were very skeletal, so this week I wanted to beef them up significantly. There are now 9 different control variations, each represented by a different gradient scheme and control set for the cellular automata (which now have more parameters). The synth engine is much deeper to allow for 9 different dimensions of control. The leftmost 9 letter keys on a QWERTY keyboard (QWEASDZXC) trigger the different modes, which are as follows:

There are also several quality-of-life upgrades included as part of Milestone 2. The performance of the system has been dramatically improved via the use of MaterialPropertyBlocks. The reset functionality is also more aligned with the core control scheme and is now triggered by a mouse click. Visual feedback now tightens the audiovisual loop and lets you observe what the control inputs are doing.
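As a rough, language-agnostic illustration of the control scheme described so far (nine keys select a mode, the finger count selects an automata setting, and finger movement drives parameters), here is a minimal Python sketch. It is not the project's Unity code: the names `Touch`, `mode_for_key`, `automata_setting`, and `control_deltas` are hypothetical, and it assumes touch positions are normalized to 0..1 and reported in a stable order from frame to frame.

```python
from dataclasses import dataclass

# The nine mode keys named in the text, in keyboard order.
MODE_KEYS = "QWEASDZXC"
MAX_FINGERS = 5  # multitouch limit mentioned in the text


@dataclass
class Touch:
    x: float  # assumed normalized to 0..1
    y: float  # assumed normalized to 0..1


def mode_for_key(key: str, current_mode: int) -> int:
    """Return the mode index (0-8) for a key press, or keep the current mode."""
    if len(key) != 1:
        return current_mode
    index = MODE_KEYS.find(key.upper())
    return index if index >= 0 else current_mode


def automata_setting(touches: list[Touch]) -> int:
    """The number of active fingers (0-5) selects the automata setting."""
    return min(len(touches), MAX_FINGERS)


def control_deltas(previous: list[Touch], current: list[Touch]) -> list[tuple[float, float]]:
    """Per-finger movement since the last frame, for fingers present in both frames."""
    return [(c.x - p.x, c.y - p.y) for p, c in zip(previous, current)]
```

How each delta maps onto a specific synth or automata parameter is left out here, since that mapping differs per mode.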

Now that the system is deep, my main goal is to make sure it has the nuance that I want: that the control schemes are mapped out properly to create the sounds I want, and that everything is attuned for crafting a compelling narrative at performance time.

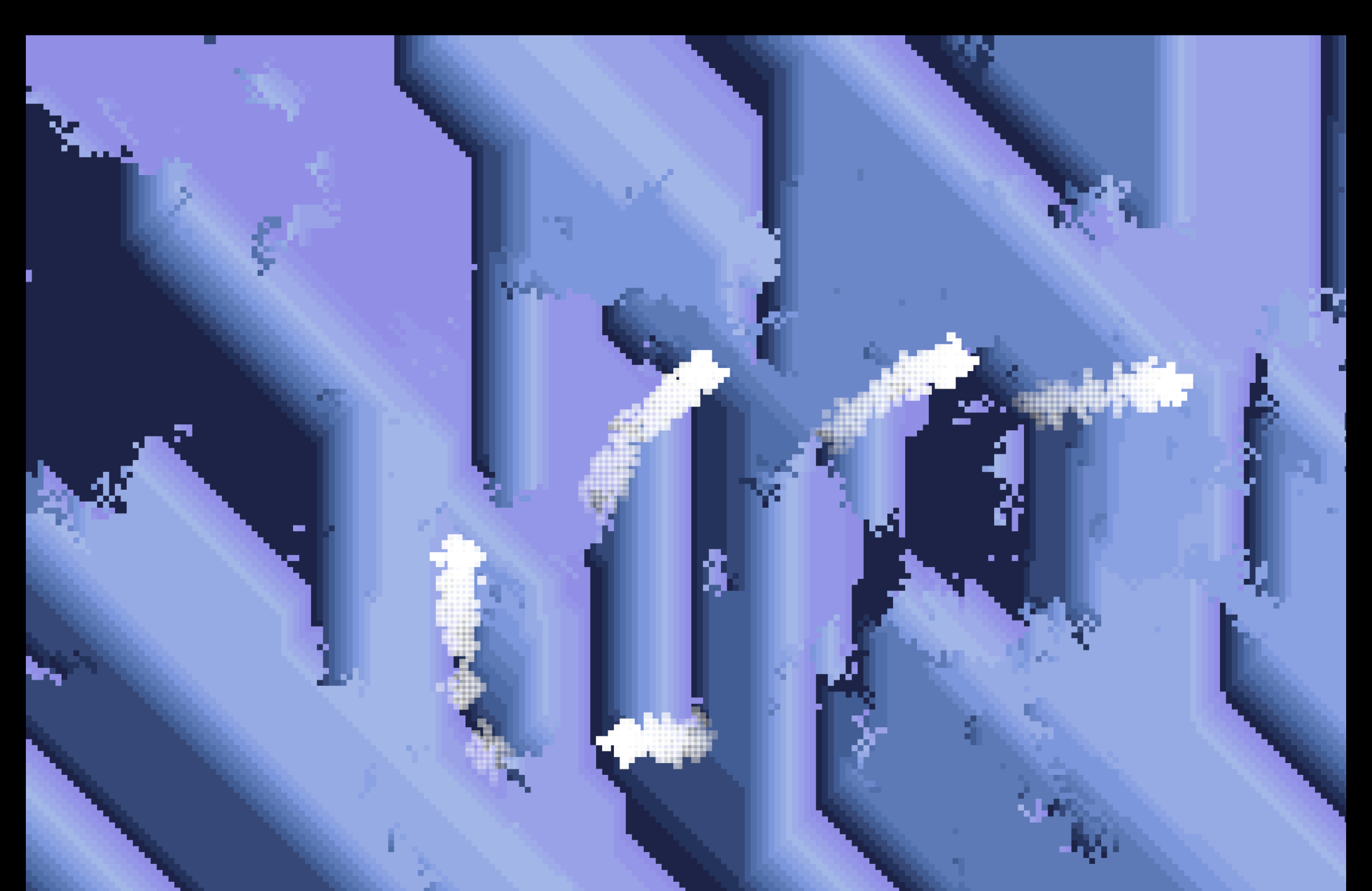

In thinking further about the idea (#1 below) that I wanted to develop, I really homed in on the experience of power that I found central to the interaction I wanted to design. I had sort of just slapped a graphics idea on a mostly sonic experience when originally brainstorming, but I knew the experiential loop needed to be tighter, so I thought about what sorts of graphics would reinforce this idea and settled on cellular automata. As a user of the system, you are not merely wielding this great sonic power, but you are also overseeing the lives of thousands of tiny cells. Now that I have a rough draft of the core experience and the interplay between touch and keyboard controls, it's all about making the system more nuanced - feedback loops between audio/visuals, more parametric control, a deeper synth engine, etc.
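For readers unfamiliar with cellular automata, here is a minimal Python sketch of one update step. The write-up does not say which rule the instrument uses, so this assumes a Conway-style "life-like" rule on a wrap-around grid, with the `birth` and `survive` neighbor counts exposed as parameters (the kind of knob a control mode could plausibly turn). The function name and parameters are illustrative only.

```python
def step(grid, birth=frozenset({3}), survive=frozenset({2, 3})):
    """Advance a 2D grid of 0/1 cells by one generation.

    Assumed rule: a dead cell becomes alive if its live-neighbor count is in
    `birth`; a live cell stays alive if its count is in `survive`.
    Edges wrap around, so the grid behaves like a torus.
    """
    rows, cols = len(grid), len(grid[0])
    nxt = [[0] * cols for _ in range(rows)]
    for r in range(rows):
        for c in range(cols):
            # Count the eight surrounding cells, wrapping at the edges.
            neighbors = sum(
                grid[(r + dr) % rows][(c + dc) % cols]
                for dr in (-1, 0, 1)
                for dc in (-1, 0, 1)
                if (dr, dc) != (0, 0)
            )
            alive = grid[r][c] == 1
            rule = survive if alive else birth
            nxt[r][c] = 1 if neighbors in rule else 0
    return nxt
```

Changing `birth` and `survive` changes the character of the whole population, which is one way a small gesture could have large consequences for the cells.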

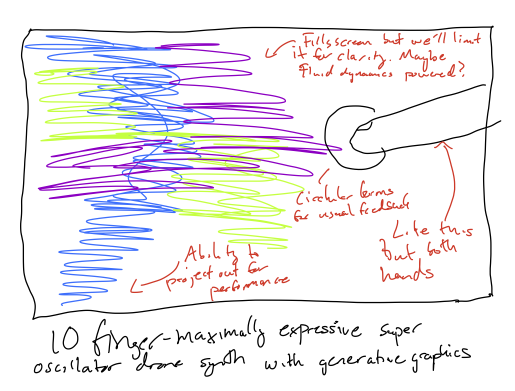

My first idea is inspired by an organismic drone synth called the Lyra-8. The interface would be multitouch (since my sketch, I've revised down from 10 fingers to 5 so I can use a trackpad), and maximally expressive at the subtlest of gestures. The main thing I want the user to feel is raw power when they touch it, like hitting a 20-note chord on the world's biggest pipe organ or something. The graphics would interact with the user's gestures - I'm thinking fluid dynamics maybe.

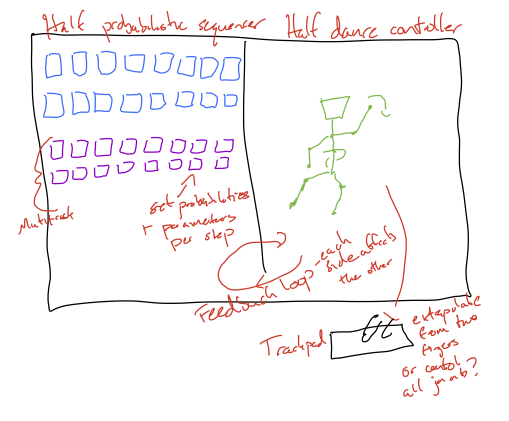

This idea revolves around a hybrid music and dance controller interface with a tight feedback loop between the two sides, so that the things you do to the music affect the animation of the dancer on screen, and the gestures you use to control the dancer (again some sort of multitouch) also affect the sequence you've programmed. I'm thinking the sequencer will be a standard multitrack, 16-step design, but with probabilistic control and per-step parameter adjustments. One of the key questions I currently have with this design is how to orchestrate the controls for the dancer (do you use two fingers as the feet and extrapolate? Or something more complex?)
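To make the sequencer idea concrete, here is a minimal Python sketch of a multitrack, 16-step sequencer where each step has its own firing probability and its own parameter overrides. This is only one possible reading of "probabilistic control and per-step parameter adjustments"; the `Step`, `Track`, and `tick` names, and the example parameter keys, are hypothetical.

```python
import random
from dataclasses import dataclass, field

STEPS = 16  # steps per track, as described in the text


@dataclass
class Step:
    probability: float = 0.0  # chance (0..1) that this step fires
    params: dict = field(default_factory=dict)  # per-step overrides, e.g. {"velocity": 100}


@dataclass
class Track:
    name: str
    steps: list = field(default_factory=lambda: [Step() for _ in range(STEPS)])


def tick(tracks, position, rng):
    """Return (track name, params) for every step that fires at this position."""
    fired = []
    for track in tracks:
        step = track.steps[position % STEPS]  # the pattern loops every 16 steps
        if rng.random() < step.probability:
            fired.append((track.name, dict(step.params)))
    return fired
```

A step with probability 1.0 always fires and a step with probability 0.0 never does, so a conventional fixed pattern is just a special case. Passing in the random generator makes a performance reproducible from a seed.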

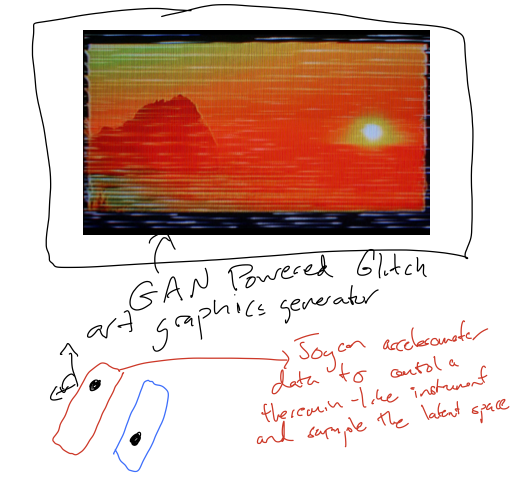

My final idea, and probably the one that I'm most interested in doing (barring a little research to see if Unity even supports it), is to train a GAN model on glitch art visuals made by myself and some friends. I would use it to create generative graphics in Unity by traversing the latent space of the model using accelerometer data from a pair of Switch controllers, which would also control a theremin-inspired instrument.
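As a sketch of what "traversing the latent space" with accelerometer data could look like, the Python below nudges a latent vector along a fixed random direction per accelerometer axis. Everything here is an assumption for illustration: the function names, the six inputs (three axes from each of two controllers), and the linear mapping. The GAN generator that would turn the latent vector into an image, and the controller input itself, are not shown.

```python
import math
import random


def make_directions(latent_dim, n_inputs, seed=0):
    """Build one fixed random unit direction in latent space per accelerometer axis."""
    rng = random.Random(seed)
    directions = []
    for _ in range(n_inputs):
        v = [rng.gauss(0.0, 1.0) for _ in range(latent_dim)]
        norm = math.sqrt(sum(x * x for x in v))
        directions.append([x / norm for x in v])
    return directions


def traverse(z, accel, directions, rate=0.05):
    """Return a copy of latent vector z, moved along each direction
    in proportion to the matching accelerometer reading."""
    moved = list(z)
    for reading, direction in zip(accel, directions):
        for i, d in enumerate(direction):
            moved[i] += rate * reading * d
    return moved
```

Calling `traverse` once per frame and feeding the result to the generator would make still controllers hold the image steady and sharp movements push it quickly through the model's output space.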