|

Rob Hamilton @ ccrma

Ph.D. Computer-based Music Theory and Acoustics

rob [at] ccrma [dot] stanford [dot] edu

|

Ph.D. Dissertation

My doctoral dissertation Perceptually Coherent Mapping Schemata for Virtual Space and Musical Method is available through the Stanford Library at this permanent URL.

Stanford Library pURL: [86.7 MB]

purl (.pdf)

File under "Wha???"

In an apparent attempt to appeal to the "YouTube" generation, Stanford commissioned a series of "promotional" clips, shown during televised football broadcasts, focusing on the technological achievements of Stanford persons... unfortunately, CCRMA founder John Chowning's discovery of FM synthesis was one of the achievements selected.

download: [6.4 MB]

synthesizer.mp4

rob => RPI;

Starting in the Fall of 2015, I've accepted a position at Rensselaer Polytechnic Institute (RPI) as Assistant Professor of Music and Media in the Rensselaer Department of the Arts. I can now be found on the rpi-webs @ homepages.rpi.edu/~hamilr4, via email at hamilr4 [at] rpi [dot] edu, and physically at my office: 307 West Hall, 110 Eighth Street, Troy, New York, 12180

Carillon

Carillon was premiered by the Stanford Laptop Orchestra on June 30, 2015 at the Bing Concert Hall at Stanford University. Soloists engaged the virtual instrument wearing Oculus Rift head-mounted displays and controlled the Carillon with hand gestures tracked using the Leap Motion.

- More info on the work: https://ccrma.stanford.edu/~rob/carillon
- Carillon Video: https://youtu.be/N6Me8B7Wst8
- Unreal Demo download: carillondemo.zip (255 MB)
- Demo Instructions: README.txt

Designing Musical Games Summer Workshop

Come learn how to design and develop musical games at our 2015 CCRMA Summer Workshop, Designing Musical Games::Gaming Musical Design. Running from July 20 through July 24, 2015, the workshop takes an intensive approach to elements of gaming and dynamic musical systems that can be combined to create unique musical gaming experiences. Link Minecraft to Pure Data for a networked interactive performance, build game prototypes in Unreal using Open Sound Control, Oculus Rifts and Leap Motion, and embed libPD into a Unity project and compile it to an iOS device.

Workshop micro-site: ~rob/workshops/2014/designingmusicalgames

GDC 2014

My talk from the 2014 Game Developers Conference (GDC 2014) on Procedural Music, Virtual Choreographies and Avatar Physiologies is now available on the GDC Vault.

Link: http://www.gdcvault.com/play/1020752/Procedural-Music-Virtual-Choreographies-and

Ph.D. Dissertation

My doctoral dissertation is currently available through the Stanford Library and can be found at this permanent URL:

- pURL: http://purl.stanford.edu/ts761kv5081
- title: Perceptually Coherent Mapping Schemata for Virtual Space and Musical Method
- abstract: Our expectations for how visual interactions sound are shaped in part by our own learned understandings of and experiences with objects and actions, and in part by the extent to which we perceive coherence between gestures which can be identified as "sound-generating" and their resultant sonic events. Even as advances in technology have made the creation of dynamic computer-generated audio-visual spaces not only possible but increasingly common, composers and sound designers have sought tighter integration between action and gest ...
- additional materials: http://purl.stanford.edu/yy758rc6782
- oral defense slides: .pdf

OSCCraft

The source code and a ready-to-use mod .jar can be downloaded from GitHub.

github: https://github.com/robertkhamilton/osccraft

ECHO::Canyon

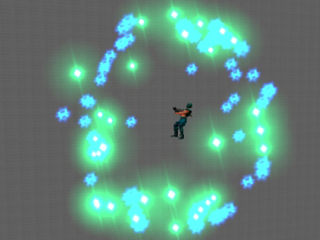

ECHO::Canyon was premiered at CCRMA's 2013 Music and Games concert, on April 25, 2013 in the CCRMA Stage. ECHO::Canyon is the first UDKOSC work to make use of UDKOSC's OSC player and pawn scripting features. All audio for this work is synthesized procedurally in real time from data pulled from each virtual actor's gesture and motion through the virtual environment. Data sources for each synthesized instrument/voice range from coordinate position in 3D space to individual bone locations and rotations on the avatar itself. In this manner, macro gestures (of the actor in relation to the environment) as well as micro gestures (composed of gestures made through animation and motion by the actor's body parts) can be used to control sound and music. The fantastic artistic design, sculpting, modelling and animation are all the work of Chris Platz.

- Demo Capture Video: https://www.youtube.com/watch?v=0dZ3K8ClZII
- Concert Video: UStream
- Paper: Sonifying Game-Space Choreographies, from NIME 2013
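As a rough illustration only (this is not the code used in ECHO::Canyon), the split between macro and micro gestures could be sketched in Python along these lines. The function names, the coordinate range and the frequency range are all assumptions made up for the example: height in the environment drives pitch, and a single bone rotation drives amplitude.

```python
import math


def scale(value, lo, hi):
    """Clamp value into [lo, hi] and normalise it to the range 0..1."""
    return (min(max(value, lo), hi) - lo) / (hi - lo)


def height_to_frequency(z, z_min=0.0, z_max=1000.0, f_min=110.0, f_max=880.0):
    """Macro gesture: map height exponentially, so equal climbs give equal pitch intervals.

    The z range and frequency range are hypothetical values.
    """
    return f_min * (f_max / f_min) ** scale(z, z_min, z_max)


def rotation_to_amplitude(angle_deg, max_angle=90.0):
    """Micro gesture: map one bone rotation (in degrees) to an amplitude of 0..1."""
    return math.sin(0.5 * math.pi * scale(abs(angle_deg), 0.0, max_angle))


def synth_params(position, bone_angle_deg):
    """Combine both gesture scales into one hypothetical parameter set for a voice."""
    x, y, z = position
    return {
        "frequency": height_to_frequency(z),
        "amplitude": rotation_to_amplitude(bone_angle_deg),
    }
```

The exponential height mapping is a design choice for the sketch: pitch perception is roughly logarithmic in frequency, so a linear mapping would make the upper half of the space sound compressed.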

Social Composition: Musical Data Systems for Expressive Mobile Music

In Leonardo Music Journal vol. 21, our paper Social Composition: Musical Data Systems for Expressive Mobile Music, written with Jeff Smith and Ge Wang, outlines the technologies and methodologies used across Smule's mobile applications.

Links:
- http://www.leonardo.info/isast/journal/toclmj21.html

Abstract: This article explores the role of symbolic score data in the authors’ mobile music-making applications, as well as the social sharing and community-based content creation workflows currently in use on their on-line musical network. Web-based notation systems are discussed alongside in-app visual scoring methodologies for the display of pitch, timing and duration data for instrumental and vocal performance. User-generated content and community-driven ecosystems are considered alongside the role of cloud-based services for audio rendering and streaming of performance data.

Multi-modal musical environments for mixed-reality performance

Multi-modal musical environments for mixed-reality performance, written with Juan-Pablo Caceres, Chryssie Nanou and Chris Platz, is now available in the latest Journal on Multimodal User Interfaces (Springer).

Links:
- http://www.springerlink.com/openurl.asp?genre=article&id=doi:10.1007/s12193-011-0069-1

Abstract: This article describes a series of multi-modal networked musical performance environments designed and implemented for concert presentation at the Torino-Milano (MiTo) Festival (Settembre musica, 2009, http://www.mitosettembremusica.it/en/home.html) between 2009 and 2010. Musical works, controlled by motion and gestures generated by in-engine performer avatars, will be discussed with specific consideration given to the multi-modal presentation of mixed-reality works, combining both software-based and real-world traditional musical instruments.

Designing Musical Games Summer Workshop

Resources, schedule and information about our 2014 CCRMA Summer Workshop entitled Designing Musical Games::Gaming Musical Design are online. Held from July 28, 2014 through August 1, the workshop took an intensive approach to elements of gaming and dynamic musical systems that could be combined to create unique musical gaming experiences. Attendees linked Minecraft to Pure Data for a networked interactive performance, built game prototypes in Unity3D using OSCSharp and even succeeded in embedding libPD into a Unity project and compiling that to an iOS device.

Workshop micro-site: ~rob/workshops/2014/designingmusicalgames

In C Variations for MadPad (iPhone and iPad)

To explore the concepts of crowd/cloud-sourcing musical performance content and inspiration I started the In C Variations project using Nick Kruge's MadPad app for iPhone and iPad. Users can generate their own sets of cells, and share them in the MadPad app. The first sets we used for the Sept. 13 UNO Mobile Phone performance can be downloaded here:

- Ukelele: http://smule.com/mix/hUgwZ Video Link:

DAFx-11 @ IRCAM

I presented an overview of work from the Music in Virtual Worlds group (encompassing projects realized in UDKOSC, q3osc, and Sirikata+) for DAFx-11's "Versatile Sound Models for Interaction in Audio–Graphic Virtual Environments: Control of Audio-graphic Sound Synthesis" satellite workshop at IRCAM in Paris on Sept. 23. Slides and video from the session are being hosted on the Topophonie website. Links:

Virtual Music Week 2011: University of Nebraska, Omaha

After a year off, I again served as the Artist-in-Residence for the University of Nebraska, Omaha's 2011 Virtual Music Week (September 9 - 14), giving a series of concerts and lectures to the great UNO students. This year focused on recent work on UDKOSC and the impact of social music making as seen through the lens of Smule. Highlights included:

Video Links:

Special thanks to Jeremy Baguyos, The Spire Foundation, the Nebraska Arts Council, the UNO Department of Music, and the School of Interdisciplinary Informatics.

Advancements in Actuated Instruments

Our work with the Feedback Resonance Guitar was recently published in the journal Organised Sound, vol. 16, issue 2, entitled Performance Ecosystems. Additionally, there was a nice write-up in New Scientist Magazine.

Links:
- Direct Link to the article in Organised Sound 16/2 (account required): Advancements in Actuated Musical Instruments
- New Scientist preview link: Augmented instruments add virtual input to live music

UDKOSC: Unreal-based environments for Musical Performance

UDKOSC is a modified Unreal Development Kit (UDK) based virtual environment with customized particle and user behaviors hooked into an implementation of OSCPACK for real-time OSC input and output.

Links:
- Tele-Harmonium performance video (Milano, Italy): http://vimeo.com/15792555
- UDKOSC Development wiki @ ccrma: http://ccrma.stanford.edu/wiki/UDKOSC
- Nice explanatory interview with UDKOSC user Graham Gatheral (sound designer): http://designingsound.org/2011/06/audio-implementation-greats-11/

MiTo Festival 2010 - Play Your Phone - An Interactive Concert Experience

CCRMA's Music in Virtual Worlds group presented a two-day concert event as part of the 2010 MiTo Festival of Music (Milano/Torino, IT) entitled "Play Your Phone", September 7 and 8 at the Polytechnic University at Bovisa, Milano. The concert featured innovative new works utilizing air-quality sensor data, Twitter, Smule's Leaf Trombone, the Berdahl Resonance Guitar, and UT3OSC, as well as performances by pianist Chryssie Nanou and guitarist Robert Hamilton. New works were premiered by Chris Chafe, Juan-Pablo Caceres, Luke Dahl, Robert Hamilton, Chris Platz, Carr Wilkerson, Spencer Salazar, and Jieun Oh.

Some Press/Links:
- MiTo Festival Video: Concert Recap Video
- MiTo Flickr Set: Play Your Phone - Flickr
- La Repubblica Photos: Play Your Phone: An Interactive Concert
- 2LifeCast Flickr Set: 2LifeCast Photos

Resonance/Feedback Guitar

For my recent work with the Feedback/Resonance Guitar, designed by CCRMA's Ed Berdahl, I've been experimenting with an iPhone-based control interface, using multi-touch gestures to drive the guitar's electro-magnets, as well as an 8-channel Ambisonic spatialization patch (Max/MSP) to make use of the guitar's 6 string piezo pickups.

A few recent performance clips:
- Siren Cloud pt. III (by Chris Chafe): 20100709_siren_cloud_III_MiTo.aiff
- Siren Cloud pt. V: 20100709_siren_cloud_V_MiTo.aiff
- ...of giants (duet with Jeremy Baguyos, double bass; 8-channel Ambisonic spatialization): ofgiants.wav
- ...of giants (solo; 8-channel Ambisonic spatialization): ofgiants-modulations.mp3
- Metaphysics of Notation (by Mark Applebaum; duet with Fernando Lopez-Lezcano): metaphysicsofnotation.mp3

MiTo Festival 2009 - Mixed Reality Performance: una serata in Sirikata

In collaboration with the Stanford Humanities Lab, the Sirikata project and the MiTo Festival of Music (Milano/Torino, IT), CCRMA's Music in Virtual Worlds group presented two concerts of networked musical works centered on and in the Sirikata Environment, entitled Mixed Reality Performance: una serata in Sirikata, on September 12 and 13. Performers on both acoustic and electronic instruments located in Stanford, CA and Milano, Italy met online in a custom Sirikata environment before a live audience in Milan. New works were presented by Juan-Pablo Caceres and Robert Hamilton with performances by pianist Chryssie Nanou, as well as a new realization of Terry Riley's In C performed online by members of the Stanford Laptop Orchestra featuring Chris Chafe, Charles Nichols and Debra Fong.

Some Press/Links:
- Project overview page: MiTo @ CCRMA
- MiTo Festival Video (Introduction): Intro + In C clips
- Performance Video: Dei Due Mondi (9/13/2009)
- Performance Video: Canned Bits Mechanics (9/13/2009)
- Performance Video: In C (9/13/2009)
- Stanford Musicians on Two Continents Meet in Second Life for Live Performance

Jord og Himmel: for q3osc and Laptop Orchestra

Jord og Himmel is the second work realized in q3osc and was recently presented on two concerts in two distinct forms: first, as a co-located networked duet between Robert Hamilton (Stanford) and Mario Mora (Chile) on June 3, 2009 as part of the net vs. net concert at CCRMA, and second, as a large-scale work for 16 q3osc performers on a June 4th concert at Dinkelspiel Auditorium by the Stanford Laptop Orchestra.

- net vs. net performance video: Jord og Himmel (vimeo)
- slork performance video: Jord og Himmel (youtube)

Metaverse U Conference: Music in the Metaverse

The Second Annual Metaverse U Conference was held at Stanford on May 29th and 30th. Juan-Pablo Caceres and I presented our ongoing work in networked musical performance in a lecture entitled Music in the Metaverse: Networked Musical Performance with Virtual Environments, touching on the Soundwire group's streaming audio solutions and q3osc, as well as our work with the Sirikata project. Download Presentation: (.mov)

Virtual Music Week: University of Nebraska, Omaha

I served as the Artist-in-Residence for the University of Nebraska, Omaha's 2009 Virtual Music Week (March 6-12), giving a series of lectures on computer music, focusing more specifically on my work with q3osc, bioinformatic data as a driver for musical systems, CCRMA and Smule. As part of their 2-day concert festivities, saxophonist Austin Sailors performed my is the same... is not the same for alto-saxophone and computer, and I presented my latest composition ...of giants for 6-channel electro-magnetic resonance guitar and interactive computer (with special guest double-bassist Jeremy Baguyos).

AES 35i - Audio For Games: London, UK

Building Interactive Networked Musical Environments Using q3osc was presented at the 35th International Audio Engineering Society Conference in London, UK at the Royal Academy of Engineering.

Smule's Ocarina

I've contributed web and design time to Smule for Ocarina, a highly playable virtual instrument. The Online Score Generator I built for them allows Ocarina users to generate fingerhole tablatures online. There are already more than 1,000 user-generated scores posted online at the Ocarina.smule.com Forum.

For more information on Ocarina visit http://ocarina.smule.com.

ICMC 2008 - Belfast, Ireland

The 2008 International Computer Music Conference (www.icmc2008.net) came and went: Roots, Routes and all:

iNc: a realization of Terry Riley's In C for lOrchestra

iNc was realized in the ChucK language for the April 29, 2008 performance of In C at Stanford University's Dinkelspiel Auditorium, the premiere performance of the Stanford Laptop Orchestra, performing alongside the Stanford New Ensemble, Chris Chafe (celletto), and musicians joining across the internet from the University of Beijing (Beida). The concert was part of the 2008 Stanford Pan Asian Music Festival.

ChucK Files: inC-maui.ck, server-MAUI.ck, instructions.pdf

q3osc: fully-featured osc output for ioquake3

A heavily-modded ioquake3-based performance environment with a full Open Sound Control implementation (oscpack) compiled within. q3osc tracks not only individual game-client xyz data but also individual projectiles as they move through the environment. Modded features like homing projectiles and bouncing projectiles allow for more compositional choices in using the environment.

- Official q3osc page with software (source + binaries), media and information: www.q3osc.org
- q3osc Wiki page: q3osc development page on CCRMA's wiki
- ioquake3.org interviewed me about the project: http://ioquake3.org/2008/06/01/interview-with-robert-hamilton-creator-of-q3osc/

ICMC 2007 - Copenhagen, Denmark

The International Computer Music Conference (www.icmc2007.net) is history. Copenhagen was great but very busy:

iPaq with Java-based OSC client

Using the Mysaifu JVM on an HP iPaq rx1950, with a hacked-up version of the illposed.com JavaOSC classes and demo app, I put together this little mixer interface to play with. It comes in handy for mixing 8-channel Max/MSP or SuperCollider patches from the center of the hall. This is more in the light-client-as-controller use of mobile devices (especially since this iPaq is a bit slow).

- download .jar v0.9: ipaqOSCcontrolv0.9.jar (source to follow)
- download Max/MSP txt file test patch: ipaqOSCcontrolv0.9-Max_Client
- download PD txt file test patch (requires zexy): ipaqOSCcontrolv0.9.pd

Sea Songs by Dexter Morrill - a software recreation

Dexter Morrill's classic interactive work for Soprano and Radio Baton-controlled Digitech TSR-24 real-time effects processor, re-purposed as a Max/MSP-based software emulation, was performed by Maureen Chowning (Soprano/Radio Baton) at CCRMA's 2007 celebration of Max Mathews' 80th Birthday on April 29, 2007. For more information: ccrma concerts page

Sea Songs was performed Friday, June 8 by Maureen Chowning at the Institut International de Musique Electroacoustique de Bourges as part of the Bourges Festival 2007's Friday evening concert honoring Max Mathews' 50 years of computer music [link en Francais].

Maps and Legends: an FPS-based interface for Composition and Improvisation

An interactive multi-channel multi-user networked system for real-time composition and improvisation built using a modified version of the Quake III gaming engine. By tracking users' positional and action data within a virtual space, and by streaming that data over UDP using OSC messages to a multi-channel Pure Data (PD) patch, users' actions in virtual space are correlated to sonic actions in a physical space. Virtual environments designed as abstract compositional maps or representative models of the user's actual physical space are investigated as means to guide and shape compositional and performance choices.

- [ project link... ]
- SEAMUS 2007 Presentation (.html, formatted for Opera full-screen mode)
- external links: Julian Oliver's q3apd
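To make the "positional data over UDP using OSC messages" step concrete, here is a minimal Python sketch of packing an xyz position into an OSC 1.0 message and sending it as a single UDP datagram. It is not the engine code (that lives in the modified Quake III source); the address pattern `/player/<id>/position`, the host and the port are assumptions for illustration, and only float arguments are handled.

```python
import socket
import struct


def osc_string(text):
    """Encode an OSC string: ASCII bytes, null-terminated, padded to a multiple of 4."""
    data = text.encode("ascii") + b"\x00"
    return data + b"\x00" * (-len(data) % 4)


def osc_message(address, *values):
    """Build an OSC 1.0 message whose arguments are all big-endian 32-bit floats."""
    type_tags = "," + "f" * len(values)
    payload = struct.pack(">%df" % len(values), *values)
    return osc_string(address) + osc_string(type_tags) + payload


def send_position(player_id, x, y, z, host="127.0.0.1", port=9000):
    """Send one position update as a UDP datagram and return the raw packet.

    The address pattern, host and port are hypothetical values.
    """
    packet = osc_message("/player/%d/position" % player_id, x, y, z)
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
    return packet
```

Because UDP is connectionless, a dropped packet simply means one missed position update, which suits a continuous stream of coordinates better than a protocol that would stall to retransmit stale data.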

Bioinformatic Response Data as a Compositional Driver

A software system using bioinformatic data recorded from a performer in real time as a probabilistic driver for the composition and subsequent real-time generation of traditionally notated musical scores. To facilitate the generation and presentation of musical scores to a performer, the system makes use of a custom LilyPond output parser, a set of Java classes running within Cycling '74's MAX environment for data analysis and score generation, and an Atmel ATmega16 micro-processor capable of converting analog bioinformatic sensor data into Open Sound Control (OSC) messages.

ICMC 2006 Presentation (.html, formatted for Opera full-screen mode)
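The idea of sensor data acting as a "probabilistic driver" for notated output can be illustrated with a toy Python sketch. This is not the Java/MAX system described above: the pitch set, the weighting rule and the function names are all hypothetical, and each sensor reading is assumed to be already normalised to 0..1. A reading biases a weighted random choice over pitches, and the result is written out as a LilyPond fragment of quarter notes.

```python
import random

# Hypothetical pitch set, written as LilyPond absolute note names.
PITCHES = ["c'", "d'", "e'", "g'", "a'", "c''"]


def pitch_weights(sensor):
    """Weight pitches so low readings favour low notes and high readings high notes."""
    sensor = min(max(sensor, 0.0), 1.0)
    last = len(PITCHES) - 1
    # The small constant keeps every pitch possible, so the sensor biases
    # the choice rather than dictating it.
    return [1.0 - abs(sensor - i / last) + 0.05 for i in range(len(PITCHES))]


def generate_measure(sensor, rng, beats=4):
    """Draw one measure of quarter notes from the sensor-weighted distribution."""
    notes = rng.choices(PITCHES, weights=pitch_weights(sensor), k=beats)
    return " ".join(note + "4" for note in notes)


def lilypond_fragment(readings, seed=0):
    """Turn a list of normalised readings into one LilyPond music expression."""
    rng = random.Random(seed)
    measures = [generate_measure(reading, rng) for reading in readings]
    return "{ " + " | ".join(measures) + " }"
```

Calling `lilypond_fragment([0.1, 0.5, 0.9])` yields three measures whose register tends to rise with the readings; fixing the seed makes a given run repeatable, which is convenient when comparing mappings.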

Triages for flute, clarinet, violin, cello, piano, electric guitar and computer

Commissioned by Stanford University's CCRMA for the 2006 NewStage:CCRMA Festival. The piece was premiered on April 28th at CCRMA's Stage at the evening concert featuring works by John Chowning, Dexter Morrill and a number of CCRMA Alumni and friends. The performance was conducted by Christopher Jones and performed by Nicholas Ong (piano), Graeme Jennings (violin), Stephen Harrison (cello), Sam Williams (electric guitar), Matt Ingalls (clarinet) and Emma Moon (flute).

2006 CCRMA "Penguin-logo" T-shirts

Get 'em while they're still around. Available in black, blue and original yellow, these shirts were printed up for the CCRMA 2006 newStage festival. Everyone who is anyone is wearing one... especially Nando. |