HW 3, Sequencers

Richard L.

Oct 30, 2023

Music 256a, Stanford University

FINAL: Breakdance Beat Taiko

Demo: VIDEO

Download files (tested on macOS 12.5.1)

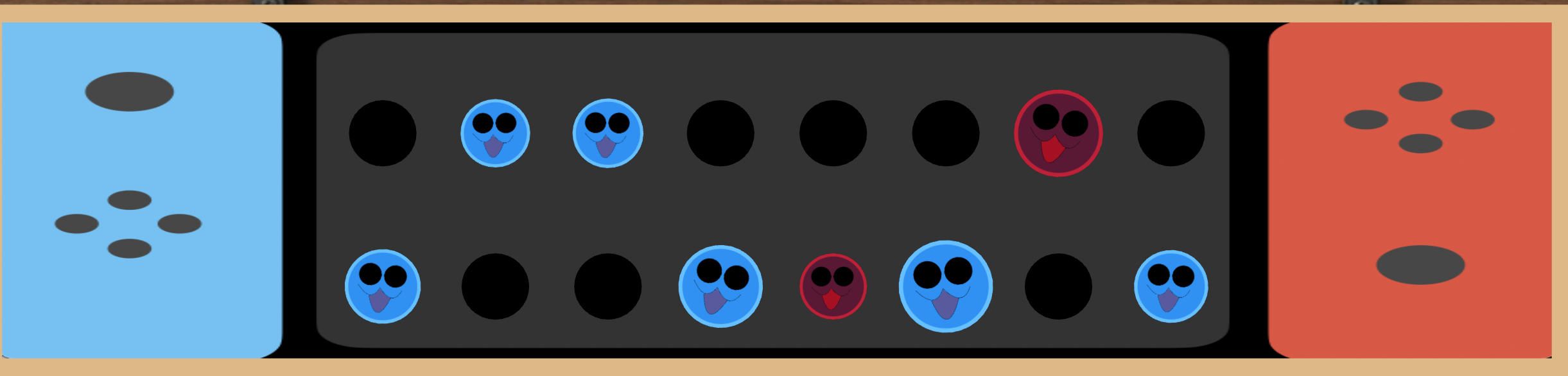

To use this sequencer, download the files, cd to the directory, then run `chuck test.ck` in a terminal. The following controls are important: use the "Switch" interface to sequence the drums; there are two drumbeats on the bottom row before a cycle of the top row. Press the "A" key to trigger two different drum beats and the "D" key to change the direction of the Taiko. Then, whenever you're ready to insert melodies, press "W" and "S" to spawn two sax-playing bunnies!

Design Decisions:

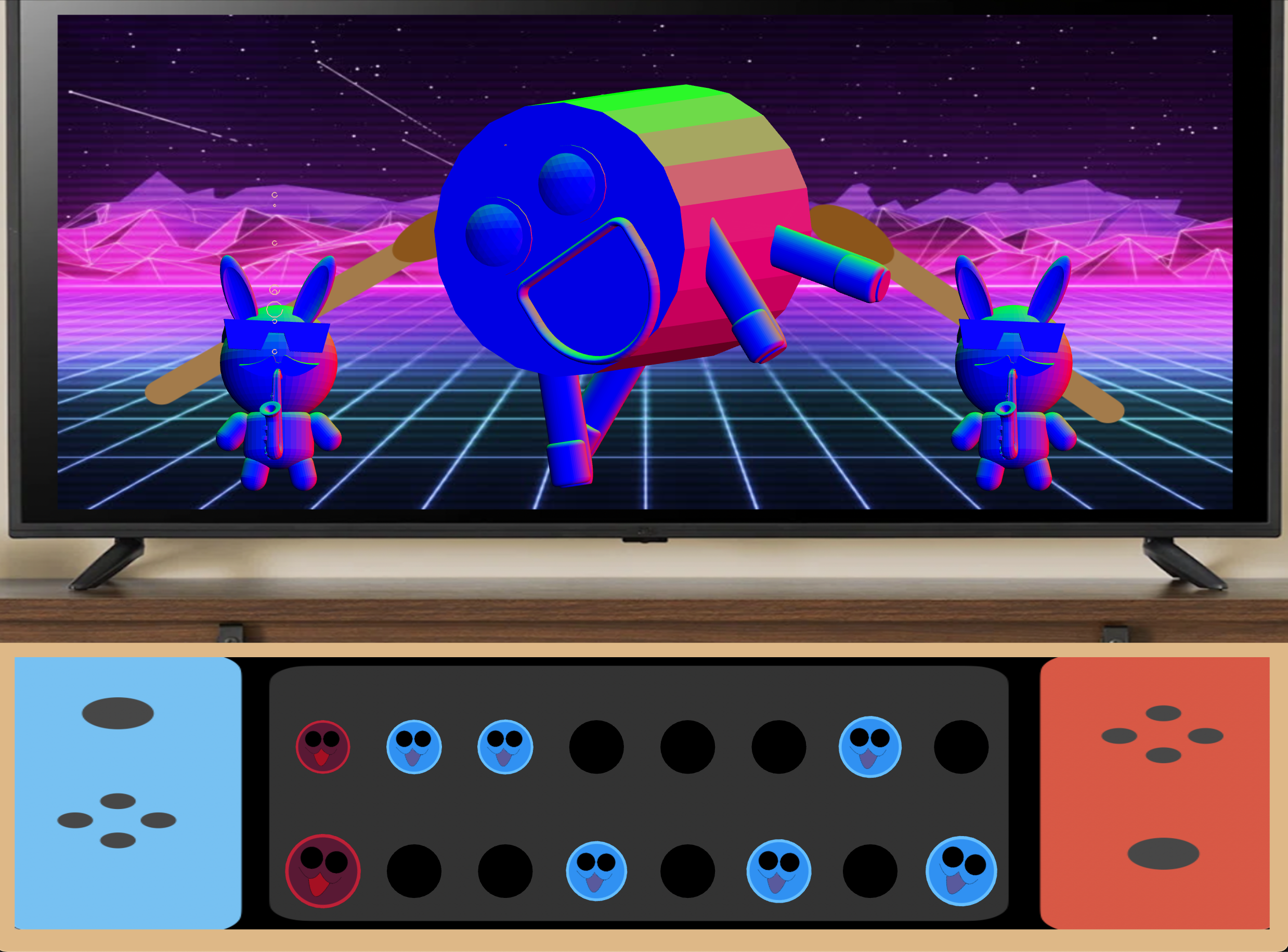

As shown in the image above, the ideas behind the design have changed quite a lot since the initial concept; however, I found myself looping back to Milestone 1 with the addition of animals playing instruments! The system is architected quite loosely, unfortunately. I am still getting used to object-oriented programming, having come from a hardware/signals background, but I am trying to rely on function calls more. So there are update functions that are triggered/sporked to instantiate visuals and sounds concurrently. Much of the drum sequence was sculpted from Andrew Aday's example, but the sounds were adjusted to be more Taiko-esque. The melodic sequencer was based on arpeggiators tested in WebChucK. This was my first time really focusing on making sounds crisp, so I spent a lot of time fine-tuning the impulses on the triggered drum beats. Very hyperpop-y!
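To make the arpeggiator idea concrete, here is a minimal sketch of how one cycle of notes could be generated from a chord. It is written in Python purely for illustration and is not the ChucK code used in this project; the function names `arpeggiate` and `midi_to_freq`, the mode names, and the example chord are all assumptions.

```python
def arpeggiate(chord, mode="up", octaves=1):
    """Return one cycle of MIDI note numbers for the given chord (hypothetical helper)."""
    # Stack the chord over the requested number of octaves, lowest note first.
    span = sorted(n + 12 * o for o in range(octaves) for n in chord)
    if mode == "up":
        return span
    if mode == "down":
        return span[::-1]
    if mode == "updown":
        # Walk back down without repeating the top or bottom note,
        # so the cycle loops cleanly.
        return span + span[-2:0:-1]
    raise ValueError("unknown mode: " + mode)


def midi_to_freq(note):
    """Convert a MIDI note number to frequency in Hz (A4 = MIDI 69 = 440 Hz)."""
    return 440.0 * 2 ** ((note - 69) / 12)


if __name__ == "__main__":
    # C major triad, played up and back down.
    for note in arpeggiate([60, 64, 67], mode="updown"):
        print(note, round(midi_to_freq(note), 2))
```

In a sequencer, each note of the returned cycle would be assigned to successive steps, with the cycle repeating for as long as the melody is active.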

Milestone 2: Taiko on TV

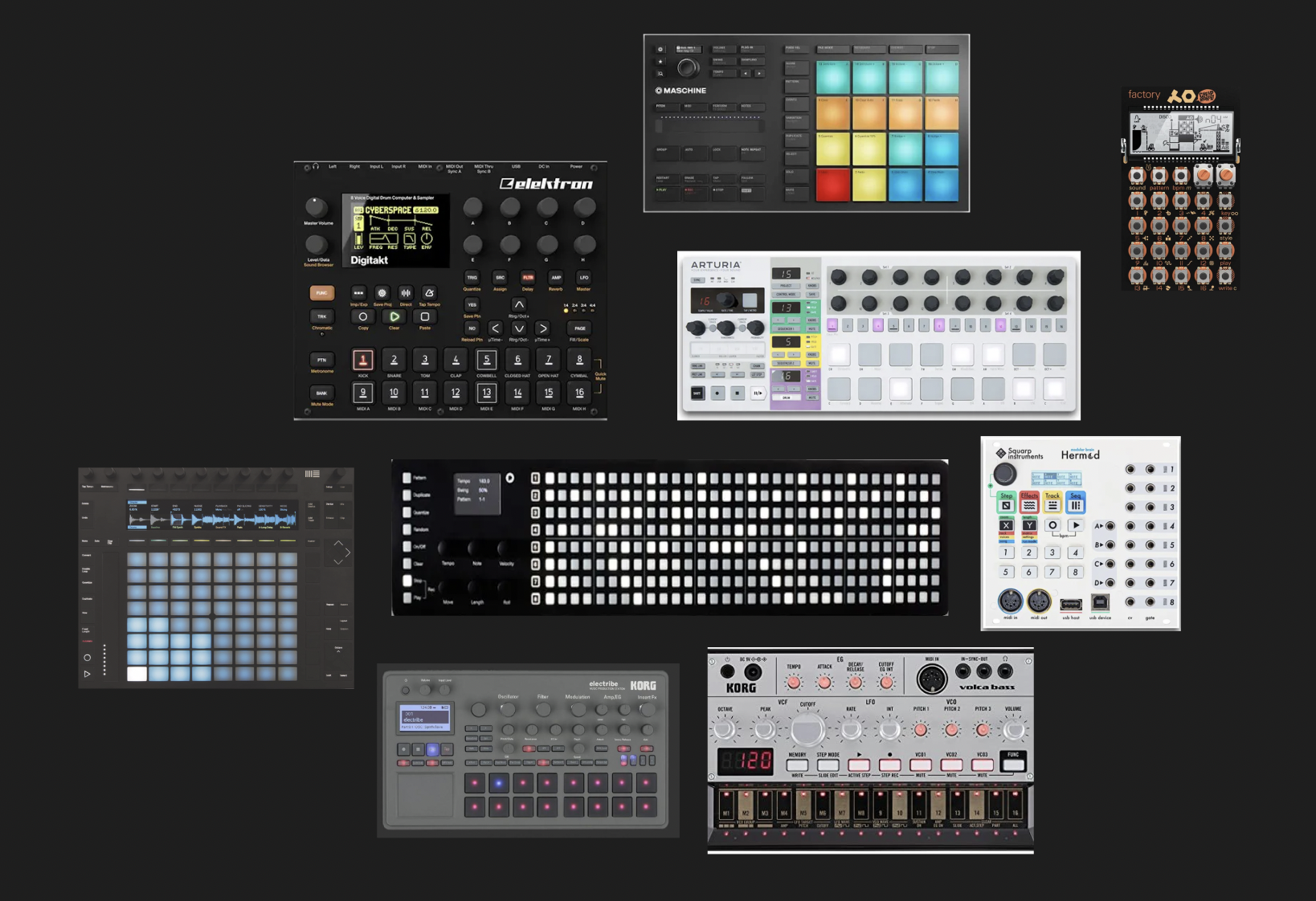

Milestone 1: research on sequencers

Comments on the sequencer landscape: the evolution of sequencers seems to have been a very fluid process of inspiration and borrowing, in which many devices had apparent predecessors. Much of the sequencer interface design seems to have progressed from electro-mechanical, to analog, and now to digital. However, the tables seem to have turned to an extent: hardware models are now attempting to better visualize what is possible in the visual realm.

Within the world of modular synthesizers, I find it interesting how the UI style informs the operation and compositional purpose of each module. There are classic models that dedicate and differentiate control with separate pots/sliders, while hybrid models are malleable and less explicit in how control is distributed. Further, xox styles are keyboard-esque with button rows (e.g. like the Roland TB-303), and Cirklon-ish models have push-button UIs that remap meanings depending on what is activated. Overarching all of these is gate handling, where a modular synth relies on envelopes to determine which routings are possible, as well as which steps to pass through.
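As a rough illustration of the xox-style button row and per-step gates, the sketch below treats each track as a string of steps, where "x" means the gate is open and "." means it is closed. It is a Python sketch under my own assumptions (the function name `step_times`, the pattern format, and the 16-step bar are all hypothetical), not a model of any particular hardware sequencer.

```python
def step_times(pattern, bpm=120, steps_per_beat=4):
    """Return sorted (time_in_seconds, track_name) pairs for every open gate in one bar."""
    step_dur = 60.0 / bpm / steps_per_beat  # duration of one step in seconds
    events = []
    for name, row in pattern.items():
        for i, ch in enumerate(row):
            if ch == "x":  # gate open: this step triggers the track
                events.append((i * step_dur, name))
    return sorted(events)


if __name__ == "__main__":
    # One bar of 16 steps per track; "x" = gate open, "." = gate closed.
    pattern = {
        "kick":  "x...x...x...x...",
        "snare": "....x.......x...",
    }
    for time, track in step_times(pattern):
        print(f"{time:.3f}s  {track}")
```

Toggling a single character in a row corresponds to pressing one button on the row, which is what makes this style of interface so direct to compose with.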

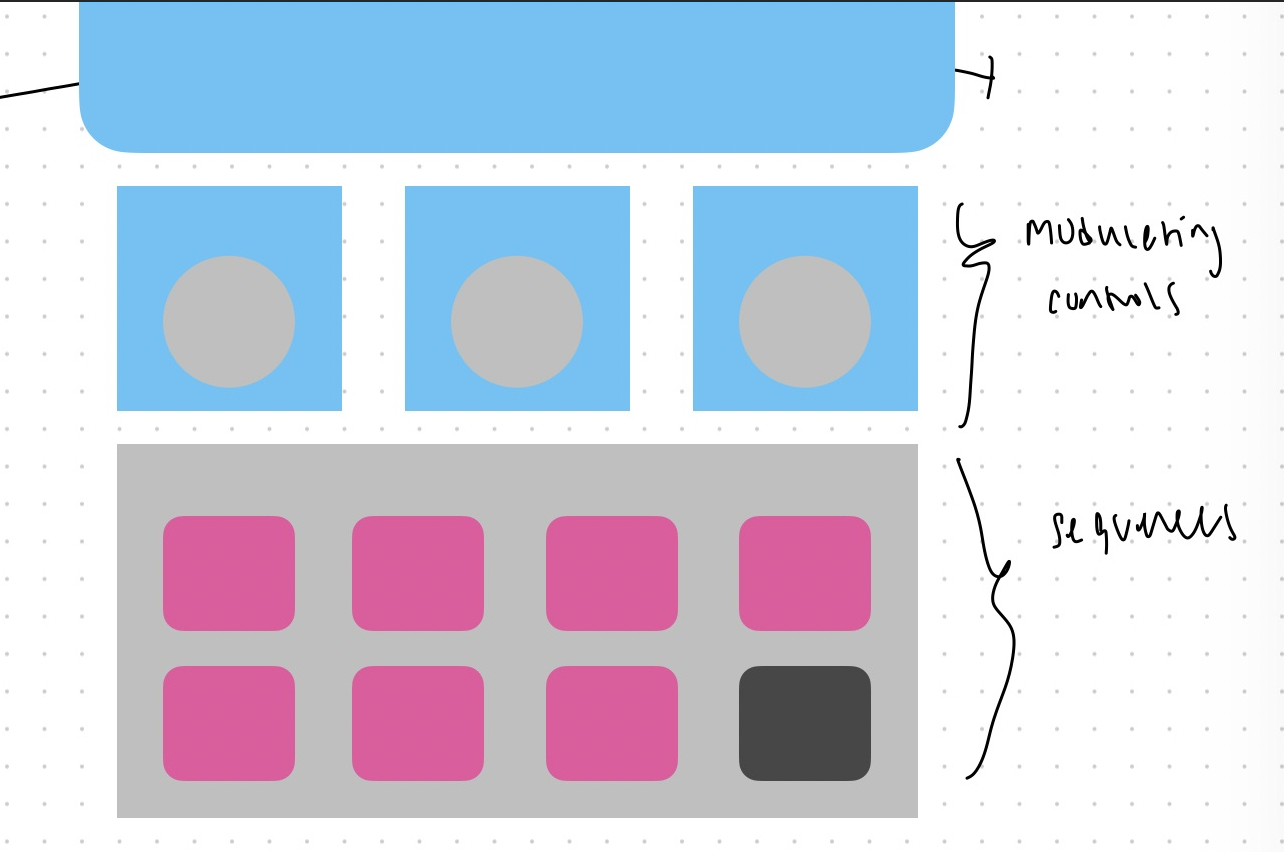

In the context of building out this milestone, I intend to explore the visualization of possibility, and to remove decision paralysis by replacing it with visual flow. The intention is to have one initial sound that is rigid enough not to be drowned out, but monotonous enough to become ingrained in memory, so that someone can then interact with the UI to form new rhythms. I believe this model may use synthesis techniques, especially modal synthesis, to effectively shift the sound from a droning tone to a vibrant, effective, and strongly timed sequence.

Schematics:

Works Cited: