Strange Environments (2018)

At a certain point, I felt stuck working on VRAPL. I felt like I didn't know what to implement next because I wasn't sure why someone would use such a programming language. So, I knew I needed to return to basics and find that out.

I created a series of "strange environments" in VR, focusing on musical interactions that would be impossible in physical reality, and trying to make the virtual environments really "come alive" with a sense that the world would respond to you, but also had its own impetus for existing and acting.

(I also worked on creating "strange instruments" at the same time.)

Crab Surfer

This experience was my first strange environment in VR! It explored a few things:

- A "flying" metaphor for getting around. You move where your gaze is pointing, and the further out your hands are from you, the faster you go.

- Background audio feedback for your current speed. This is useful because it's difficult to gauge visually how fast you're going. It also matters because the reach limit, beyond which extending your hands further has no effect, is kept purposefully close to the body to avoid exhausting the user's shoulder muscles. The audio feedback lets users know when they've hit that limit, so they can hold their arms closer to the body.

- Popping bubbles with your hands (controllers)! It's super satisfying to do this.

- Interacting with an object (still the bubbles) by throwing a projectile. It feels natural to throw something in VR and see it physically come away from your controller, but surprisingly, the instant you add a target into the mix, it starts to feel super unwieldy. It was fun to watch all my crabs milling around and flying through the water, but it was super difficult to actually aim them at any of the bubbles.

- An infinite scrolling landscape.
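
The flying metaphor and its speed cap can be sketched roughly as follows. This is a minimal illustration rather than the project's actual code: the function name, the `max_reach` and `max_speed` values, and the choice to average the two hand distances are all assumptions.

```python
import math

def fly_velocity(gaze_dir, head_pos, left_hand, right_hand,
                 max_reach=0.45, max_speed=6.0):
    """Return (velocity, effort) for gaze-directed flying.

    max_reach (metres) and max_speed (m/s) are made-up values.
    effort is in [0, 1] and could also drive the speed audio feedback.
    """
    # Assumption: speed depends on the average distance of both hands from the head.
    reach = (math.dist(head_pos, left_hand) + math.dist(head_pos, right_hand)) / 2
    # Reaching past max_reach has no further effect, which spares the shoulders.
    effort = min(reach / max_reach, 1.0)
    norm = math.sqrt(sum(c * c for c in gaze_dir))
    if norm == 0:
        return (0.0, 0.0, 0.0), effort
    speed = effort * max_speed
    velocity = tuple(speed * c / norm for c in gaze_dir)
    return velocity, effort
```

Because `effort` saturates at 1, the same value that scales the speed can tell the audio layer when the user has reached the limit.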

Wheebox

Wheebox is a scene where you launch projectiles into a box. The projectiles say "whee" when they are launched. When enough projectiles land in the box, it empties. A granular synthesis algorithm sonifies the emotions of the projectiles while they are in the box.

This project had three goals:

- Make a more satisfying launch metaphor than my previous "throwing crabs" metaphor.

- Explore group behavior in which many objects collectively inform one sound.

- Use some sound synthesis techniques other than additive synthesis and simple playback of a single sampled audio file.

I determined the project would be successful if:

- Using it is more satisfying than launching crabs was (yup!).

- I like the sound I made and it sounds cool (yup!).

- The granular synthesis is somehow meaningfully different depending on the physical setup of the interior of the box / what’s happening (yup! ish. I just did it based on number of projectiles; that seemed enough).

The bottom of the box is split into two halves, each connected to the walls of the box with a hinge joint; these joints are very stable. The two halves are connected to each other via a spring joint. The joint is normally very taut, but when it is time to empty the box, I make the joint very loose, then slowly ratchet it back toward tightness. I was hoping that making the spring very tight would keep the two halves more or less horizontal, but no matter what I did, I couldn't prevent a dip in the middle of the box. Because of this, I had to simplify my ideas for the box's sound, since I wouldn't get very far using the physics data of the projectiles milling around the box (they mostly sit and vibrate a little in the crack).

The box has a minimum and a maximum number of projectiles it can hold. Once it holds the minimum number, the likelihood that it will open increases with each additional projectile, until at the maximum number it opens with 100% probability.
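
One way to express this rule is a linear ramp between the two thresholds. The sketch below assumes the ramp is linear and that the check runs each time a projectile lands; neither detail is stated above, and the function names are hypothetical.

```python
import random

def open_probability(count, min_count, max_count):
    """Chance that the box opens, given how many projectiles it holds."""
    if count < min_count:
        return 0.0
    if count >= max_count:
        return 1.0
    # Assumption: probability rises linearly from the minimum to the maximum.
    return (count - min_count) / (max_count - min_count)

def should_open(count, min_count, max_count, rng=random.random):
    """Roll the dice once, e.g. whenever a new projectile lands in the box."""
    return rng() < open_probability(count, min_count, max_count)
```

Since `random.random()` returns values in [0, 1), the box never opens below the minimum and always opens at the maximum.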

I followed a tutorial for how to show an estimate of the launched path but it ended up being mostly a waste of time as the tutorial was very bad; I reimplemented everything from the tutorial from scratch afterward. The path of the projectile is predicted with ~~math~~.
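
For reference, the usual math behind this kind of preview is constant-acceleration kinematics, p(t) = p0 + v0·t + ½·g·t². The sketch below samples that formula at fixed time steps. It is not the project's implementation; the function name, step size, and floor height are assumptions.

```python
def predict_path(start, velocity, gravity=(0.0, -9.81, 0.0),
                 step=0.05, steps=40, floor_y=0.0):
    """Sample points along a ballistic arc until it drops below floor_y."""
    points = []
    for i in range(steps):
        t = i * step
        # p(t) = p0 + v0*t + 0.5*g*t^2, applied per axis
        p = tuple(s + v * t + 0.5 * g * t * t
                  for s, v, g in zip(start, velocity, gravity))
        if i > 0 and p[1] < floor_y:
            break  # stop drawing once the arc goes under the floor
        points.append(p)
    return points
```

The returned points could be fed to a line renderer or a row of small marker objects to draw the estimated path.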

The sound of the slingshot and the projectile "whee!" sound are both just audio files being played back. The slingshot audio file is a short click, pitched up the further back you pull the slingshot. I recorded my own voice saying "whee!" (and used the first take! :) ).
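
A simple way to pitch a sample up with pull distance is to map the pull to a number of semitones and convert that to a playback rate. This is only a sketch: the pull range and the one-octave ceiling are invented values, and the original mapping may well have been different.

```python
def click_playback_rate(pull, max_pull=0.6, max_semitones=12.0):
    """Map slingshot pull distance (metres) to a playback-rate multiplier.

    A rate of 1.0 plays the click at its original pitch; 2.0 is an octave up.
    max_pull and max_semitones are hypothetical tuning values.
    """
    t = min(max(pull / max_pull, 0.0), 1.0)  # clamp to [0, 1]
    return 2.0 ** (t * max_semitones / 12.0)
```

Working in semitones keeps equal increments of pull sounding like equal pitch steps, which a plain linear rate mapping would not.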

The sound of the projectiles inside the box is done using granular synthesis, specifically with LiSa. I ended up combining two canonical scripts for LiSa written by Dan Trueman in order to get tight control over all the parameters I wanted to control through ChucK global variables. I simply linearly interpolate over several parameters, depending on how many projectiles are in the box. Since the box usually empties before its maximum capacity, when I quickly switch the parameters to the maximum upon the box opening, it sounds like the projectiles are crying out in shock.
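
The count-to-parameter mapping can be illustrated like this. The parameter names and endpoint values are hypothetical stand-ins, not the actual LiSa settings, and the sketch is in Python rather than ChucK purely for illustration.

```python
def lerp(a, b, t):
    """Linear interpolation between a and b for t in [0, 1]."""
    return a + (b - a) * t

# Hypothetical endpoint settings for an empty box and a full box.
CALM = {"grain_ms": 120.0, "rate": 0.8, "gain": 0.2}
FULL = {"grain_ms": 20.0, "rate": 2.0, "gain": 0.9}

def grain_params(count, max_count, opening=False):
    """Interpolate every parameter by how full the box is.

    When the box opens, jump straight to the maximum settings, which is
    what produces the sudden "cry of shock".
    """
    t = 1.0 if opening else min(max(count / max_count, 0.0), 1.0)
    return {name: lerp(CALM[name], FULL[name], t) for name in CALM}
```

In a setup like the one described, each resulting value would be written to the matching ChucK global variable whenever the projectile count changes.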

I really like using this. It is very satisfying, and I found myself laughing a lot and saying “whee!” to myself even when I was not using it.

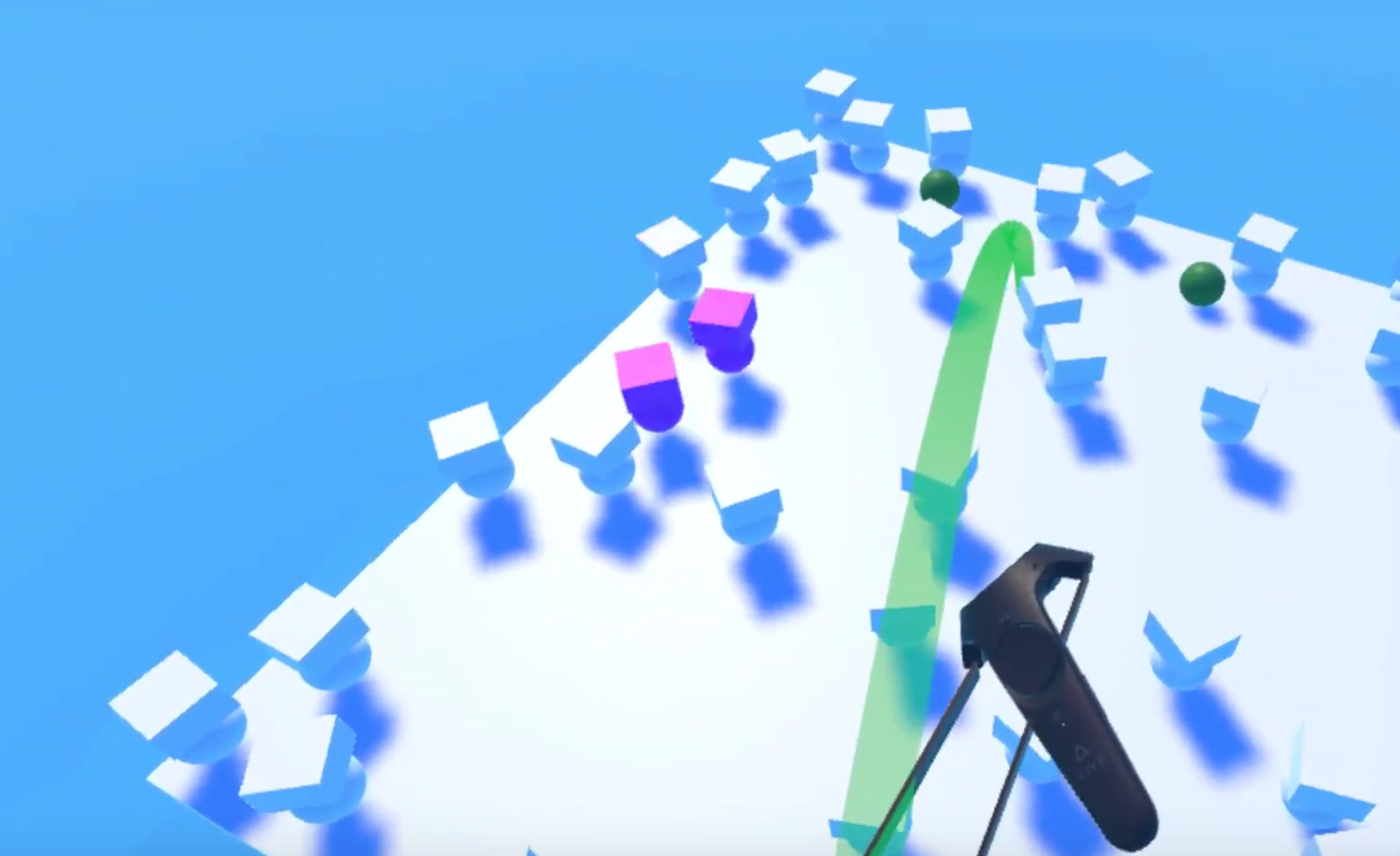

Oh No! Bots

Here I explored a very basic emergent / herd phenomenon with a creature that has its own "animus" (I think of this term as meaning a way of behaving in the world that isn't just doing what you tell it to).

These little "oh no!" bots have only one rule: if a controller, a projectile, or another bot hits them, they need to jump back and yell "oops" or "oh no!". (Additionally, they flash pink, and an invisible wall prevents them from falling off the edge of the platform.)
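
To show how a single rule like this can cascade, here is a toy one-dimensional simulation. It is an assumption-heavy sketch, not the project's physics: bots live on a line, a collision is only checked where a bot lands, each bot reacts once, and all names and distances are invented.

```python
def chain_reaction(positions, first_hit, push_from,
                   jump=1.0, radius=0.3, half_width=5.0):
    """Return (order in which bots yell, final positions) on a 1D platform."""
    pos = list(positions)
    yelled = []
    queue = [(first_hit, push_from)]  # (bot index, position of whatever hit it)
    while queue:
        bot, source = queue.pop(0)
        if bot in yelled:
            continue  # in this sketch each bot reacts only once
        yelled.append(bot)  # this is where the bot would yell and flash pink
        direction = 1.0 if pos[bot] >= source else -1.0
        # Jump away from the hit; the clamp plays the role of the invisible wall.
        pos[bot] = max(-half_width, min(half_width, pos[bot] + direction * jump))
        for other in range(len(pos)):
            touching = abs(pos[other] - pos[bot]) < 2 * radius
            if other != bot and other not in yelled and touching:
                queue.append((other, pos[bot]))
    return yelled, pos
```

For example, `chain_reaction([0.0, 1.0, 2.0], first_hit=0, push_from=-1.0)` knocks each bot into the next, so all three react in order.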

It's pretty satisfying to cause chain reactions in the bots. I look forward to making more satisfying environments in the future that have more than one kind of creature, more complicated creatures, etc.