> HCI

• muggling

• the plank

• the box

> Timbral Analysis

• formant analysis

• orchestration

> Music Cognition

> Music Synthesis

HCI projects from Music 250a & 250b

Muggling ("musical juggling") was a project by Pascal Stang, Jeffery Bernstein, and myself for Music 250a. Our initial concept was to create a simple hand-held interface for creating or teaching music. Early design specifications called for a wireless, ball-type controller that used accelerometers as sensors. We chose juggling as a design metaphor and decided to create a set of wireless juggling balls.

The final product was a single wireless ball controller. It used two pairs of Linx radio transmitter and receiver chips (TXM-418-LC & RXM-418-LC-S), one pair in the base controller and the other in the ball. The "muggling" ball contained four Analog Devices 2-axis accelerometers, used to calculate linear and angular acceleration as well as relative position. The ball also contained a pair of three-color LEDs for visual feedback. Both the base controller and the ball were designed around Atmel microcontrollers.
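One common way to separate linear from angular acceleration is to compare two accelerometers mounted a known distance apart. The sketch below shows that idea for the planar case only. It is not the firmware we ran on the ball. The function name, the symmetric sensor placement, and the spacing value are all assumptions made for illustration.

```python
def planar_motion_from_pair(a_left, a_right, separation_m):
    """Estimate linear and angular acceleration from two sensor readings.

    Assumes (hypothetically) that two accelerometers sit symmetrically about
    the centre of the ball, `separation_m` metres apart, and that both report
    acceleration along the same axis, perpendicular to the line joining them.

    Returns (linear, angular):
      linear  - acceleration of the centre along that axis, in m/s^2
                (gravity is common to both sensors, so it ends up here too)
      angular - angular acceleration about the axis normal to the plane,
                in rad/s^2
    """
    if separation_m <= 0:
        raise ValueError("separation_m must be positive")
    # The part shared by both sensors is the motion of the centre.
    linear = (a_left + a_right) / 2.0
    # The difference comes from rotation: each sensor sees +/- alpha * d / 2.
    angular = (a_right - a_left) / separation_m
    return linear, angular
```

With readings of 1.0 and 3.0 m/s^2 from sensors 0.5 m apart, the function attributes 2.0 m/s^2 to linear motion and 4.0 rad/s^2 to rotation. A real ball would need this on several axes, plus integration and drift handling to get relative position.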

Pure Data (PD) was used to generate audio from the control signals, and several different mapping schemes were created. As a standalone instrument, with movements mapped directly to sound (velocity to pitch, magnitude to amplitude, etc.), the device produced fairly random and uninteresting output. A mode for surround sound panning control was quite useful and intuitive. The most interesting mapping used the ball as a controller for DJ-style scratching. Check out our write-up for more information, or see a short video clip from our presentation here (15MB).
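To give a rough idea of what a direct mapping looks like, here is a minimal Python sketch. The actual mappings were PD patches, so this is only an approximation of the idea. The input ranges, the MIDI pitch range, and the function names are assumptions, and the pan function is a plain two-channel equal-power pan standing in for the surround version.

```python
import math


def scale(value, in_lo, in_hi, out_lo, out_hi):
    """Linearly map value from [in_lo, in_hi] to [out_lo, out_hi], clamped."""
    if in_hi == in_lo:
        raise ValueError("input range must not be empty")
    t = (value - in_lo) / (in_hi - in_lo)
    t = min(1.0, max(0.0, t))
    return out_lo + t * (out_hi - out_lo)


def direct_mapping(speed, accel_xyz, max_speed=5.0, max_accel=10.0):
    """Map speed to pitch and acceleration magnitude to amplitude.

    max_speed and max_accel are hypothetical calibration limits.
    Returns (midi_pitch, amplitude) with amplitude in [0, 1].
    """
    magnitude = math.sqrt(sum(c * c for c in accel_xyz))
    midi_pitch = scale(speed, 0.0, max_speed, 36.0, 96.0)
    amplitude = scale(magnitude, 0.0, max_accel, 0.0, 1.0)
    return midi_pitch, amplitude


def equal_power_pan(position):
    """Turn a position in [-1, 1] into (left_gain, right_gain).

    Equal-power panning keeps left^2 + right^2 constant, so the perceived
    loudness stays roughly the same as the sound moves.
    """
    position = min(1.0, max(-1.0, position))
    angle = (position + 1.0) * math.pi / 4.0
    return math.cos(angle), math.sin(angle)
```

Because every parameter follows the ball directly, any throw changes pitch and loudness at once, which is part of why this mapping sounded so erratic. The pan mapping changes only one perceptual dimension, which may explain why it felt more intuitive.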


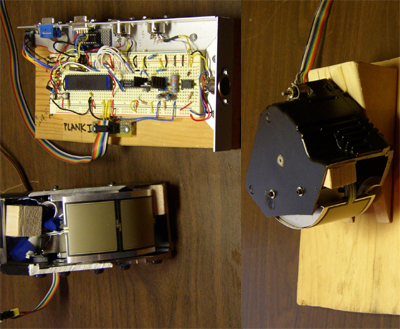

Haptic Feedback for Multimedia Editing - In this ongoing research project I hope to make digital non-linear audio editing simpler and more efficient by introducing meaningful, intuitive haptic feedback through an editing control device. The hope is that adding haptic forces will give the user a stronger physical connection with the editing process and better control. I have chosen "The Plank" for initial work on this project. The Plank is a simple haptic device designed by Bill Verplank and Michael Gurevich that provides force feedback and can be used to create surface illusions for enhanced device control.

The basic functions in an audio editor are zoom, scrub, fade, cut, crop, and mix. Other functions might include volume adjustment, pitch manipulation, time manipulation, positional marking, segment manipulation, and drawing or manipulating waveforms. These operations usually involve a series of repetitive and inefficient mouse movements, with the software's visual interface as the only user feedback. I think that many of them could benefit from haptic feedback.

As a proof of concept, I have implemented a simple application that provides bidirectional communication with and control of the Plank and implements a simple audio scrubber. Forces from the Plank help the user control scrubbing speed. In addition, a vibration generated from the amplitude envelope of the audio material gives a tactile description of the audio being edited.
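The amplitude envelope idea can be sketched with a simple one-pole envelope follower. This is an illustration under my own assumptions, not the code from the proof-of-concept application. The attack and release coefficients and the `max_force` scaling are hypothetical values.

```python
def amplitude_envelope(samples, attack=0.5, release=0.01):
    """Follow the amplitude envelope of an audio signal.

    samples: iterable of floats, nominally in [-1, 1].
    attack / release: smoothing coefficients in (0, 1]; a larger value tracks
    faster. The envelope rises quickly and decays slowly, which tends to give
    a smoother vibration than the raw rectified signal.
    """
    env = 0.0
    out = []
    for s in samples:
        level = abs(s)  # full-wave rectification
        coeff = attack if level > env else release
        env += coeff * (level - env)
        out.append(env)
    return out


def vibration_strength(envelope_value, max_force=1.0):
    """Scale an envelope value to a vibration amplitude, clamped to the limit."""
    return max(0.0, min(1.0, envelope_value)) * max_force
```

Each envelope value would then set the amplitude of a vibration sent to the haptic device, so loud passages feel rough under the hand and silence feels smooth.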

There is still much work to be done. Ideally, the controller should not need a significant amount of CPU power; otherwise it will interfere with the multimedia application. However, for the haptic device to be effective, it must provide instantaneous feedback to the user as well as instantaneous control signals. Balancing these two needs is an important and difficult design issue. I hope to have a functional prototype completed within the next couple of months that provides a fully featured yet simple control interface.


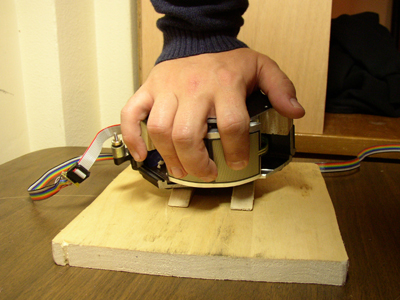

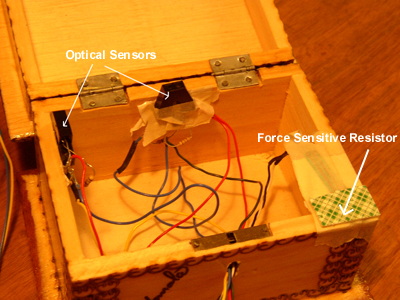

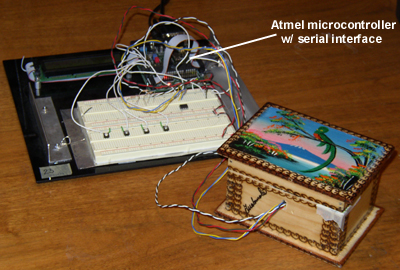

"The Box", a simple exercise in Music Controller Design - The Box was built as an exercise in electronic music controller design. It was an attempt to create a musical controller with expressive control abilities from leftover objects found around the house and workshop. The device itself is quite simple. As the name suggests, it is built out of a wooden box with a hinged lid. Mounted inside are two optical sensors and a force-sensitive resistor (FSR). The optical sensors provide a control signal corresponding to the lid position. The FSR is mounted on the lip of the box, sandwiched between the lid and the body, and provides a signal when pressure is applied to the closed lid (e.g. by tapping on the box). Four buttons on the circuit board (which can be seen in the photo below) can be used for instrument control or for changing the box's control mode. The device uses an Atmel microcontroller for A/D conversion and data transmission. Data is sent to a Linux computer via a serial cable, where Pure Data (PD) was used to create an electronic instrument.

This device was used to explore the issues involved in mapping control signals in a musical context. Control information can be used to manipulate music either directly or indirectly. One control signal can control a single parameter (pitch, modulation, timbre, etc.), or it can be mapped to control many parameters simultaneously. For instance, an intuitive direct mapping would be to control volume with the lid position (lid open equals full volume, lid closed equals no sound). An example of indirect mapping might be choosing a random pattern of notes whenever the lid position changes. The controller still affects the music, but the control value has no clear correspondence to the audio output.

Through the buttons on the board, the mappings of the box can be changed dynamically during a composition or performance, as set up in the software. This makes the box a modal controller. Modality introduces a new set of problems to consider. In a modal device, which modes are intuitive (is it more intuitive to have sound stop when the lid is open or closed)? How does the user switch between different modes of operation (automatically or manually)? What feedback is provided to differentiate the current mode from all others (how can you tell which mode the controller is in)?
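The difference between direct and indirect mapping, and the way a button turns the box into a modal controller, can be sketched in a few lines of Python. The real instrument was a PD patch, so everything here is hypothetical: the class names, the note scale, the movement threshold, and the normalization of lid position to 0.0 (closed) through 1.0 (open).

```python
import random


def direct_volume(lid_position):
    """Direct mapping: lid fully open (1.0) is full volume, closed (0.0) is silent."""
    return max(0.0, min(1.0, lid_position))


class IndirectNotePicker:
    """Indirect mapping: a lid movement triggers a random pattern of notes."""

    def __init__(self, scale=(60, 62, 64, 67, 69), threshold=0.05, seed=None):
        self._rng = random.Random(seed)
        self._scale = scale          # MIDI note numbers (assumed pentatonic set)
        self._threshold = threshold  # smallest movement that counts as a change
        self._last = None

    def update(self, lid_position, length=4):
        """Return a new pattern if the lid moved enough, otherwise None."""
        if self._last is not None and abs(lid_position - self._last) < self._threshold:
            return None
        self._last = lid_position
        return [self._rng.choice(self._scale) for _ in range(length)]


class ModalBox:
    """A button press cycles through the mappings; the lid input is shared."""

    MODES = ("volume", "pattern")

    def __init__(self, seed=None):
        self.mode_index = 0
        self._picker = IndirectNotePicker(seed=seed)

    @property
    def mode(self):
        return self.MODES[self.mode_index]

    def press_mode_button(self):
        self.mode_index = (self.mode_index + 1) % len(self.MODES)

    def handle_lid(self, lid_position):
        """Interpret the same lid reading according to the current mode."""
        if self.mode == "volume":
            return ("volume", direct_volume(lid_position))
        return ("pattern", self._picker.update(lid_position))
```

The same lid reading produces a predictable volume in one mode and an unpredictable note pattern in the other. Nothing on the box itself shows which mode is active, which is exactly the feedback problem raised above.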

The box was used to create a simple composition for performance in which the control mode changed automatically as the piece progressed. Initially, the lid position controlled the timbre of the instrument. Once the lid had been closed for the first time, the lid position switched to controlling the modulation (vibrato) of the sound. Tapping on the lid also produced a melodic sequence of notes. This was a useful exercise for considering the design issues involved in this type of device before pursuing something more complicated.
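The automatic mode change in this piece amounts to a very small state machine. Here is a hedged Python sketch of that logic. The original lived in a PD patch, so the class name, thresholds, and melody are invented for illustration, and I assume here that taps were recognized throughout the piece.

```python
class CompositionModes:
    """Lid controls timbre until it is first closed, then controls vibrato."""

    def __init__(self, melody=(60, 64, 67, 72), closed_threshold=0.02,
                 tap_threshold=0.5):
        self.mode = "timbre"
        self._melody = melody                      # hypothetical note sequence
        self._closed_threshold = closed_threshold  # lid position treated as closed
        self._tap_threshold = tap_threshold        # FSR pressure treated as a tap
        self._step = 0

    def on_lid(self, position):
        """Handle a lid reading (0.0 closed .. 1.0 open).

        Returns (parameter_name, value). The switch to vibrato is one-way:
        reopening the lid does not bring the timbre mode back.
        """
        if self.mode == "timbre" and position <= self._closed_threshold:
            self.mode = "vibrato"
        return (self.mode, position)

    def on_tap(self, pressure):
        """Return the next melody note for a firm tap, or None for a light touch."""
        if pressure < self._tap_threshold:
            return None
        note = self._melody[self._step % len(self._melody)]
        self._step += 1
        return note
```

Because the transition is triggered by a natural gesture (closing the lid) rather than a button, the performer never has to think about switching modes, but also cannot undo the switch.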
