The Sound of Innovation

Stanford and the Computer Music Revolution

Andrew J. Nelson

The MIT Press

Cambridge, Massachusetts

London, England

©2015 Andrew J. Nelson

This work is licensed to the public under a

Creative Commons Attribution-NonCommercial-NoDerivatives 4.0 license

(international):

http://creativecommons.org/licenses/by-nc-nd/4.0/

All rights reserved except as licensed

pursuant to the Creative Commons license identified above. Any reproduction or

other use not licensed as above, by any electronic or mechanical means

(including but not limited to photocopying, public distribution, online

display, and digital information storage and retrieval) requires permission in

writing from the publisher.

MIT Press books in print format may be

purchased at special quantity discounts for business or sales promotional use.

For information, please email special_sales@mitpress.mit.edu.

This book was set in Stone by the MIT Press.

The print edition of this book was printed and bound in the United States of

America.

Library of Congress Cataloging-in-Publication

Data.

Nelson, Andrew J.

The sound of innovation:

Stanford and the computer music revolution / Andrew J. Nelson.

pages cm.—(Inside technology

series)

Includes bibliographical references and index.

ISBN 978-0-262-02876-9 (hardcover : alk.

paper)

1. Stanford University. Center for Computer

Research in Music and Acoustics. 2. Computer music—History and criticism.

3. Music—Computer programs—History. 4. Music and

technology—History. I. Title.

ML33.S73C464 2015

786.7'60979473—dc23

2014031506

10 9 8 7 6 5 4 3 2 1

Contents

Preface to the Electronic Edition

5 Duet for Stanford and Yamaha

6 From Exposition to Development

7 Plucking the Golden Gate Bridge

8 Recapitulation and Variations

9 Coda

Appendix: Interviews Conducted by Author

Index

In 1986, at the age of eleven, I

started a newspaper route. My motivation was singular: To save the roughly

$2,000 required to purchase a Yamaha DX7 synthesizer—the most intriguing

and beautiful musical instrument I had ever encountered. After several months

of progress, my parents lent me the rest of the money needed for the purchase,

on the condition that I pay it back—with interest. I did.

Seven years later, I arrived at Stanford

University as a freshman. One of my first stops was the Center for Computer

Research in Music and Acoustics (CCRMA), where I encountered a dizzying array

of advanced sound technologies. I immediately began coursework for a major in

Music, Science and Technology. Though I didn't know it at the time, CCRMA had

developed many of the innovations underlying the computer music revolution,

including the technology at the heart of the DX7. In fact, a decades-long relationship

with Yamaha was essential to the center's existence.

In light of this history, it might be

accurate to say that the origins of this book stretch back nearly thirty years.

More recently, however, the immediate impetus for this book lies in a statement

that Woody Powell made in a 2001 Stanford PhD seminar, during my first year in

the Management Science and Engineering (MS&E) doctoral program: "Few people

recognize that one of Stanford's most lucrative technology licenses stems from

the music department." I recognized immediately that he was referencing the

CCRMA–Yamaha relationship. With Woody's guidance, I produced a term paper

on this history. The term paper became my doctoral qualifying paper, which in

turn became my first peer-reviewed journal article. In many ways, this book is

the product of Woody's continued guidance and encouragement over many years.

I'm indebted to him.

In the fourteen years that I've been

researching various facets of CCRMA, countless other individuals also have

enabled and encouraged my work. The members of my dissertation

committee—Steve Barley, Woody Powell, Kathy Eisenhardt, and Mark

Granovetter—encouraged me to appreciate and explore the complexities of

the relationships between the "technical" and the "social." The Powell "lab

group"—including Jeannette Colyvas, James Evans, Stine Grodal, Jason

Owen-Smith, Kelley Packalen, Kaisa Snellman, and Kjersten Bunker

Whittington—offered a simultaneously challenging and supportive

environment in which to try out many of this book's core themes.

This book never would have materialized

without the support of a wide range of other groups. First and foremost, CCRMA

students, staff, faculty, and alumni have been exceedingly generous in sharing their

time, insights, and even personal collections of historical documents. I'm

indebted to each of the interviewees listed in the appendix—and

especially to John Chowning, Chris Chafe, and Julius Smith, each of whom spent

days guiding me through the intricacies of CCRMA's history and read drafts of

the manuscript. (John also taught a sound synthesis course that I took at CCRMA

in 1994, and Chris served as my undergraduate advisor at Stanford.) A large

number of other CCRMA participants also contributed to this book through

informal conversations and extended email exchanges, including Marina Bosi, Al

Cohen, Les Earnest, John Granzow, Hiro Kato, David Kerr, Don Knuth, Sasha

Leitman, Chryssie Nanou, Nick Porcaro, Jean-Claude Risset, Loren Rush, Craig

Sapp, Gary Scavone, Tricia Schroeter, Carr Wilkerson, Linnea Williams, Patte

Wood, Bill Verplank, and Nette Worthy. Patte Wood, in particular, deserves

tremendous thanks for her foresight in saving several boxes of documents from

her many years as CCRMA's administrator and for depositing these historical

treasures with the Stanford University Special Collections and University

Archives. A reality of any book is that it cannot capture and reflect each

perspective in the richness that it deserves; undoubtedly, each of these

participants would tell CCRMA's story differently, highlighting other aspects

of the center and interpreting the same events in different ways. In fact, as I

argue throughout this text, there is great power in things like books,

histories, computers, and compositions precisely because they afford such

multiple interpretations. I hope, therefore, that my own interpretations will

be received with openness.

At the Stanford Archives, Maggie Kimball,

Polly Armstrong, Jerry McBride, and Paul Mustain offered frequent assistance.

At Stanford's Office of Technology Licensing, Kathy Ku was gracious in sharing

documents and data. At Stanford's Office of Development, Julia Hartung and

Belinda Kuo helped me understand both the composition of CCRMA alumni and the

role of individual and corporate giving. Arthur Patterson of Stanford's News

Service, John Strawn, and Patte Wood worked to identify key photographs. At the

National Association of Music Merchants, Dan Del Fiorentino and Tony Arambarri

enabled access to recorded interviews and other historical materials. Finally,

at the Paris-based IRCAM, Hugues Vinet was a gracious host as I toured

facilities and spoke with participants in an effort to understand how CCRMA

compared to another leading computer music center.

Conducting this research over great distances

and many years required substantial financial resources. I am particularly

grateful to the Kauffman Foundation, which provided generous financial support

and early encouragement through the award of a Kauffman Junior Faculty

Fellowship.

At MIT Press, editor Margy Avery and series

editors Wiebe Bijker, W. Bernard Carlson, and Trevor Pinch shared my vision in

this project and encouraged its further development into the present version.

Paul Leonardi, Jonathan Sterne, and Steve Kahl each read the full manuscript and

provided valuable guidance in this process.

Closer to home, my University of Oregon

colleagues supported a curiosity-driven research environment, while Jon Bellona

and Stephan Nance offered valuable research assistance.

And closest to home, Ann, thank you for your

ceaseless encouragement to write this book, despite the many evenings,

weekends, and "vacations" sacrificed. To Elizabeth, who was born in the midst

of this project, thank you for introducing me to the wonders of encountering

new sounds and new music for the very first time. And to Mom and

Dad—thanks for the loan and the encouragement. I'll bet you never thought

my fascination with the DX7 would lead to this.

Preface to the Electronic Edition

It has long been my vision to release an electronic version of this book, for two reasons. First, it's rather odd to write about new music-making technologies in the definitively silent format of a paper book. Thus, this electronic edition is linked to several sound examples throughout the text, enabling the reader to better appreciate the music at the center of the Center.

Second, histories such as this book are fundamentally interpretations—exercises in ordering and structuring stories and data. By their very nature, interpretations vary across people and time, morphing in response to individual experiences, social trends, emergent information and new lenses. This observation was behind my initial motivation to release all of the source documents that inform my account—or at least all for which I have permission and that do not violate confidentiality or other concerns—on the website that accompanies this text: http://www.thesoundofinnovation.com. This open availability enables others to construct their own narratives and to bring their own interpretations to the "fossil record" contained in these source documents.

In an electronic format, the links between my own interpretation and these records can be made tighter. Thus, whereas a paper format simply lists sources, the electronic version permits direct links to source documents. When reference is made to an email to Steve Jobs, for example, the reader can click on the link to immediately open a PDF of the original email. By extension, she can then decide what she thinks the email means, conveys, or implies.

How to Read the Electronic Edition

Practically speaking, these linkages demanded some design decisions for this electronic edition, so that the flow of text would not be interrupted with constant notes and linkages. What I've done, therefore, is to indicate external links in brackets. For example, "According to the March 1976 report [link], Yamaha's goals..." indicates that clicking on the word "link" will bring up a PDF of the original 1976 report. To return to the text of the book, simply hit the "back" button on your browser. Where the link is an audio or video clip, it will open in a new window. (Thus, to return to the text of the book, simply close the audio/video window.)

In some cases, the footnotes also contain substantive elaborations on the text. In fact, some of the best anecdotes are hidden in such footnotes. In these cases, I enclosed the footnote number in brackets (e.g., "[42]") and included the footnote text as a hover; hover your mouse over the footnote number, and the text will pop up without changing the position on the page. Conversely, where footnotes contain primarily bibliographic references, the footnotes are indicated without brackets (e.g., "42"). In these cases, clicking on the footnote will jump to the appropriate bibliography entry at the end of the book. (Simply hitting the "back" button on your browser will take you back to your previous place in the text.)

Additions/Corrections

It was tempting to rewrite portions of this text in response to my own changing interpretations and to reviews and other reactions. First, the history that I recount ends just as another important shift was underway at CCRMA: the emergence, or rather reemergence, of cognitive research and work oriented around the brain, as evident in the work of Prof. Takako Fujioka, Prof. Jonathan Berger, and others. Second, although I spoke with a wide array of CCRMA-lites in conducting the research for this book, I missed the opportunity to interview some key players, including longtime CCRMA administrator Patte Wood and co-founder Loren Rush. Perhaps I'll address these points in a future edition, but for now I've opted to preserve the original text for purposes of continuity and comparability between the paper and electronic editions.

Finally, as I acknowledge in the original Introduction, I give far too little attention to the compositions generated at CCRMA. They deserve a book of their own, but I am not the one to write it. My hope is that the current text can serve as inspiration for this task and a foil against which the technological and organizational aspects of CCRMA might be used to inform the musical ones.

Eugene, OR

May 2016

1

Eight musicians filed into the

chamber music hall, dressed in all black and wearing focused expressions.

Silently, they fanned into a semicircle across the front of the room, just feet

away from the closest audience members. The sound of creaking chairs

accompanied fumbling efforts by the last few attendees to turn off their mobile

phones. What happened next, however, resulted from the musicians' failure to

turn off their own phones. It was not

an accident.

The musicians' focus turned to their iPhones,

each held snugly in one hand. Small, amplified speakers hung off each of their

wrists, held in place by fingerless gloves. As the musicians waved their

arms—slowly, deliberately—an otherworldly sound filled the air. The

sound—like the drone of a wet finger rubbing the rim of a glass

bowl—grew louder. It grew denser. It grew higher in pitch. And the

audience grew mesmerized.

As the piece continued, the phone-produced

sounds slowly morphed. New textures developed and swirled about one another,

mimicking the musicians' own movements. A warbled hum imitated one musician's

agitated wrists. A whistling melody rose and fell as another musician's right

arm reached for the ceiling and dropped toward the floor. A sustained metallic

buzz emerged almost unnoticed, but grew thicker, more harmonic, and more

insistent until it nearly overtook the rest of the collage. With the final

crescendo, the musicians—eyes locked on the ensemble's

director—dropped their arms in unison. The audience burst into vociferous

applause.

The Stanford Mobile Phone Orchestra [video], or

MoPhO, is a special ensemble: A musical group that raises questions as to

whether a phone – or any object, for that matter – is a musical

instrument; a musical group that leverages cutting-edge audio technologies,

available via open source yet also commercialized through a Silicon Valley

startup, Smule; and a musical group whose "instruments" have been provided by

the corporate technology partners of its academic home, Stanford's Center for

Computer Research in Music and Acoustics, or CCRMA (pronounced "karma") [webpage].[1]

Ge Wang is the thirty-something music

professor who directs the MoPhO. Though each CCRMA student, faculty, and staff

member comes to the center via a different route, Wang's background is

representative: He is trained as both a computer scientist and a musician; he

works at the intersection of technology and music; he holds a tenure-track

academic appointment in Stanford's music department while simultaneously

serving, for a period, as cofounder and Chief Technology Officer at Smule; and

he blurs the lines between science, art, engineering, and commerce, passing

almost seamlessly between these different worlds.

Wang's academic home on the Stanford campus,

CCRMA, possesses these same attributes. In fact, CCRMA emerged and thrives at

such diverse intersections. In turn, it has played a vital role not only in

developing the new discipline of computer music, but also in ensuring that

digital audio enjoys a nearly ubiquitous presence in the world today. As

someone plays music on a computer, plunks keys on a keyboard, or streams songs

over the Internet, chances are good that a CCRMA alum or partner is involved in

some way.

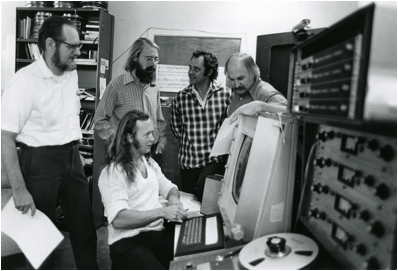

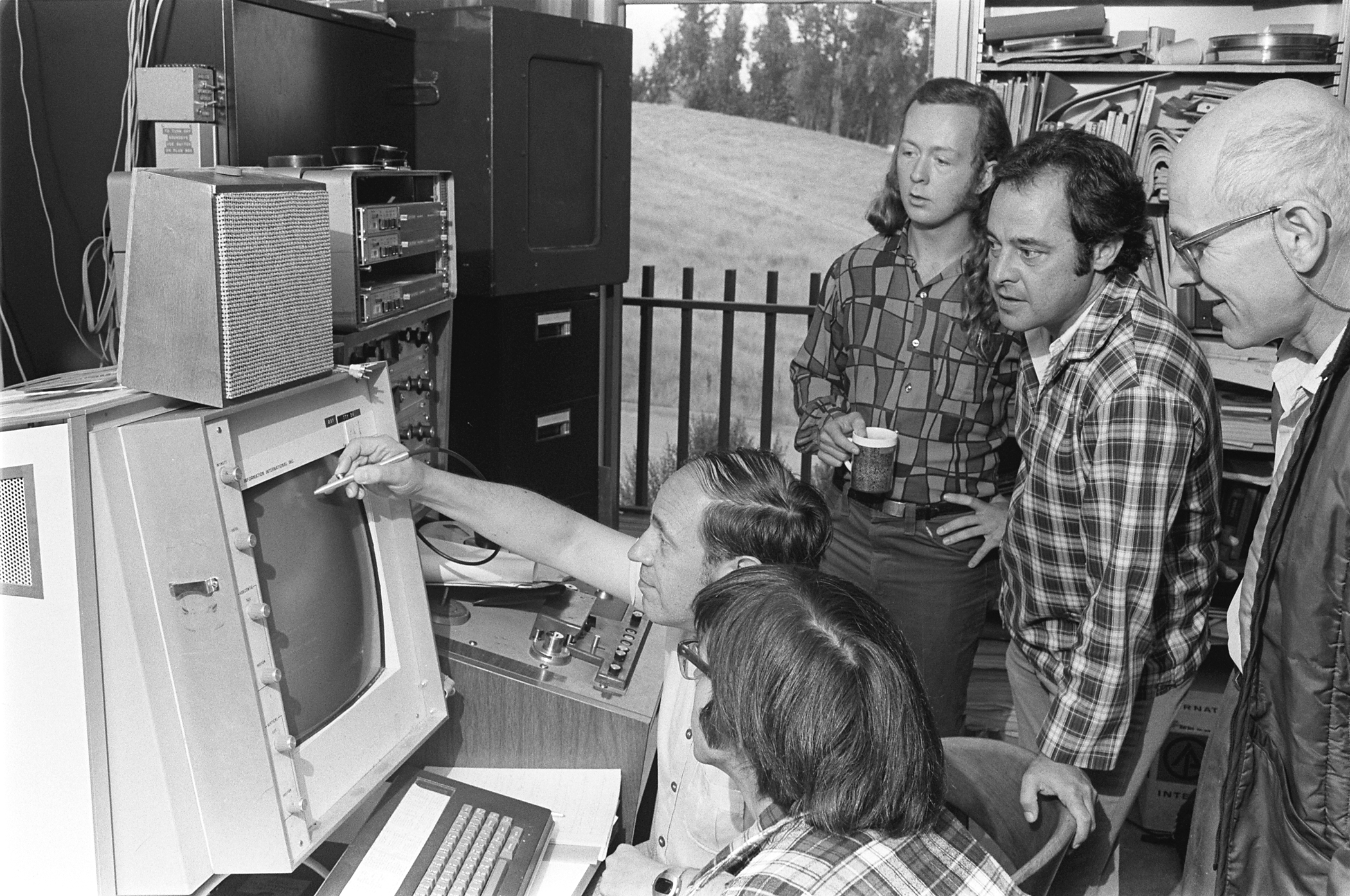

CCRMA originated in the 1960s, when composer

John Chowning and other pioneers latched on to both the equipment and the

people at Stanford's budding Artificial Intelligence Laboratory. There, working

mostly at night and on weekends ("so as not to abuse our hosts," as Chowning

would explain), the team of musicians, engineers, psychologists, and computer

scientists labored to apply the computer in an entirely novel way: to produce

and manipulate sound and, more importantly to them, the sonic basis of new

musical compositions. In the process, they helped to develop a new academic

field, to invent the technologies that would underlie this field, and to

transpose these inventions into broad commercial application, reaching

consumers in every corner of the planet.

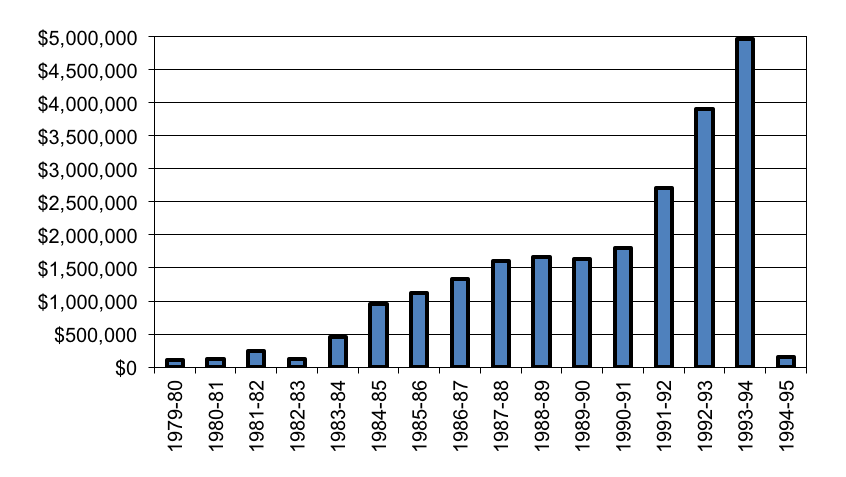

One of CCRMA's first inventions was

Chowning's frequency modulation (FM) synthesis technique, which helped to usher

in the era of digital music. In 1975, Yamaha Corporation of Japan licensed FM

and used it to power one of the best-selling musical instruments in

history—their DX7 synthesizer—along with countless computer

soundcards for multimedia PCs and semiconductor chips that enable mobile phone

ring tones. The FM license, in fact, remains one of Stanford's most

profitable technology licenses—an impressive achievement in a university

that produced Google, DSL, and recombinant DNA, among other high-profile

inventions. CCRMA, in turn, would plow the financial proceeds into an endowment

fund that continues to sustain the center.

In the 1990s, CCRMA and Yamaha would attempt

to repeat the feat, working to develop a novel type of "physical modeling"

synthesis that promised to nearly eliminate computer memory requirements for

sound generation. Today, CCRMA serves as a hub of free and open-source music

and audio software, and CCRMA researchers apply these tools in settings ranging

from the sonic exploration of archaeological ruins in Peru to the development

of smartphone applications that turn mobile phones into virtual pianos and

rap-music beatboxes. The center is widely recognized as a world leader in

computer music and digital audio research.

Underpinning this technological history is a

musical one. Indeed, CCRMA's technological contributions must be understood,

first and foremost, as facilitators of compositional aims. From its inception,

the center attracted some of avant-garde classical, jazz, and rock music's

biggest names, including Pierre Boulez, György Ligeti, Stan Getz, and Phil

Lesh. The center's own students and faculty have composed hundreds of works,

featured on stages around the world and garnering countless awards. Closer to

home, as Stanford prepared in 2012 to open a new $112-million concert hall that

would host Yo-Yo Ma and the San Francisco Symphony in its inaugural season, the

first group to "perform" in the under-construction hall was CCRMA's "Laptop

Orchestra"—a chamber ensemble in which all of the instruments are laptop

computers.

The everyday practices at CCRMA are a lauded,

albeit still unusual, combination: an energized interdisciplinarity that

stimulates creativity and contributions at the intersections of fields; a

fierce commitment to open sharing and to "users"—primarily, musicians and

composers—that defines both priorities and vision; and deep commercial

engagement that has resulted in numerous widely used products and in far-flung

relationships with diverse organizations.

CCRMA is instructive, however, not only as an

example of these activities, but also as a collective at their forefront. Thus,

members of the center embraced interdisciplinarity not when boards of trustees

and government agencies said that such mixing was "good," but rather when

administrators and funders alike questioned whether it was appropriate; CCRMA

focused relentlessly on users and on the broad diffusion of technology not only

in an era of Silicon Valley marketing, but also in a period of Cold War

self-sufficiency that celebrated walled-off engineers and "upstream creators"

over populist tinkerers; and CCRMA developed intellectual property and engaged

with industry not after 1980 US legislation encouraged such moves and new

"technology transfer offices" mushroomed to support it, but rather when

university patents were unusual and efforts to commercialize university

research were sparse and peripheral. CCRMA, therefore, serves as both an

exception and an example: an early outlier that became a model—indeed, an

archetype—for later organizations.

This book focuses on two intertwined

questions: First, why and how did CCRMA emerge, not only crafting success as an

organization but also seeding an entirely new field, computer music, that today

permeates academia, industry, and everyday life? As the account in the chapters

that follow makes clear, this success was neither easy nor preordained.

Second, beyond CCRMA's early success, how has

the center continued to engage in these diverse and creative activities nearly

fifty years later? As countless treatises on organizational renewal and

corporate entrepreneurship highlight, the continued regeneration of innovation

and of an innovative culture is both precious and unusual.2

My analysis of CCRMA draws upon and develops

three broad themes: (1) interdisciplinarity, including the rise of

interdisciplinary programs and the challenges and opportunities associated with

them; (2) open innovation, including user innovation, free and open source

software, and technology standards; and (3) university technology transfer and

research commercialization. In turn, I argue that the center's emergence,

sustenance, and renewal stem from the ability of CCRMA participants to

intertwine and mutually leverage these activities in unique and powerful ways.

Recent years have witnessed a surge in

interest by university administrators, funders, and researchers themselves

around interdisciplinarity—a

concept whose definition varies from author to author and from setting to

setting, but which typically conjures images of "unity and synthesis" among

different fields, perspectives, and methods.[3] In turn, scholars have investigated how interdisciplinary work offers new

opportunities, owing to the insights that emerge across boundaries, but also

presents new challenges, particularly in terms of perceived legitimacy among

existing academic disciplines.4

CCRMA reflects a particular approach to

interdisciplinarity that Cyrus Mody and I label radical interdisciplinarity—a partnership in which seemingly

diverse disciplines come together on equal footing and in which the

participants from these disciplines are forever changed as a result of the

interaction.[5] Thus, CCRMA is not simply an

example of infusing a bit of software engineering into music (or vice versa);

instead, it represents a fundamental transformation of disciplines through the

combinations that it engenders. To employ a cooking analogy, radical

interdisciplinarity is like a purée in which each ingredient is critical and in

which neither chef nor diner can pull apart the constituent ingredients again,

even though they may identify the individual influences.

Of course, such interdisciplinarity can fail,

too. It can be difficult to communicate across disciplinary boundaries; it can

be difficult to establish credibility as an individual researcher when one's

work lies between disciplines; and it can be difficult to attract resources,

which often are tied to particular departments and disciplines.6

CCRMA, too, experienced these challenges. The center's history, therefore,

sheds light on the circumstances under which interdisciplinarity may open up

new possibilities or result in failure.

A second theme that runs through CCRMA's

history is open innovation. Henry

Chesbrough's book, Open Innovation,

describes open innovation as a model by which organizations look beyond their

internal R&D labs and capabilities in order to identify and develop

innovations.7 Thus, "open" refers to the openness of

organizational boundaries and barriers—of both physical and cognitive

sorts.

Although much of the work on open innovation

focuses on partnerships between organizations, end-users themselves often make

important contributions, too. Thus, whereas a traditional innovation model may

posit that users are mere consumers of offerings from firms, in many cases

users act to modify and co-create products and services. For instance, Trevor

Pinch and colleagues have documented numerous cases in which users suggest new

applications and adaptations that were initially unimagined by firms.8

Eric von Hippel and colleagues have bolstered this "user innovation" argument

by emphasizing how users often create entirely new products in order to serve

their own idiosyncratic needs. In turn, firms only later pick up these

products, facilitating their diffusion into a broader market.9

Similarly, CCRMA composers do not merely apply or use existing technologies

from commercial firms. Instead, their own musical and compositional aims

suggest new technologies that firms may later develop and diffuse.

Of course, open innovation is facilitated by

sharing across boundaries, a point emphasized by the phenomenon of open source

software communities.10 In these communities,

participants share the "products" themselves—typically computer code or,

as with Wikipedia and YouTube, other knowledge assets—openly and freely

with one another. In the CCRMA case, for example, a Bell Labs researcher, Max

Mathews, shared with Chowning his program for generating music with a computer.

In turn, CCRMA shared its enhancements to Mathews's program with IRCAM, a

Paris-based computer music center widely viewed—alongside CCRMA—as

one of the best in the world. CCRMA's sharing not only saved IRCAM years of

development time, but also enabled personnel and further software developments

to move easily between the groups.

As this example highlights, technical

standards serve an important role in facilitating open innovation. Indeed,

standards have a number of benefits: By enabling economies of scale and by

encouraging competition on the basis of price, technical standards can drive

down prices and enable broader access. Moreover, standards yield network externalities. These

externalities may be direct, as with email: As more people adopted email, the

value of email increased since each user could reach more people. Or, the

externalities may be indirect. As more users purchase smartphones, for example,

there is greater incentive to produce "apps" and to improve the quality and

availability of service in order to address this increasing user base. Through

these different effects, technical standards thus enable interoperability among

technologies and collaboration among users.11

Standardization, however, also imposes costs.

Standards can restrict customization efforts that are fine-tuned to any

particular user's needs, imposing a tyranny of the majority that is insensitive

to important but idiosyncratic needs among a minority. For example, Jonathan

Sterne describes how the MP3 audio standard addresses the desires of the

majority of listeners by reducing file sizes and, therefore, enabling denser

storage and faster transmission. Yet this same standard exhibits artifacts and

quality limitations that a minority of listeners, such as audiophiles, find

objectionable.12

Moreover, once established, standards can be

difficult to change, even if most users would be better off under such a

change. For example, economist Paul David argues that most users remain "locked

in" to the QWERTY keyboard standard—the particular arrangement of keys on

a typewriter or computer keyboard—even though alternative arrangements

would be more efficient.[13] As a group both dependent

upon standards and instrumental in developing them, CCRMA provides insight into

the emergence and management of these tensions around standards and open

innovation.

The commercialization of university research

is a third major theme running through the CCRMA account. Stanford has given

rise to some of the most prominent firms in today's economy, including Google,

Yahoo, Genentech, and Hewlett-Packard. In turn, policy makers and business

leaders alike have not missed the potential connections between university

research and important products and organizations.14

Concurrently, academic investigations into the commercialization of university

research have mushroomed in recent years.15

This literature has wrestled with a number of outstanding questions, including

faculty involvement and perceptions; the role of university technology-transfer

offices; the role of university and government policies; and the processes and

mechanisms underlying commercialization.16

As David Mowery and others have documented, Stanford

was one of the earliest universities to embrace such commercial engagement. It

is an instructive example, therefore, of how market considerations came to be

intertwined with university research activities.17

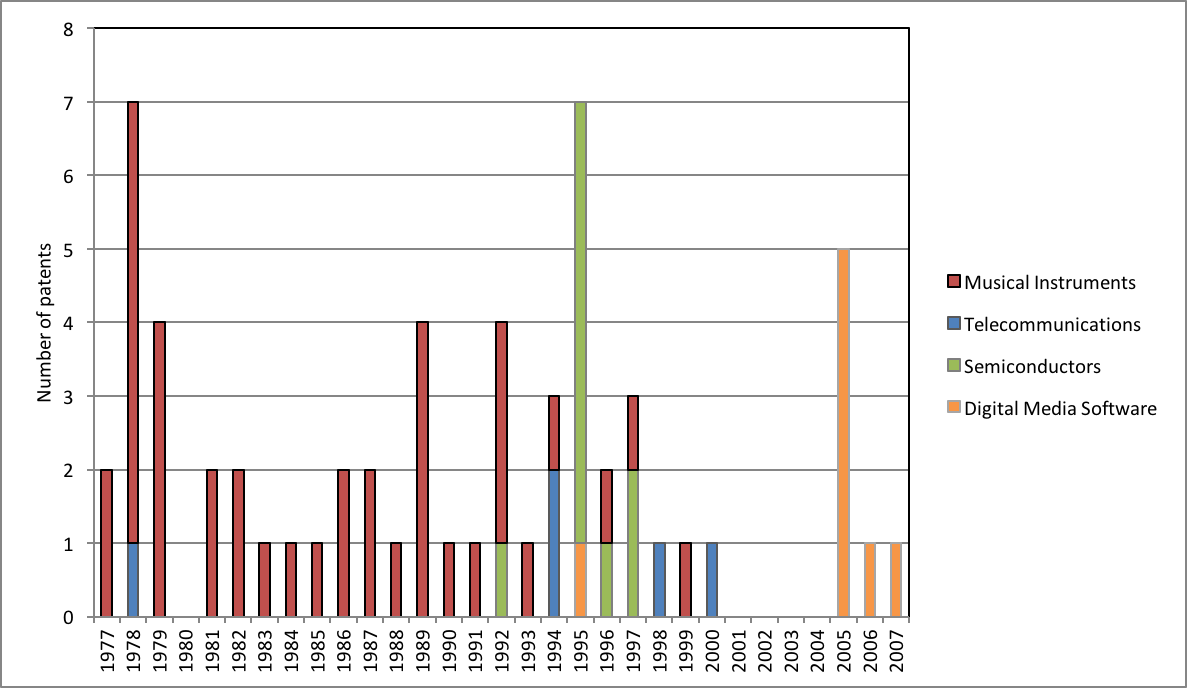

Indeed, CCRMA provided some of the earliest technology disclosures to

Stanford's newly formed Office of Technology Licensing in the 1960s and 1970s,

and the Yamaha FM synthesis license was this office's first big hit. At the

same time, however, much of the commercial engagement at CCRMA transpires

through what might be termed "informal" technology transfer efforts—that

is, activities beyond the formal patenting and licensing of technologies.[18]

Moreover, CCRMA's experience upends the conventional wisdom that firms are

primarily recipients of university technology, instead highlighting cocreation

efforts and instances of firm-to-university technology transfer. Thus,

commercialization at CCRMA is a multifaceted endeavor that both extends and

challenges the existing literature.

These three themes—interdisciplinarity,

open innovation, and commercialization—are threads that wind throughout

the CCRMA account, stitching together diverse people, organizations,

activities, and motivations against the backdrop of a changing and

heterogeneous context. In turn, my central thesis in this book is that they

must be viewed as coevolving—as mutually shaping activities whose

interactions influence one another's trajectories. For example, Chowning's

musically motivated invention of FM enabled Yamaha Corporation to introduce a

low-cost and widely accessible digital music synthesizer. In turn, sales of

this synthesizer provided licensing revenue to support academic activities at

CCRMA, which also enabled a broad group of musician-engineers to generate

further inventions.

As another example, Professor Ge Wang's

desire to test a new music programming language with a broad user base led to

his founding of a startup, Smule. In turn, Smule employs some of the same CCRMA

students who compose music for the Stanford mobile-phone ensemble that Wang

directs. In other words, the ties between diverse people engaged in academic

research, invention, and commercialization run thick; not only are they

difficult to unravel, but doing so would also remove the context in which they

operate. Thus, although there is a substantial literature on each of these

themes independently, as cited throughout the text, my analysis of CCRMA

represents an initial attempt to pull them together into a cohesive account of

how a new academic discipline can emerge at the intersection of new

technologies that provide new capabilities, commercial activities that develop

these technologies and that provide critical resources, and interdisciplinary

engagement that draws together diverse perspectives, communities, and

interests.

To explain how people, resources, activities,

and ideas move across boundaries—and with what effect—I leverage

the concept of multivocality.

Multivocality, in the words of sociologist Woody Powell and his colleagues,

refers to the ability to perform "multiple activities with a variety of

constituents."19 For example, Powell and

colleagues leverage multivocality to describe collaborations in the

biotechnology industry. In their case, universities, dedicated biotechnology

firms, venture capitalists, large pharmaceutical companies, and other

organizations come together through a range of different activities, including

research, marketing, and funding relationships. In fact, Powell and colleagues

argue that it is through these diverse constituencies engaging in multiple

activities that the biotechnology industry emerged and grew; each group found

ways to connect with other groups through a common activity, such that the

pursuit of multiple activities formed a dense network of diverse organizations.20

Multivocality also suggests that these

diverse participants need not interpret the same activity in the same way.

Political scientists John Padgett and Christopher Ansell, for example, use

multivocality to describe how the Medici family of Renaissance-era Florence

maintained power through Cosimo de Medici's "sphinxlike character": Cosimo

arbitrated between and leveraged diverse economic and familial networks by

maintaining ambiguity as to his true desires and intentions. In turn, different

participants interpreted these desires and intentions according to their own

perspectives. Thus, to Padgett and Ansell, multivocality means that "single

actions can be interpreted coherently from multiple perspectives

simultaneously, ... single actions can be moves in many games at once, and ...

public and private motivations cannot be parsed."21

Similarly, my investigation of CCRMA

highlights the ways in which the same activities can be interpreted by

different people and groups in different ways, and it shows that an emergent

center like CCRMA can access resources and legitimacy by leveraging such

multivocality. The CCRMA account moves beyond this point, however, by

underscoring how the success of any given individual or group engaged in this

system may in fact depend upon this

diversity of perspectives, participants, and goals. Moreover, it develops an

essential role for technologies and technological artifacts in facilitating

such multivocality. Ultimately, therefore, the emergence and renewal of CCRMA

shows how interdisciplinarity, open innovation, and commercialization not only

can reinforce one another, but also can form an inseparable web of mutual fate.

My account of CCRMA reflects ten years

of research into the center. My data include formal interviews with thirty-one

people, constituting over a thousand pages of transcripts. (See the appendix.)

I also held informal conversations and extended email exchanges with dozens of

other current and former CCRMA students, faculty, and other affiliates. In

addition, I make use of several interviews conducted by journalists and

historians, stretching back to the center's early history. These various

interviews, conversations, and exchanges helped to establish context, to

capture specific events, and to verify and refine facts and perceptions.

I also draw upon thousands of pages of

archival documents, including personal and business correspondence, minutes

from various meetings, concert programs, grant proposals and reviews,

interdepartmental memos, and technology licensing documentation. (These

documents reside in the Stanford University Special Collections and Archives,

in the Stanford Office of Technology Licensing files, and in the private

collections of several CCRMA affiliates.) I quote liberally from these sources,

and from the interviews, in an attempt to provide the reader with a sense of

the conversations and considerations in historic context and in the words of

those people who made this history. By providing a detailed historic account of

a group at the center of the computer music revolution, my intention is to

infuse a grounded qualitative richness into conversations about

interdisciplinarity, open innovation, and commercialization that sometimes

devolve into comparative statistics and rankings.

For the interested reader, the following

website contains electronic copies of hundreds of archival documents, alongside

other resources, organized by their linkage to specific chapters in the text: http://www.thesoundofinnovation.com.

In many cases, therefore, the reader can trace footnotes to freely accessible

electronic images of the source documents. This website also includes lists of

CCRMA publications and patents, along with lists of concerts and performances,

compositions, recordings, and other data. As noted at various points in the

text, I have leveraged these resources for various journal articles on related

aspects of CCRMA, each of which also is available through the website.

The decade over which I've engaged in this

research has enabled me to craft and recraft my understanding and analysis of

CCRMA. It also has raised my awareness as to the many important contributions

and people that are omitted, very regrettably, from this particular account.

Most notably, my attention to the many compositions associated with CCRMA and

to the gifted composers behind them is limited. They deserve a book of their

own.

The account that I do give is crafted as follows: Chapter 2 describes how broader

institutional environments shape organizational emergence. Thus, it describes

the history of both Stanford University and the Stanford music department, and

it focuses on the tumultuous era of the 1960s and the changes in federal

funding and social priorities that facilitated CCRMA's emergence. Chapter 3

explores the history of computer music, including early activities at

AT&T's Bell Labs and user-driven innovation at Stanford's artificial

intelligence laboratory. Chapter 4 provides a clear sense of the early

uncertainties and tensions surrounding CCRMA and interdisciplinary efforts to

promote computer music, from the "low notes" of faculty dismissals and research

group "divorces," to the "high notes" of faculty reinstatements and the making

of major grants to the center. Chapter 5 focuses on CCRMA's four-decade-long

relationship with Yamaha, exploring how university–industry

collaborations can emerge and evolve to the benefit of both organizations even

as they raise challenges tied to different goals and incentives. Chapter 6

describes creative projects and efforts in the 1980s and 1990s to bolster,

renew, and grow the center amidst shifting funding, technological, and musical

landscapes. Chapter 7 conveys CCRMA's attempts to commercialize another kind of

sound synthesis, which raised new questions about intellectual property, open

sharing, and the relationship between academic and commercial activities.

Chapter 8 focuses on the new millennium, which is marked by a resurgence of

free and open source sharing, and the extension of CCRMA activity into an

ever-wider array of disciplines. Finally, chapter 9 reconsiders the ways in

which academic disciplines, open innovation, and technology commercialization

can coevolve, pointing to broader lessons for creative organizations that

serve, in Chowning's words, as sites of "intellectual ventilation as well as

coordination."22

Setting the Stage

To understand CCRMA's emergence,

it is useful to step back to World War II. For the first years of that

conflict, the Allies relied overwhelmingly on aerial bombardment as the means

to engage the Germans. The casualties associated with this approach were

staggering, with an estimated 2 to 20 percent of planes lost on any given

mission. Germany's electronic air defense system—a network of radars and

antiaircraft guns that enabled them to track, intercept, and destroy Allied

planes—was formidable and lethal.

An intense Allied effort focused, therefore,

on determining details of the German system and on further developing the

Allies' own electronic warfare capabilities. At the center of these efforts was

the Radio Research Laboratory (RRL) at Harvard University. Frederick Terman, a

Stanford professor who would prove important to CCRMA, was the RRL's director.[1]

The RRL was one of several government-funded

large-scale research efforts tied to World War II. Other examples include the

Manhattan Project and the Radiation Laboratory at MIT. These programs aimed to

leverage university (and industry) researchers in order to produce new

technologies that many observers would credit, literally, with winning the war.2

As ticker tape rained down upon parades of

returning US soldiers at the end of World War II, scientists and engineers thus

shared in the glory. Wartime efforts, such as those undertaken at the RRL,

highlighted the critical role of scientific research in addressing "practical"

problems.3 To be sure, US universities

had long engaged in the pursuit of practical problems. The decentralized

control of American universities—as contrasted against the centralization

of many European systems—meant that funding and enrollment of American

universities were dependent upon the interests of the local community. In turn,

these interests tended to be practical in nature.4 The

Morrill Act of 1862 codified this arrangement, explicitly tying public

universities "to the needs of local industries and to the priorities

established by state legislatures."5

Thus, the University of Oklahoma developed expertise in petroleum engineering,

the University of Kentucky worked extensively on tobacco processing, and the

University of Minnesota conducted research on mining, as three examples. The

institutionalization of engineering and applied sciences in American

universities—spurred by the rise of university-trained engineers and

scientists in industry—further reinforced the role of "practical problems"

in university research and teaching.6

In establishing their own university, which

opened in 1891, Leland and Jane Stanford, too, emphasized practical interests.

Their founding document decreed that the university should "qualify students

for personal success and direct usefulness in life."7

Thus, a practical orientation was baked into Stanford's origins, at least to

some extent. What changed with World War II, however, was the orientation of

these efforts, from regional to national, and the level of government support

(which increased dramatically). Indeed, World War II dramatically boosted the

prestige of American science among the public and politicians alike, leading to

significant funding increases.8

The nexus of research activity in World War

II, however, remained on the East Coast—especially around Harvard and

MIT. Stanford, by comparison, played a minor role. This observation was not

lost on Terman, the Stanford professor and director of the RRL. Terman returned

to Stanford and became the dean of the School of Engineering in 1946.9

As dean of the School of Engineering, and as

provost starting in 1955, Terman saw a "wonderful opportunity" in the Cold War

expansion of federal research funding.10

Indeed, the 1950 establishment of the National Science Foundation—which

was proposed by Terman's mentor, Vannevar Bush—provided a

vehicle through which the federal government could support both basic and

applied research at universities. When the Soviets launched Sputnik in 1957,

the widely shared interpretation was that the United States was falling behind

in science and engineering. In turn, federal support for university research

again surged.[11] Building on the growth in

federal funding, Terman oriented Stanford faculty hiring and university budgets

around government grants and contracts.

At the same time, Terman encouraged strong

ties between the university and industry. As early as the 1930s, for example,

he encouraged two of his students, William Hewlett and David Packard, in their

development of a new line of audio oscillators. These audio devices would

become the first product for the Hewlett-Packard Company, and an early model

still sits in a glass display case at Stanford's electrical engineering

building. (Incidentally, multiple CCRMA participants hold joint appointments

with the electrical engineering department.) Through the 1950s and 1960s,

Terman amplified his efforts at industry engagement. He invited local companies

onto Stanford land, in what would become the Stanford Industrial Park; he

encouraged technology development collaborations between Stanford faculty and

company-based researchers; he invited company-based researchers to teach

Stanford courses; and he established the Honors Cooperative Program (or Honors

Co-Op), whereby full-time workers at local companies could take Stanford

courses and earn a Stanford degree. Terman thus established a legacy of

industry engagement.12

Of course, Stanford focused not just on

commercializing established university departments. In an effort to bridge

basic and applied research, the university also established a number of

nondepartmental research centers. The first such center was the Microwave

Laboratory, established in 1944 as the on-campus arm of a local company, Varian

Associates.13 Next came the Applied

Electronics Laboratory, the Systems Techniques Laboratory, the Solid State

Electronics Laboratory, and the Center for Materials Research. Although these

centers performed basic research, they also emphasized short-term

defense-related applications, they drew money from defense-related sources, and

they maintained ties with firms in the military-industrial complex.14

A key feature of these centers, Terman

reasoned, was interdisciplinarity. As Terman observed, "The training of

engineers [up to World War II] was inadequate [and] they didn't measure up to

the needs of the war. ... Most of the major advances in electronics were made by

physicists ... rather than by engineers."15

Terman thus revamped undergraduate education to emphasize fundamental math and physics,

and he encouraged interdisciplinary approaches by which physics, chemistry, and

math could contribute to engineering advances.16

This approach was particularly evident in the new centers.

In short, then, Terman demonstrated a model

by which external funding supported a blend of basic and applied research

through a newfound interdisciplinary emphasis. Terman's approach appeared to

meet with great success: fueled, in part, by dramatic increases in federal

research funding, Stanford rose in prominence from a well-respected but

regionally oriented university to a top-ranked international institution.

In the late 1960s, however, this dramatic

rise in federal funding appeared to stagnate: government support declined and

then remained relatively flat until the late 1970s. Moreover, university ties

to the military, in particular, carried new implications as the conflict in

Vietnam escalated. Across the Stanford campus, and the nation, reformers

questioned the relationships between the military and university research. At

the same time, they encouraged the redirection of "applications" away from

military goals and toward social ones—a goal with which many faculty

agreed.[17] For example, Robert Huggins,

director of the Center for Materials Research (funded by the military's

Advanced Research Projects Agency), spoke of his

desire to make use of the already strong base in materials science to assist progress in some of the civilian technologies that have lain comparatively dormant in recent years, when primary attention was heavily concentrated upon those oriented primarily toward defense- and space-related matters.18

Holt Ashley, a professor of Aeronautics and Astronautics, wrote in an editorial:

Throughout the School [of Engineering] and

especially in a few departments, a conscious move in the direction of more applied subjects is needed. No doubt

there exist topics of fundamental research which are more relevant to urgent

social needs, in the U.S. and the world, than the current favorities [sic] in Stanford Engineering.19

These faculty and others thus encouraged a shift in focus from military applications to social needs.20

Campus protesters also reacted against the

hierarchical and limited interdisciplinarity that seemed to accompany Terman's

vision. Instead, they proposed a radical

interdisciplinarity, as Cyrus Mody and I have labeled it, that required

equal partnerships among the natural sciences, engineering, social science, and

humanities.21 Stephen Kline, who founded

Stanford's Values, Technology and Society program, captured the perspective

well:

The kinds of questions that do and should

concern the students are: Do you build the SST [supersonic transport], and what

is being done about smog? Questions of this sort cannot be seen clearly through

the viewpoint of any single discipline ... [and instead require] various

combinations of scientists, engineers, philosophers, historians,

anthropologists, psychologists, psychiatrists, sociologists, ethicists, and

theologians—all working very closely together.22

Whereas Terman brought together science and

engineering disciplines, the "radical" perspective encouraged incorporation of

humanities, social sciences, and other fields, too. As the CCRMA case would

demonstrate, such mixing could not only orient diverse fields toward a common

problem, like the SST or smog, but also reshape these disciplines themselves.

Through the 1960s, therefore, activists,

administrators, policy makers, and faculty alike came to adopt a broader vision

of interdisciplinarity and to equate this vision with applied research.[23]

At the same time, the federal funding picture changed to support this view. As

Stanford's President Lyman remarked in 1971, "If we succeed, as I trust we

shall, in increasing the amount of multi-disciplinary, problem-oriented research

that we do, this will happen in part because money is beginning to become

available for such work from the Congress and from federal agencies."24

Indeed, federal agencies placed increased emphasis on interdisciplinary and

applied research, while simultaneously expressing increased skepticism about the

returns on basic research.[25]

One important outcome of these shifts was

recognition of radical interdisciplinary work as a solution to campus unrest

and a means to appeal to a wider range of funders. Accordingly, Stanford

welcomed a dramatic increase in interdisciplinary centers beginning in the late 1960s;

their number grew from four in 1968 to ten in 1969 to fifteen in 1975, the year of

CCRMA's formal establishment.26

Amid the turmoil, Stanford's music department

seemed to play a small role. Music itself was not new to Stanford. In reporting

the history of Stanford's first twenty-five years, Stanford's first registrar,

Orrin Elliott, writes:

Music perhaps came first [among

extracurricular activities at the university] and was best exemplified before

the public by the Encina Glee Club ... Band and Orchestra were also early

organizations and have contributed much to the serious study of music in the

University. There was a Roble Glee Club the first year ... the Schubert Club,

organized later, represented the more serious efforts of the women students. In

1896 the student body promoted and managed successfully a Paderewski concert in

San Jose, and again in 1908 one in the Assembly Hall.27

Similarly, in their history of Stanford, Margo Davis and Roxanne Nilan describe the role of music in the university's early years:

Music and drama loomed large in Stanford

community life even though there were no academic departments teaching the

subjects, no theater or auditorium, no one in the student body or faculty with

formal ... training. Just as Stanford's first varsity football players quickly

learned a game that only half of them had ever played, so did the actors and

actresses, musicians, directors, and stage managers learn the crafts of dramatics

and musical performance in action.28

All of that is to say that music was an important and visible extracurricular activity. It lacked, however, an obvious academic purpose and integration into a course of study at Stanford.

In fact, Stanford did not offer its first

formal music course until the late 1920s—more than thirty years after the

university's founding—and Stanford did not establish a music department

until 1947, one year after Terman assumed his position as dean of the School of

Engineering. We have limited historical evidence on these first years of the

music department. Department members were undoubtedly influenced, however, by

other examples of humanities departments that were not oriented toward

externally funded research, which was Terman's clear priority. As provost,

Terman stripped the Department of Classics, for example, of faculty lines,

shrank its graduate program, and directed the remaining faculty to teach large,

lower-level undergraduate courses.29 It

could not have helped that the relatively new music department had yet to make

a national impression: Stanford remained unranked in a 1957 survey conducted by

the American Council on Education on "quality of graduate faculty in music."30

When the central figure in CCRMA's emergence,

John Chowning, arrived in 1962 as a Stanford graduate student, he thus faced a

unique environment: a non-top-ranked department situated within a university

that was profoundly shaped by external funding and an orientation toward

Silicon Valley industry, and which was beginning to ferment a new radical

interdisciplinarity. The institutional environment, as it happens, was ripe for

what Chowning later accomplished.

The First Movement

In 1934, as the United States was

deep in the Great Depression, the rural town of Salem, New Jersey, welcomed its

newest resident into the world: John Chowning. Chowning held an early interest

in music, playing violin from the age of seven and percussion instruments from

the age of twelve. His talent as a percussionist, in fact, would take him

around the world—literally: after high school, he served a three-year

tour as a musician in the Navy.[1]

Back in the United States, Chowning attended

Wittenberg University in Ohio under the GI Bill, graduating with a Bachelor of

Music in 1959. He then moved to Paris to study with Nadia Boulanger—the

French composer, conductor, and teacher who counted Aaron Copland among her

pupils.2

Post–World War II Paris was the

epicenter for the musique concrète

movement. Pierre Schaeffer, an electronic engineer, played a key role in the

movement. In the 1940s, he employed rudimentary recording equipment, disc

cutters, to isolate and capture naturally produced sound events.[3]

(Schaeffer used the term concrete to

refer to sounds of nature or the "real world.") Schaeffer then experimented

with how to manipulate and isolate portions of these sounds, removing, for

example, the "attack" or initial onset of a sound and playing recordings

backward. Schaeffer's Etude de bruits

(1948), a series of five compositions, is representative of the style: the

various etudes employ modified locomotive sounds, whistling toy tops, spinning

saucepan lids, boats, and other sounds.4

Public concerts of such pieces met with mixed reactions, as some critics

embraced the new style and others dismissed it as valueless noise.5

Musique concrète compositions and

concerts also presented new technical challenges. Sound engineer Jacques

Poullin, for example, grew particularly interested in the problems of sound

distribution in an auditorium. Taking advantage of new tape recorders that

could manage five independent tracks of sound, he developed a system that

consisted of two loudspeakers in the front of a space, on each side of the

stage; one speaker hanging over the center of the space; and a fourth speaker

on the rear wall. Poullin thus added a spatial dimension to musique concrète (and he offered an

early demonstration of what today's home theater enthusiasts refer to as

"surround sound").6

Meanwhile, the city of Cologne, Germany

served as the epicenter of elektronische

Musik, which arose around the same time as musique concrète. Elektronische

Musik emphasized entirely synthetic means of producing sounds, drawing upon

noise generators, filters, and other devices to provide the raw sounds and to

manipulate these sounds. Thus, whereas composers in the musique concrète tradition used electronic devices to capture

"real" sounds, composers in the elektronische

Musik tradition used such devices to create the sounds themselves. As a

result, elektronische Musik

especially engaged engineers and technologists alongside composers. Author

Peter Manning reports, for example, that a 1951 talk by Werner Meyer-Eppler, an

early and influential player in the movement, reached an audience "of nearly a

thousand technologists."7 Meyer-Eppler himself was a

scientist—director of the Department of Phonetics at Bonn

University—whose interest in the field had been sparked by the

demonstration of a vocoder machine (a speech analyzer and synthesizer) by a

visitor from Bell Labs in New Jersey. To further the development of elektronische Musik, Meyer-Eppler

partnered with Robert Beyer, another scientist, and Herbert Eimert, a composer.8

Elektronische Musik thus sprang from

collaboration between scientists and composers, a feature that would prove

essential for the Stanford computer music project, too.

Despite their different roots, these two

movements—musique concrète and elektronische Musik—rubbed up

against one another, and they increasingly moved away from their dogmatism in

the late 1950s and 1960s. Thus, as Chowning arrived in Paris in 1959, the

electronic music scene was one in which composers actively sought new direction

and new inspiration for musical composition, drawing upon emerging electronic

tools to further their musical visions.9

Indeed, it was in Paris that Chowning discovered electronic music, later citing

Luciano Berio, Herbert Eimert, Henri Pousseur, and Karlheinz Stockhausen as

influences.10

Chowning's time in Paris solidified his

interest in pursuing a DMA in music composition. He decided to apply to schools

on the West Coast of the United States. As Chowning recalled in a 1983

interview, "I had grown up in the East and gone to school in the Midwest, ...

[so] Elisabeth [his then-wife] and I decided we'd go to California. So there

was [the University of California at] Berkeley and Stanford."11

Both schools offered Chowning a scholarship (as did Michigan). A friend

familiar with the music program at UC Berkeley had encouraged Chowning to

attend there rather than Stanford; UC Berkeley (or "Cal") had more going on in

the area of new music. Yet as Chowning recalled, "There was a little more money

in the Stanford grant than Cal's. So, we came here [Stanford]."12

At Stanford, Chowning immediately became

involved in the Society for the Performance of Contemporary Music—a small

group of students who put on concerts of new music. In describing the

composition environment at Stanford at the time, Chowning recalled:

There was a lot of interest in—in

post–World War II, post-Webern music. [Anton Webern was an Austrian

composer and a student of Arnold Schoenberg.] And we did, you know, maybe the

first performance here of [Karlheinz Stockhausen's] Kontakte. And we did [Stockhausen's] Refrain, and—[Luciano] Berio's pieces, chamber music always,

or solo pieces, and—I don't know, lots—[Henri] Pousseur.

In other words, the leading serialist and postserialist classical composers strongly influenced Stanford composers. Continuing, however, Chowning notes:

But there was a—oh, these curiosities,

too, like we had this composer, Peter Ford, who was an—really a

philosopher as much as a composer, and—with a heavy interest in French

existentialism. And—well, he was far out. He wrote pieces like double

fugue for solo contrabassoon that [music professor] Leland [Smith] had played,

and wrote an opera called Buddha and

subtitled Dry Dung, and wanted it

done in the church. The department wouldn't allow it. It was—oh, there

were some fun times.13

As Chowning describes it, therefore, the music department continued to embrace a wide range of musical styles, not only performing "traditional" classical music but also experimenting with a variety of new music.

Chowning's active role in new music extended

beyond Stanford, too. By virtue of his jazz percussion skills, which allowed

him to play complicated styles that "straight-trained symphonic percussionists

can't handle very well," Chowning had significant contact with other players in

the San Francisco Bay Area new music scene.14 In

particular, he got to know musicians and performers involved with the San

Francisco Tape Music Center and Mills College.15

Founded in 1962 by composers Morton Subotnick

and Ramon Sender, the San Francisco Tape Music Center (SFTMC) served as a forum

to present concerts and to learn from and collaborate with others active in

tape music—similar to what Chowning had encountered in Paris. The SFTMC

traced its origins to a group of composers who had assembled an improvised

electronic music studio in the attic of the San Francisco Conservatory of

Music. Subotnick, who was teaching at Mills College at the time, joined this

group and together they formed the SFTMC. The group was highly successful in

the 1960s and undertook regional and national tours that offered broad exposure

for the tape music medium and the associated composers. They also influenced

technological developments: one project involved a collaboration between

Subotnick, Sender, and engineer Donald Buchla, who wanted to create an

electronic instrument that would meet the demands of composers. The result was

the first Buchla analog synthesizer. In 1966, the SFTMC moved to Mills College,

where it became the Mills Tape Music Center (later renamed the Center for

Contemporary Music, or CCM).[16]

At Stanford, however, Chowning was growing discouraged.

The university had neither facilities for electronic music nor an interest in

creating them.17 Indeed, as far as Chowning

could tell, Columbia was the only US university with a studio, and electronic

music certainly was not a priority in Stanford's fledgling department. As

Chowning recalled in a 1987 article in Keyboard

magazine, "An electronic music studio would have been very expensive so there

was no possibility of getting the administration to support that."18

With his composition interests increasingly oriented toward electronic music,

Chowning considered stopping his studies at Stanford: "You know, get the

Master's and split," as he put it in a 1983 interview.19

Bell Labs and the Computer

Chowning's fellow members of the

Stanford Symphony Orchestra were well aware of his growing interest in

electronic music. In January 1964, one of these members, Joan Mansour, passed

him a copy of an article from Science

that described how a computer could be used as a musical instrument.20

(Mansour was a biologist and her husband, Tag, was on the faculty at Stanford

Medical School.)

The author of the Science article, Max Mathews, based his publication on years of

work that he had conducted at Bell Telephone Laboratories in Murray Hill, New

Jersey, a New York City suburb at the opposite end of the state from Chowning's

hometown of Salem. There, in 1957, Mathews created some of the first

computer-generated sounds.[21] As Mathews recalled in a 2005

interview, "The computer was on Madison Avenue in New York City. There were

only two of them in the world at that time, one in Poughkeepsie in their

research lab, and this commercial one there."22

Mathews, who worked for Bell's Acoustic Research Department, explained the

company's motivation: Bell wanted to compress speech so that they could get

more voices over the same transatlantic cable. Mathews worked with converters

and software to research "encoding" or compressing, which—it so

happened—could also be applied to music.23

(Indeed, this same research led to the development of the MP3 standard by

Karlheinz Brandenburg at the Fraunhofer Institute and Jim Johnston at Bell Labs

in the late 1980s. Brandenburg and Johnston's algorithm would facilitate the

transmission and storage of digital music on an unprecedented scale, underlying

services like Napster that challenged the entire record industry.[24])

Mathews, in fact, described music as one of

his "great interests." In the same 2005 interview, he quipped, "I still play

the violin, and enjoy it very much, although I'm not a good violin player.

Probably if I were a better player, I wouldn't have bothered with computer

music."25 At Bell, Mathews worked for

John Pierce, a well-known engineer who, among other accomplishments, showed

that satellites could be used for communication. Pierce also shared Mathews's

interest in music. As Mathews recalled:

We were at a concert together, and we liked

some of it and didn't like other parts of it. In the intermission, we turned to

each other and said, "The computer could do better than this." And so he said

to me, "Maxwell, I know you're supposed to be working on telephones, but take a

little time on the side and write a computer program—you've already made

the equipment to get computer numbers converted to sound—let's see what you

can get out of it in the way of music."26

Mathews's article in Science begins with an explanation of the process by which numbers,

which are the language of the computer, can be converted to sound, which is the

basis of music. Fundamentally, computer music uses the numbers as "samples" of

a sound pressure wave, with each sample corresponding to the state of the wave

at a particular instant. The generation of sound signals, however, requires a

very high sampling rate, corresponding to a large number of samples for a given

period of time. The work that Mathews described in Science, for

example, used sampling rates of 10,000 and 30,000 numbers per second, such that

ten seconds of sound required specifying 100,000 to 300,000 individual numbers.[27]

In turn, a "digital-to-analog" converter connected to the computer converts the

sequence of numbers into a sequence of electric pulses with amplitudes that are

proportional to the numbers. A filter then "smoothes" these electric pulses and

a loudspeaker plays the resultant tone.
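The arithmetic behind these figures can be illustrated with a minimal sketch. It is not drawn from Mathews's programs: the function names, the use of a sine wave, and the 440 Hz test frequency are assumptions chosen only to show how a sampling rate translates into a count of numbers and how each number represents the wave at one instant.

```python
import math

def sample_count(rate_hz: int, seconds: float) -> int:
    """Number of samples needed to describe `seconds` of sound at `rate_hz`."""
    return round(rate_hz * seconds)

def sample_wave(freq_hz: float, rate_hz: int, seconds: float) -> list[float]:
    """Sample a sine-shaped pressure wave (an assumed test signal).

    Sample i holds the state of the wave at the instant i / rate_hz seconds.
    """
    n = sample_count(rate_hz, seconds)
    return [math.sin(2 * math.pi * freq_hz * i / rate_hz) for i in range(n)]

# Ten seconds of sound at the two sampling rates cited in the text.
low = sample_count(10_000, 10)
high = sample_count(30_000, 10)

# One hundredth of a second of a 440 Hz tone at 10,000 samples per second.
tone = sample_wave(440.0, 10_000, 0.01)
```

In a real system, a list such as `tone` would be handed to the digital-to-analog converter, which is the hardware step that the sketch leaves out.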

Of course, as Mathews notes in his article,

"To specify individually 10,000 to 30,000 numbers for each second of music is

inconceivable."28 Thus, Mathews wrote a series

of computer programs that enabled the computation of samples from a simple set

of parameters, such as start time, duration, loudness, and frequency (pitch).

Mathews wrote his first program, Music I, in assembly code—a low-level

programming language—for an IBM 704 mainframe computer. It had a single

digital oscillator, a wave generator that produced a triangle wave (a

particular kind of sound wave). The following year, in 1958, Mathews wrote

Music II, which had four triangle oscillators. Two years later, Mathews

completed Music III, designed for the transistor-based IBM 7094 mainframe

computer, a more advanced machine than the IBM 704. Music IV followed in 1962,

the version that most directly informed Mathews's article in Science.29
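The idea of computing samples from a handful of note parameters can be sketched as follows. This is an illustrative reconstruction in modern Python, not Mathews's assembly code: the function names, the default sampling rate, and the particular triangle-wave formula are all assumptions.

```python
def triangle(phase: float) -> float:
    """Triangle wave in [-1, 1] for a phase in [0, 1) (assumed wave shape)."""
    return 4.0 * abs(phase - 0.5) - 1.0

def render_note(start_s: float, duration_s: float, loudness: float,
                freq_hz: float, rate_hz: int = 10_000):
    """Turn four note parameters into samples.

    Returns the index of the note's first sample and the list of samples,
    so a composer specifies four numbers instead of thousands.
    """
    first = round(start_s * rate_hz)
    count = round(duration_s * rate_hz)
    samples = []
    for i in range(count):
        # Fraction of the current cycle completed at sample i.
        phase = (freq_hz * i / rate_hz) % 1.0
        samples.append(loudness * triangle(phase))
    return first, samples

# A half-second note starting one second in, at loudness 0.8 and 440 Hz.
first, samples = render_note(1.0, 0.5, 0.8, 440.0)
```

Here four parameters stand in for 5,000 individually specified numbers, which is the economy that Mathews's programs were designed to provide.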

Pierce and Mathews were interested in

involving musicians in their work, and in 1961 Pierce hired the American composer

James Tenney to work at Bell Labs.[30] In the time Tenney spent at Bell, from 1961 to 1964, he completed several

compositions that used the computer both as a compositional tool and to make

sounds directly.31

Chowning did not read Mathews's article right

away. Instead, as he recalled in a 2008 interview:

I stuck it in my pocket [after Joan Mansour

passed it to him]. Then maybe three or four weeks later I was sending my jacket

to the dry cleaner and I pulled it out and read it. And this is what I saw: [reading

from the article] "There are no theoretical limitations ... The range of computer

music is limited principally by the cost ... These limits are rapidly receding."

So that was what caught my attention.32

Chowning reasoned that Mathews's insights provided a way to pursue electronic music in spite of the resource constraints he faced at Stanford. As he recalled in 1987, "Computers are general-purpose devices, and the idea of using them for music was very attractive because the computers were already there."33 An electronic music studio required a great deal of single-purpose equipment, which Stanford would not support; a computer, however, was already in place and justified on the basis of nonmusical applications. In many ways, in fact, the roots of the Stanford computer music program would grow from repurposing nonmusical entities (equipment, programs, people, and funding agencies) in the service of musical aims. Such repurposing lies at the heart of multivocality because it takes advantage of the multiple interpretations of an activity or tool in order to facilitate novel activity and acquire resources and support for that activity.

As a first step, Chowning reasoned that he

needed to learn to program. As he recalled in a 2005 interview:

I looked in the course catalog, and there was

a course for non-engineers in Algol [a computer language developed in the

1950s]. So I took the course. It was amazingly easy for me, because they taught

this course not around programming engineering problems, which was the way most

every programming course was taught in those days, but rather they looked for

ways to engage this population of people—it was a small group of

us—in terms of solving problems that we posed.

In other words, the course adopted the practical-problem orientation that emerged at Stanford more generally during this time period. Chowning, not surprisingly, identified a musical problem:

I thought, "I'll generate a whole bunch of

12-tone rows." [Twelve-tone music uses all twelve notes of the chromatic scale

equally, thus avoiding an association with any particular key.] Although I

wasn't so much interested in that kind of music, it was a tractable problem. So

I solved the problem, and in doing so I learned how to program, at least the

basics of programming. Then when I really wanted to do something, the following

summer, I was prepared.34
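The source does not describe how Chowning's Algol program worked. Purely as an illustration of why the problem was tractable, generating twelve-tone rows can be sketched in a few lines of modern Python. The function name, the numbering of pitch classes from 0 to 11, and the use of a seeded random shuffle are all assumptions.

```python
import random

def tone_row(rng: random.Random) -> list[int]:
    """Return one twelve-tone row: each pitch class 0-11 exactly once."""
    row = list(range(12))  # 0 = C, 1 = C-sharp, ..., 11 = B (assumed numbering)
    rng.shuffle(row)       # reorder in place without repeating any note
    return row

# "A whole bunch" of rows, one per seed so the output is reproducible.
rows = [tone_row(random.Random(seed)) for seed in range(5)]
```

Each row is a permutation of the chromatic scale, so no pitch is repeated or omitted.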

Chowning followed his spring course with a visit to Mathews in the summer of 1964. Given his own interest in music, Mathews, of course, was happy to receive another composer. Mathews provided Chowning not only with crucial direction, but also with the Music IV program on a set of punch cards.

In a 2008 interview, Mathews adopted the more

recent language of "open source" to characterize his sharing:

They [Mathews's Music programs] were all open

source. That's right. We released the source code and there were no

restrictions. We didn't even have a nice contract like open source programs

have that says, "If you do something, you've got to give it out."35

In fact, Mathews later coauthored a book, The Technology of Computer Music (1969), intended as an instruction manual to facilitate diffusion of the subsequent Music V program.36 As he shared in a 2008 interview:

I felt that one of the important problems in

computer music was how to train musicians to use this new medium and

instrument. ... [visits were] a relatively slow way of getting the media into as

broad a usage as we thought was appropriate. So one of the things that I did

was to write a book.37

Mathews's primary interest lay in encouraging

the emergence of computer music and in the broad diffusion of resources that

would facilitate this emergence. Thus, he engaged in free and open source

sharing that enabled interested individuals—engineers and musicians, from

universities and firms—to build on his own work.

SAIL and the Origins of CCRMA

The next step for Chowning was to

implement this program at Stanford. In the early 1960s, computers were not

common on the Stanford campus. Chowning's search for a machine quickly led him

to the facilities of the Stanford Artificial Intelligence Project (Lab) or

SAIL. SAIL itself was new on campus, established in 1963. Thus, SAIL appeared

alongside many of the other centers from Terman's era as provost, as Terman

leveraged the center model to encourage research that blended "basic" and

"applied" characteristics. John McCarthy, the lab's founder, had arrived at

Stanford in 1962 and immediately initiated an artificial intelligence project

that built on his work at MIT in the 1950s. With support from the US military's

Advanced Research Projects Agency (ARPA), SAIL rapidly grew from six people in

1963, to fifteen people in 1965, to over a hundred people in 1968.[38]

The SAIL computer system, as Chowning

recalled in his characteristic dry humor, "comprised an IBM 7090 that had an

enormous memory of 36k 36-bit words and a hard disk whose capacity was well

over 500k words and about the size of a large refrigerator. The hard disk was

shared by a DEC PDP-1 computer."[39] Chowning's

graduate school advisor, Leland Smith, recalled an equipment failure a few

years later at SAIL, which underscored the very early state of computer

technology at the time:

The first disk drive for these IBM things,

they were like wash[ing] machines. This big. [Gestures with outstretched arms around

the room.] We had this thing called the Librascope. It was a giant disk. It was

in a thing not quite as big as this room, but almost. [Laughter.] ...

The whole system, of course, was very flaky

because of all the crazy things we were doing with the PDP-10 there at the AI

lab. [The DEC PDP-10 was a successor to the IBM 7090.] ... [Once], there was a

power failure. ... This disk, which was running twenty-four hours a day, had a

system where if it shut down, it was supposed to open some valves to shoot in

nitrogen gas to keep the heads away from the surface of the disk so they

wouldn't scratch it. ...

[When the power failure happened] you could

hear this disk going [Smith makes a "whoo" descending tone]. You know, slowing

down [another "whoo" descending tone]. And it would go [another "whoo"

descending tone]. And then we heard [Smith makes a scratching sound].

[Laughter.] ... And we were like, "My God, what's happening." [Laughter.] It