
CS229 Machine Learning Project Proposal

The goal is to implement and use hidden Markov models (HMMs) to understand, transcribe, and model the musical parameters of an acoustic drum set.

The project will survey current HMM techniques used in automatic speech recognition and apply similar methods to model each sub-instrument of the drum set: bass drum, floor tom, snare, mid-toms, hi-hat, crash cymbal, and ride cymbal. The instrument models will be trained on time and frequency parameters of pre-recorded isolated drum sounds, which are readily available at negligible cost from the drum-sample libraries used in music production. The first-stage models should accurately and independently identify each sub-instrument.
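As a rough illustration of the first stage, the sketch below scores a short sequence of quantized feature frames against one HMM per sub-instrument and picks the model with the highest likelihood, using the forward algorithm in log space. Everything specific here is an assumption made for illustration: the three-symbol feature alphabet (low, mid, high frequency energy), the two-state attack/decay topology, the hand-picked probabilities, and the names `MODELS`, `log_likelihood`, and `classify`. In the actual project the parameters would be learned from the drum-sample recordings rather than written by hand.

```python
import math

NEG_INF = float("-inf")

def safe_log(p):
    """Log of a probability, mapping zero to -inf instead of raising."""
    return math.log(p) if p > 0 else NEG_INF

def log_sum_exp(values):
    """Numerically stable log(sum(exp(v))) for a list of log-values."""
    m = max(values)
    if m == NEG_INF:
        return NEG_INF
    return m + math.log(sum(math.exp(v - m) for v in values))

def log_likelihood(obs, start, trans, emit):
    """Forward algorithm: log P(obs | model) for a discrete-emission HMM."""
    n = len(start)
    alpha = [safe_log(start[s]) + safe_log(emit[s][obs[0]]) for s in range(n)]
    for o in obs[1:]:
        alpha = [
            log_sum_exp([alpha[p] + safe_log(trans[p][s]) for p in range(n)])
            + safe_log(emit[s][o])
            for s in range(n)
        ]
    return log_sum_exp(alpha)

# Hypothetical models: (start, transition, emission) per sub-instrument.
# States: 0 = attack, 1 = decay. Symbols: 0 = low, 1 = mid, 2 = high energy.
MODELS = {
    "bass drum": (
        [1.0, 0.0],
        [[0.6, 0.4], [0.0, 1.0]],
        [[0.80, 0.15, 0.05], [0.70, 0.25, 0.05]],
    ),
    "hi-hat": (
        [1.0, 0.0],
        [[0.5, 0.5], [0.0, 1.0]],
        [[0.05, 0.15, 0.80], [0.05, 0.25, 0.70]],
    ),
}

def classify(obs):
    """Return the sub-instrument whose HMM assigns obs the highest likelihood."""
    return max(MODELS, key=lambda name: log_likelihood(obs, *MODELS[name]))
```

Calling `classify([0, 0, 0, 1])` selects the bass drum model, since that sequence is dominated by low-frequency symbols. A real system would likely replace the discrete symbols with continuous features and Gaussian-mixture emissions, as is common in speech recognition.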

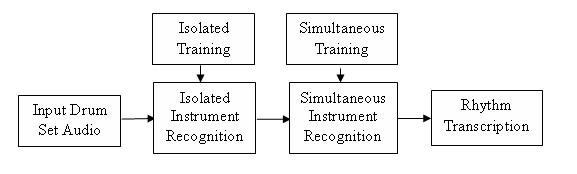

Once successful models of each sub-instrument are obtained, a higher-level set of HMMs can be used to model a rhythmic pattern, where a single HMM state represents a single musical beat or duration of time. The output of the first level can help train the second, and eventually vice versa. Multiple levels of HMMs can break down the larger problem of instrument/rhythm transcription, much as in speech recognition, where one level models the phonemes produced by the vocal tract and the next models individual words of a given language. A single musical beat can be represented as a linear combination of the sub-instruments and trained on entire measures of pre-recorded material rather than on single-instrument samples. Because rhythmic patterns are almost unlimited in their variability, training should first focus on a single genre of pattern as a proof of concept and then move toward a more general set of pattern models. The second-stage models should simultaneously identify combinations of the drum sub-instruments over time. See figure below.
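The second stage can be sketched in the same spirit. In the toy model below, each hidden state is a beat position in a four-step rock pattern, and each observation is the combination of sub-instruments that the first stage reports for that step. Viterbi decoding then recovers the most likely sequence of beat positions, even when the first stage misses a hit. The pattern, the symbols `"K"`, `"H"`, and `"S"`, the probabilities, and the function name `decode_beats` are all assumptions chosen for illustration, not part of the proposed design.

```python
import math

NEG_INF = float("-inf")

def lg(p):
    """Log of a probability, mapping zero to -inf."""
    return math.log(p) if p > 0 else NEG_INF

# Observation symbols (hypothetical first-stage outputs):
# "K" = kick + hi-hat, "H" = hi-hat only, "S" = snare + hi-hat.
# States 0..3 are beat positions in the assumed pattern K, H, S, H.
EXPECTED = ["K", "H", "S", "H"]
STATES = [0, 1, 2, 3]
START = [0.25, 0.25, 0.25, 0.25]

def trans(p, s):
    """Advance to the next position with prob. 0.9, stay with prob. 0.1."""
    if s == (p + 1) % 4:
        return 0.9
    return 0.1 if s == p else 0.0

def emit(s, o):
    """Expected combination with prob. 0.8, each other symbol with 0.1."""
    return 0.8 if o == EXPECTED[s] else 0.1

def decode_beats(obs):
    """Viterbi decoding: most likely beat-position sequence for obs."""
    score = [lg(START[s]) + lg(emit(s, obs[0])) for s in STATES]
    back = []
    for o in obs[1:]:
        prev, score, pointers = score, [], []
        for s in STATES:
            best = max(STATES, key=lambda p: prev[p] + lg(trans(p, s)))
            score.append(prev[best] + lg(trans(best, s)) + lg(emit(s, o)))
            pointers.append(best)
        back.append(pointers)
    # Trace back from the best final state.
    path = [max(STATES, key=lambda s: score[s])]
    for pointers in reversed(back):
        path.append(pointers[path[-1]])
    return path[::-1]
```

For a clean input such as `["K", "H", "S", "H", "K"]`, the decoder follows the pattern through positions 0, 1, 2, 3, 0. If a snare hit is missed, so that the first stage reports `"H"` where `"S"` was expected, the transition structure still favors staying in step with the bar, which is the kind of mutual support between the two levels that the paragraph above describes.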

The motivation for understanding and transcribing acoustic drum set sounds and patterns spans music information retrieval, music education, music production, and live human-machine music performance. In music information retrieval, this work could eventually contribute to a model of an entire pop song, genre classification, and more. In music education, students could play alongside a software program that uses the model and receive feedback on their performance. In music production, large databases of audio samples and recordings could be sorted more easily and identified automatically from their rhythmic patterns. In live human-machine music interaction, the rhythm model could suggest an accompanying drum pattern for a given piece of work, help compose new rhythmic variations automatically, or improvise alongside a human performer.

People

Students: Nicholas J. Bryan

Advisors

Professors: Ge Wang, Andrew Ng

Due Dates

Milestone: 12:00pm Friday, 11/16

Poster Presentation: Morning of Wednesday, 12/12

Final Writeup: 12:00am Friday, 12/14

Papers to Read/Concepts to Understand

HMMs and how they are used for speech recognition

How the knowledge of HMMs/speech recognition can be applied to rhythm understanding

A Tutorial on Hidden Markov Models and Selected Applications in Speech Recognition

Hidden Markov Models for Speech Recognition (OGI School of Science & Engineering course website)

Dan Ellis's Speech and Audio Processing and Recognition class

ISMIR Papers of Relevance

Music Retrieval By Rhythmic Similarity Applied on Greek and African Traditional Music

Pattern Discovery Techniques for Music Audio

Understanding Search Performance in Query-By-Humming Systems

Causal Tempo Tracking of Audio

Eigenrhythms: Drum Pattern Basis Sets For Classification and Generation

Audio Retrieval by Rhythmic Similarity

Beat and Meter Extraction Using Gaussified Onsets

Drum Track Transcription of Polyphonic Music Using Noise Subspace Projection

Supervised and Unsupervised Sequence Modeling for Drum Transcription

ISMIR 2006 Tutorial: Computational Rhythm Description

Extraction of Drum Patterns and Their Description within the MPEG-7 High-Level Framework

Continuous HMM and Its Enhancement for Singing/Humming Query Retrieval

Rhythm-Based Segmentation of Popular Chinese Music

Indexing Hidden Markov Models for Music Retrieval

New Music Interfaces for Rhythm-Based Retrieval

MIR in Matlab (II): A Toolbox for Musical Feature Extraction from Audio

Pattern Matching in Polyphonic Music as a Weighted Geometric Translation Problem

The Dangers of Parsimony in Query-by-Humming Applications

Rhythmic Similarity through Elaboration

Drum Transcription in Polyphonic Music Using Non-Negative Matrix Factorisation

A Drum Pattern Retrieval Method By Voice Percussion

From Rhythm Patterns to Perceived Tempo

Joint Beat & Tatum Tracking From Music Signals

Percussion Classification in Polyphonic Audio Recordings Using Localized Sound Models

Rhythm and Tempo Recognition of Music Performance From a Probabilistic Approach

A Comparison of Rhythmic Similarity Measures

Query-by-Beat-Boxing: Music Retrieval for the DJ

Applying Rhythmic Similarity Based on Inner Metric Analysis to Folksong Research