MakerFaire

Introduction

The Center for Computer Research in Music and Acoustics (CCRMA -- pronounced "karma") is an interdisciplinary center at Stanford University dedicated to artistic and technical innovation at the intersection of music and technology. We are a place where musicians, engineers, computer scientists, designers, and researchers in HCI and psychology get together to develop technologies and make art. In recent years, the question of how we interact physically with electronic music technologies has fostered a growing new area of research that we call Physical Interaction Design for Music. We emphasize practice-based research, using DIY physical prototyping with low-cost and open source tools to develop new ways of making and interacting with sound. At the Maker Faire, we will demonstrate the low-cost hardware prototyping kits and the customized open source Linux software distribution that we use to develop new sonic interactions, as well as some exciting projects that have been developed using these tools. Below you will find photos and descriptions of the projects and tools we will demonstrate.

Maker Faire website: [1]

Touchboard

The Touchboard is a hand-made music controller fashioned out of a solid block of Padauk. It was motivated by an interest in the interaction with physical objects and the types of gestures these objects invite. In my experience, objects that possess external beauty generate a presence unto themselves, and thus influence the resulting musical interactions. In addition to providing fifteen touch-sensitive sensors, the Touchboard's objective is to inspire the creation of customized and satisfying software performance systems.

The Touchboard is based on sensors that detect the position and pressure applied by the performer's fingertips. The controller also sports eight LED lights and an encoder. Using an Arduino microcontroller board, sensor data is transmitted between the Touchboard and a computer via USB.

OOSCC

The ooscc is an open source/hardware controller that communicates over Ethernet via the Open Sound Control (OSC) protocol. It is meant to be an open platform for computer music control.

ooscc:

- stands for open open sound control controller.
- is an open source/hardware controller that communicates via the Open Sound Control protocol.
- is intended as a replacement for common MIDI controllers.
- is meant to help DIYers transition from MIDI to better methods of communication.
- communicates via Ethernet (via Wi-Fi is a future goal).
- can be powered by USB or a 5V DC adapter. Battery power is also a possibility.
- is open-ended, and will eventually allow interfacing with both analog and digital inputs and outputs.
- is currently very much in beta stages.

http://www.experimentalistsanonymous.com/ooscc/
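To give a feel for what travels over the wire, here is a minimal sketch of how a single Open Sound Control message is laid out and sent. It follows the OSC 1.0 encoding (NUL-terminated strings padded to four bytes, big-endian 32-bit floats) and assumes UDP as the transport, which is common for OSC but is not confirmed for the ooscc. The address `/ooscc/knob/1`, the host, and the port used below are hypothetical and chosen only for illustration.

```python
import socket
import struct


def _osc_string(text: str) -> bytes:
    """Encode an OSC string: ASCII, NUL-terminated, padded to a multiple of 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    padding = (-len(data)) % 4
    return data + b"\x00" * padding


def osc_message(address: str, *values: float) -> bytes:
    """Build an OSC 1.0 message whose arguments are all 32-bit floats."""
    type_tags = "," + "f" * len(values)
    payload = b"".join(struct.pack(">f", v) for v in values)
    return _osc_string(address) + _osc_string(type_tags) + payload


def send_osc(packet: bytes, host: str, port: int) -> None:
    """Send one OSC packet as a single UDP datagram (UDP is an assumption here)."""
    with socket.socket(socket.AF_INET, socket.SOCK_DGRAM) as sock:
        sock.sendto(packet, (host, port))
```

A receiver listening on a hypothetical port 9000 could then be driven with `send_osc(osc_message("/ooscc/knob/1", 0.5), "127.0.0.1", 9000)`. Unlike a MIDI controller message, the address is a readable path and the value is a full-resolution float rather than a 7-bit integer.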

Lattice Harp

The Lattice Harp is a two-dimensional stringed instrument which simultaneously functions as a musical instrument and controller.

https://ccrma.stanford.edu/~craffel/hardware/latticeharp/

ReactPad

The ReactPad is a music app for the iPhone based on the ReacTable. By adding pieces to the ReactPad, you can create different types of sounds and explore different forms of music synthesis. Each piece is assigned a different function, which you can modify by rotating it. The ReactPad can also use your voice as an input, save and load patches over the Internet, and even use the accelerometer to move the pieces around the pad! The ReactPad makes use of the accelerometer, audio, OpenGL, STK, and the Mopho API.

https://ccrma.stanford.edu/~urinieto/256b/ReactPad/

Growl Hero

Growl Hero is a beta game that detects your growls and screams while you sing a metal song. It is like Singstar or Rockband, but it detects screams. It is a free, open-source app for Mac (Linux version coming soon) developed by Uri Nieto as the final project for the course Music-256a at CCRMA, Stanford University.

https://ccrma.stanford.edu/~urinieto/256/GrowlHero/

Edgar Robothands

In 1963, Max Mathews wrote that any perceivable sound could be produced using digital sound synthesis. However, any perceivable sound can also be produced using mechanical sound synthesis: states of the digital sound synthesis algorithm can be implemented mechanically. In other words, some of the digital bits used by the algorithm can be represented by mechanical vibrating objects rather than purely electronic bits. Today, implementing these mechanical connections is expensive, so in practice we are limited to only a few points of mechanical/haptic interconnection.

Edgar Robothands explores mechanical sound synthesis by employing robots to play physical percussion instruments. There are five points of mechanical interconnection. At the first four points, a shaker, egg, tambourine, and snare drum are played by "slave" robots. The fifth point is the "master" robot that Edgar holds in his hand. He can play any of the physical instruments by teleoperating it through the master robot with force feedback. During teleoperation, the trajectory of the master robot is recorded into wavetable loops in Max/MSP. At later times during the piece, these loops are played back, providing for mechanical synthesis with an especially human feel, even when the loops are played back irregularly or at superhuman speeds.
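The idea of storing a gesture in a wavetable loop and replaying it at a different speed can be illustrated with a small sketch. The piece itself does this in Max/MSP; the Python below is not that patch, only a generic illustration. The function name `play_loop` and the use of linear interpolation between stored points are assumptions made for the example.

```python
def play_loop(table, rate, n_out):
    """Read n_out values from a looping wavetable.

    table: recorded trajectory samples (for example, master robot positions).
    rate:  read speed in table samples per output sample; 1.0 is the original
           speed, 2.0 is twice as fast, 0.5 is half speed.
    """
    size = len(table)
    out = []
    phase = 0.0
    for _ in range(n_out):
        i = int(phase) % size          # index of the sample at or before the phase
        frac = phase - int(phase)      # fractional distance to the next sample
        a = table[i]
        b = table[(i + 1) % size]      # wrap around so the loop is seamless
        out.append(a + frac * (b - a))  # linear interpolation
        phase = (phase + rate) % size
    return out
```

For a recorded table `[0.0, 1.0, 0.0, -1.0]`, `play_loop(table, 0.5, 4)` reads the gesture at half speed and `play_loop(table, 2.0, 3)` at double speed. Varying `rate` from one call to the next would give the kind of irregular or superhuman playback described above.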

Edgar Berdahl is a visiting scholar at CCRMA. He has been studying how force feedback can be incorporated into the design of novel musical instruments.

Using Sound Feedback to Identify and Communicate 2D Shapes

This work shows a technique for allowing a user to "see" a 2D shape without any visual feedback. The user gestures with any universal pointing tool, such as a mouse, a pen tablet, or the touch screen of a mobile device, and receives auditory feedback. This allows the user to experiment and eventually learn enough of the shape to effectively trace it out in 2D. The proposed system is based on the idea of relating spatial representations to sound, which gives the user an auditory perception of a 2D shape. The shapes are predefined and the user has no access to any visual information.
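The description above does not specify how position is mapped to sound, so the following is only one hypothetical mapping, sketched to make the idea concrete: the pitch of a feedback tone rises as the pointer approaches the outline of a hidden circle. The shape, the function names, and the frequency range are all assumptions for illustration and are not taken from the project.

```python
import math


def distance_to_circle(x, y, cx, cy, radius):
    """Distance from the pointer (x, y) to the outline of a circle."""
    return abs(math.hypot(x - cx, y - cy) - radius)


def feedback_frequency(distance, max_distance=1.0, f_near=880.0, f_far=220.0):
    """Map distance to a tone frequency in Hz.

    On the outline (distance 0) the tone is f_near; at max_distance or
    beyond it is f_far. The interpolation is exponential so that equal
    steps in distance sound like equal steps in pitch.
    """
    d = min(max(distance / max_distance, 0.0), 1.0)
    return f_near * (f_far / f_near) ** d
```

With this mapping, a user sweeping the pointer across the surface would hear the tone peak each time the outline is crossed, and could follow the peak to trace the shape.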

kat 6

kat 6 is an interactive art installation and data visualization/sonification project which at its core is a musical instrument. It sources a local or network-based meteorological database from the National Oceanic and Atmospheric Administration (NOAA) consisting of weather data (wind speed, direction, atmospheric density and geographic location) for Hurricane Katrina. The user 'plays' the instrument by moving through the hurricane with a special three-dimensional mouse, analogous to flying through the storm and experiencing what it might be like at that location and elevation in the hurricane itself, not unlike a 'Hurricane Hunter' aircraft from which some of the data originates.

A sound and video system surrounds the player who interacts with the database using a haptic 3D mouse/controller typically used for gaming. The storm characteristics at the area pointed to by the mouse are rendered by an actual physical model of the atmosphere in that spot. The storm experience is simultaneously represented with sound (noise/storm sounds), visuals (violent cloud movement), and haptics (vibration/jerking) from the controller. With the controller, the user can move from the calm extremities of the storm into the more violent areas near the eye and experience a physically modeled representation of what it might sound, look and feel like.

GRIP MAESTRO

The GRIP MAESTRO is a hand-exerciser re-imagined as a musical instrument via its augmentation with sensors and communication with software mappings. Magnet/Hall effect sensor pairs are placed under each of four finger-pads and two beneath a palm-pad, to measure their displacement by the performer's hand, and an accelerometer is used to capture the performer's gestures with the instrument in space. Connected to a computer through an Arduino Nano microcontroller, the signals from these sensors are carefully mapped using Max/MSP and then passed to ChucK for sound (or video) creation and manipulation.

El Dinosaurio

El Dinosaurio is an analog synthesizer I designed and built from scratch a long time ago (it was finished in 1981). It stayed silent for a long time, but after I added a MIDI to CV interface a couple of years ago, it is now being used live in concert in some of my pieces.
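For readers unfamiliar with MIDI to CV conversion, the arithmetic can be sketched as follows. This assumes the widely used 1 volt per octave convention and a hypothetical reference note that maps to 0 V; whether El Dinosaurio's interface uses this scaling is not stated here, so treat both values as placeholders.

```python
def midi_note_to_cv(note, reference_note=36, volts_per_octave=1.0):
    """Convert a MIDI note number to a pitch control voltage.

    Assumes a linear volts-per-octave scale: every 12 semitones above
    reference_note adds volts_per_octave volts. Both defaults are
    illustrative assumptions, not measured values.
    """
    if not 0 <= note <= 127:
        raise ValueError("MIDI note numbers range from 0 to 127")
    return (note - reference_note) * volts_per_octave / 12.0
```

Under these assumptions, a note one octave above the reference yields 1 V and each semitone adds one twelfth of a volt.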

Sounds of mimes

This project incorporates graphics and sound with the use of an innovative controller by Mad Catz. The controller consists of a box on the floor with wires that come out and connect to gloves that the player wears. While wearing the gloves and watching the screen, a player can imitate actions such as bowling, fishing, and shooting a bow and arrow to trigger the appropriate sonic response through multiple speakers. There is even a sonic wall where someone can act like a mime and knock on the invisible wall.

Software Tools

Planet CCRMA at Home is a collection of open source programs that you can add to a computer running Fedora Linux to transform it into an audio/multimedia workstation with a low-latency kernel, current audio drivers and a nice set of music, MIDI, audio and video applications (with an emphasis on real-time performance). It replicates most of the Linux environment we have been using for years here at CCRMA for our daily work in audio and computer music production and research. Planet CCRMA is easy to install and maintain, and can be upgraded from our repository over the web. Bootable CD and DVD install images are also available. This software is free.

http://ccrma.stanford.edu/planetccrma/software

Ardour - Multitrack Sound Editor

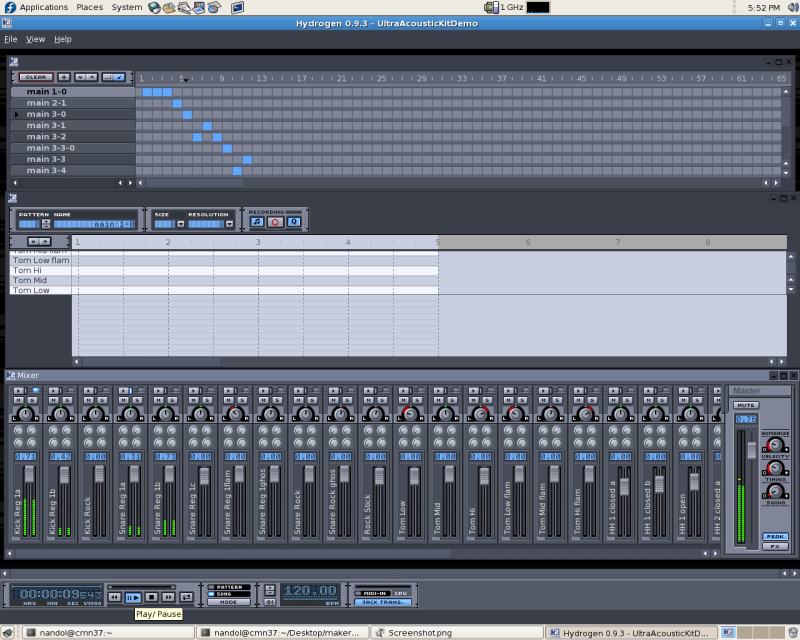

Hydrogen - Drum Sequencer

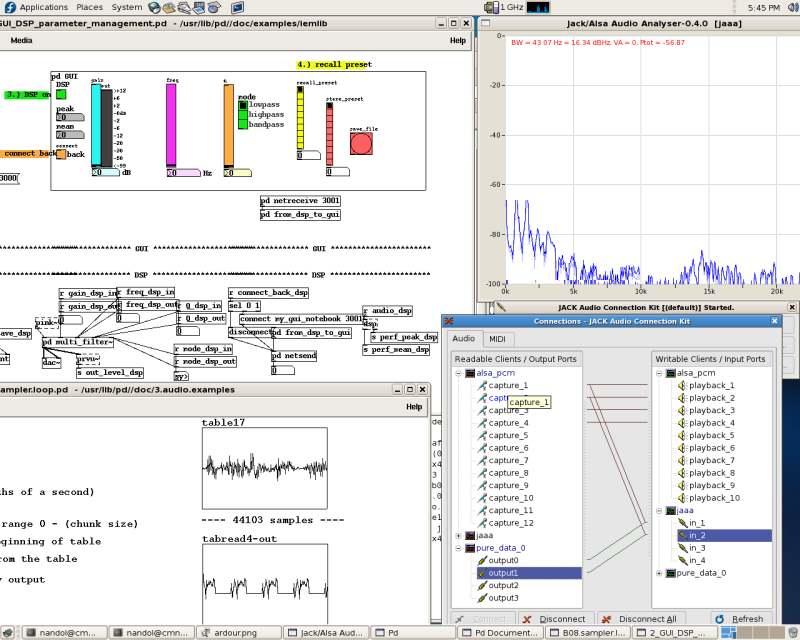

Pd, Jack and Jaaa - Real-time audio tools