Difference between revisions of "MakerFaire"

Revision as of 00:04, 12 March 2008

NewPicture

Introduction

The Center for Computer Research in Music and Acoustics (CCRMA, pronounced "karma") is an interdisciplinary center at Stanford University dedicated to artistic and technical innovation at the intersection of music and technology. We are a place where musicians, engineers, computer scientists, designers, and researchers in HCI and psychology get together to develop technologies and make art. In recent years, the question of how we interact physically with electronic music technologies has fostered a growing new area of research that we call Physical Interaction Design for Music. We emphasize practice-based research, using DIY physical prototyping with low-cost and open source tools to develop new ways of making and interacting with sound. At the Maker Faire, we will demonstrate the low-cost hardware prototyping kits and the customized open source Linux software distribution that we use to develop new sonic interactions, as well as some exciting projects that have been developed using these tools. Below you will find photos and descriptions of the tools and projects we will demonstrate.

Software Tools

Planet CCRMA at Home is a collection of open source programs that you can add to a computer running Fedora Linux to transform it into an audio/multimedia workstation with a low-latency kernel, current audio drivers and a nice set of music, MIDI, audio and video applications (with an emphasis on real-time performance). It replicates most of the Linux environment we have been using for years here at CCRMA for our daily work in audio and computer music production and research. Planet CCRMA is easy to install and maintain, and can be upgraded from our repository over the web. Bootable CD and DVD install images are also available. This software is free.

http://ccrma.stanford.edu/planetccrma/software

Ardour - Multitrack Sound Editor

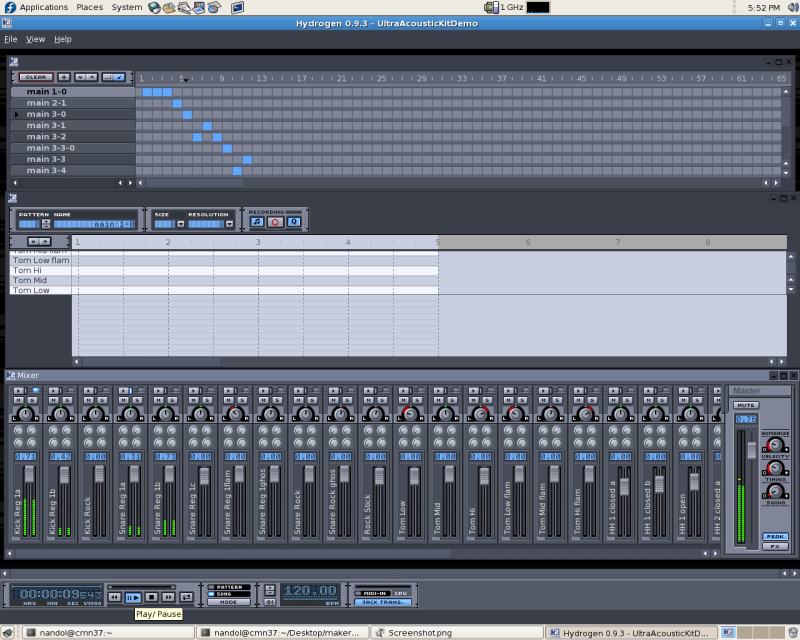

Hydrogen - Drum Sequencer

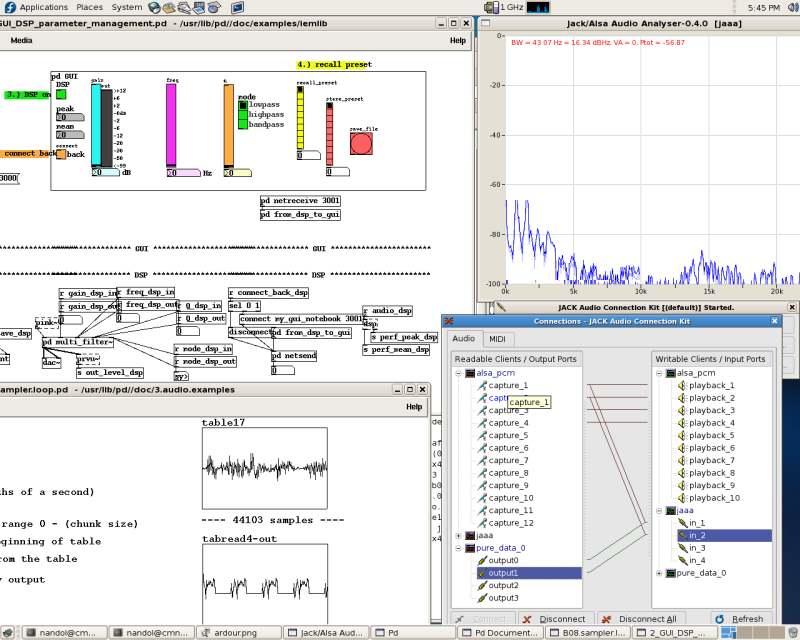

Pd, Jack and Jaaa - Real-time audio tools

Hardware Tools

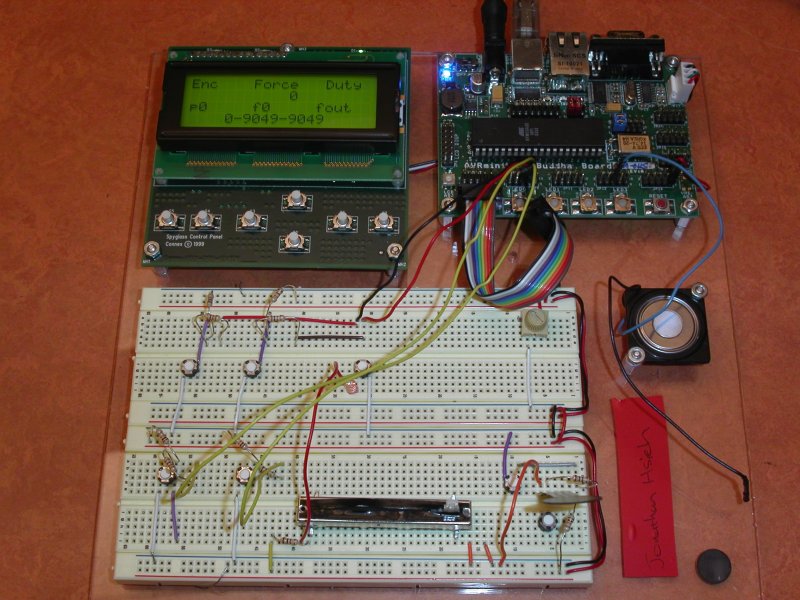

In our courses, we use a prototyping kit based on Atmel AVR microcontrollers, with Pascal Stang's AVRmini at the core. To the AVRmini, we attach an I2C LCD display, solderless breadboard strips, a loudspeaker and sometimes a MIDI jack. In student lab exercises and for prototyping, we hook up sensor circuits on the breadboard and send control signals to a Linux PC over USB, serial, MIDI or Ethernet in order to control open source real-time sound synthesis and processing software. These prototypes are then often built into larger-scale music and interactive sound art projects like the ones below that we will demonstrate at the Maker Faire.

WaveSaw

Most commercial electronic instruments limit the control of sound to one-dimensional controls, such as knobs or faders, whose settings are mapped through various levels of abstraction to create a resulting waveform or timbre. The WaveSaw is inspired by a desire to control sound in a direct and physical way. We want to touch a sound, to manipulate it with our hands as if it were a physical object. The WaveSaw is an instrument whose physical shape is mapped directly to the shape of a waveform or spectrum, so that changing the shape of the instrument changes the sound.

The WaveSaw is made of a long, flat, saw-like strip of flexible metal with a wooden handle on each end, with which the user can bend, twist, and rotate the instrument. The shape of the blade is measured by flex sensors along its length. The flex sensor values are sent via a microcontroller to a computer, where a custom Pure Data (Pd) object recreates the lengthwise shape of the saw blade as a table. This table is then used as the basis of either scanned synthesis or spectral filtering. In the case of scanned synthesis, the table is used as a wavetable that is scanned at audio rates to generate a pitched tone whose waveform, and hence spectrum, varies with the shape of the saw blade. Similarly, the table can be used as the spectral shape of a multi-band filter through which any signal can be passed. Additionally, the WaveSaw has flex sensors oriented width-wise on the saw that measure the amount of twist applied to the blade, an accelerometer for sensing orientation in space, and a pressure-sensitive resistor on one handle to measure how hard the handle is squeezed.
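The wavetable-scanning step described above can be sketched roughly as follows. This is only an illustration in Python, not the custom Pd object itself: the function names, the table size, the linear interpolation between sensors, and the sample flex readings are all assumptions made for the example.

```python
def blade_to_wavetable(flex_values, table_size=256):
    """Interpolate a few flex-sensor readings into one wavetable cycle.

    flex_values is a hypothetical list of readings taken along the blade.
    """
    # Remove the mean so the resulting waveform has no DC offset.
    mean = sum(flex_values) / len(flex_values)
    centered = [v - mean for v in flex_values]
    n = len(centered)
    table = []
    for i in range(table_size):
        # Fractional position along the blade; wrap so the cycle closes.
        pos = i * n / table_size
        lo = int(pos) % n
        hi = (lo + 1) % n
        frac = pos - int(pos)
        table.append(centered[lo] * (1.0 - frac) + centered[hi] * frac)
    # Normalise to the range [-1, 1]; a perfectly flat blade gives silence.
    peak = max(abs(x) for x in table) or 1.0
    return [x / peak for x in table]


def scan_table(table, freq_hz, sample_rate=44100, num_samples=441):
    """Scan the table at audio rate with a simple phase accumulator."""
    step = freq_hz * len(table) / sample_rate  # table positions per sample
    phase = 0.0
    out = []
    for _ in range(num_samples):
        out.append(table[int(phase) % len(table)])
        phase += step
    return out


# Example: four made-up sensor readings, scanned at 220 Hz.
table = blade_to_wavetable([0.1, 0.5, 0.9, 0.5])
samples = scan_table(table, freq_hz=220.0)
```

Bending the blade changes the sensor readings, which changes the table and therefore the waveform, while the scan frequency sets the pitch independently of the shape.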

Myrtle

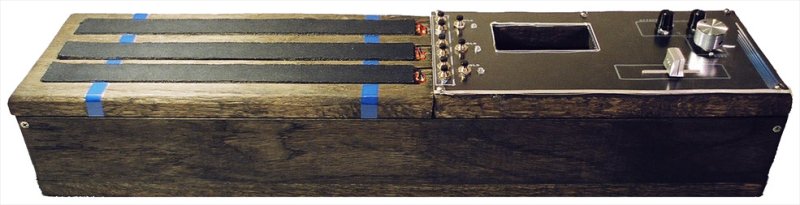

Myrtle is a music controller that communicates with a computer via OSC (Open Sound Control, an open-ended machine communication protocol) and MIDI simultaneously. The interface is primarily designed for controlling the pitch, amplitude envelope, and rhythm of three sound sources in real time. Designed in conjunction with the Pd environment, Myrtle currently controls a bank of FM synthesizers via OSC, and can transmit 12 different user-selectable MIDI notes via a standard MIDI out port. These notes are triggered in real time using a fader.
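To give a feel for what an OSC control message looks like on the wire, here is a minimal sketch that packs one by hand following the OSC 1.0 binary layout (null-terminated strings padded to four bytes, big-endian 32-bit floats). The address "/fm/1/pitch" is a hypothetical example, not Myrtle's actual address space, and the helper names are ours.

```python
import struct


def osc_string(text):
    """Encode an OSC string: ASCII, null-terminated, padded to 4 bytes."""
    data = text.encode("ascii") + b"\x00"
    data += b"\x00" * (-len(data) % 4)
    return data


def osc_message(address, *floats):
    """Build an OSC message carrying only float32 arguments."""
    type_tags = "," + "f" * len(floats)
    payload = b"".join(struct.pack(">f", value) for value in floats)
    return osc_string(address) + osc_string(type_tags) + payload


# Hypothetical message setting the pitch of the first FM voice to 440 Hz.
message = osc_message("/fm/1/pitch", 440.0)
```

Such a packet would typically be sent to the synthesis software as a UDP datagram, for example with Python's standard socket module.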

Myrtle was designed to be used in a live-performance environment, played solo or as part of an ensemble. Instead of an "all-in-one" design, the functions of Myrtle are fairly specific, giving it a unique sound and feel. However, since it is only a controller and not a stand-alone instrument, it can be mapped to any number of different sounds or devices, limited only by the numerical data it puts out. In typical use, the left hand controls pitch via the foam strips (see below) and the right hand manipulates the various controls on the right side, although there are many other ways to use the controller. The goal was to create a new and unique tool for musical expression that can control audio synthesis in complicated ways through an intuitive, easy-to-use design. The combination of three different controls (a fader, optical sensors, and a series of buttons) used in conjunction with one another was integral to achieving this goal.

Please see the detailed Myrtle project page here: http://ccrma.stanford.edu/~breeder/projects/myrtle/myrtle.html

Trees of Pythagoras

The Trees of Pythagoras is an acoustic, electromagnetically actuated, computer-controlled, long-stringed instrument, with the important distinction of being a single instrument composed of three physically separate parts. Each piece is, in essence, constructed like a square, extra-large member of the violin family. Each piece consists of a large soundbox connected to a steel string about ten feet long. Each piece sounds acoustically with a wide dynamic range, but only one unit is intended to be played by a musician. The other two pieces are actuated using electromagnets, which are controlled through a Max/MSP patch. Additionally, all three pieces have piezo-electric transducers that feed the sound of each unit back to the computer. The Trees of Pythagoras is a concert instrument intended for live performance.

The three soundboxes are all similar in construction to a member of the violin family. They are constructed using a variety of plywoods, eliminating differences in wood stiffness due to grain direction and thus allowing for the square shape. Different thicknesses and sometimes different cuts of plywood are used for the top and back plates, allowing for two different sets of plate resonances. Each has an internal architecture with a soundpost and bass bar. Each unit uses a standard contrabass bridge. An important difference from the violin family is that the top and back plates of these soundboxes are considerably more flexible, allowing for greater coupling with the internal air column resonance. A steel signpost bar serves as the neck, and standard 18-gauge steel wire from the local hardware store is used as the string. The string is freestanding, not unlike that of an Erhu: it connects to the steel bar at the bottom, lies over the bridge, and is attached at the top of the unit to a tuning peg inserted directly into the steel bar.

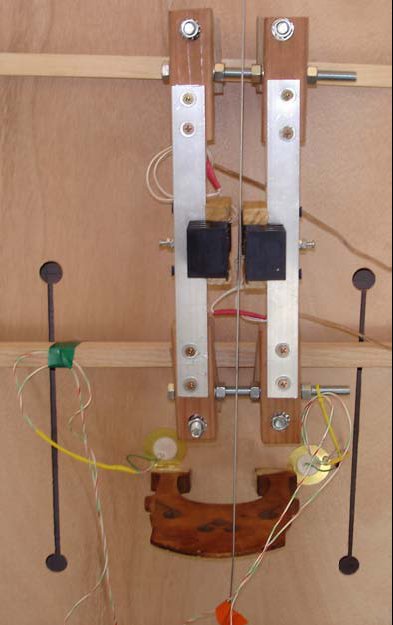

Two of the three units are actuated using an electromagnet assembly. This assembly is powered using a standard audio amplifier. I acquired fairly powerful electromagnets which have resistances of around 4 ohms at 0 Hz, like many small speakers. For this instrument, the priority was force. I needed to be able to create large, low-frequency waves on the steel strings at a respectable amplitude. Because I am interested in complex sounds, issues concerning distortion are not important. After much research and experimentation, I created an assembly using two electromagnets, facing each other on either side of the steel string. Using ideas developed by Edgar Berdahl and Steven Backer (see http://ccrma.stanford.edu/~sbacker/empp/berdahl_backer.pdf), I added two rare-earth magnets on either side of each electromagnet, which intensify the magnetic field. A stereo audio amplifier is then used to feed the same signal to both electromagnets, with the polarity reversed for one so that while one magnet is pushing the other is pulling. This design provides ample force while still being powered by a low-wattage amplifier. With too much power, the electromagnets will overheat, so each electromagnet is also attached to a heat sink. For all three units, I constructed a basic piezo-electric transducer using piezo discs and an op-amp based impedance buffer as a pre-amplifier.
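The push-pull drive can be illustrated with a short sketch that generates a stereo signal whose two channels are the same waveform with opposite polarity. This is an assumption-laden illustration rather than the Max/MSP patch used for the instrument: the function name, the sine test signal, and the parameter values are invented for the example, and the polarity could equally be reversed in the wiring rather than in software.

```python
import math


def push_pull_frames(freq_hz, amplitude=0.5, sample_rate=44100, num_frames=441):
    """Return (left, right) frames where right is the inverted left channel."""
    frames = []
    for n in range(num_frames):
        sample = amplitude * math.sin(2.0 * math.pi * freq_hz * n / sample_rate)
        # One electromagnet pushes while the opposite one pulls.
        frames.append((sample, -sample))
    return frames


# Example: a 55 Hz drive signal, 10 ms long at 44.1 kHz.
frames = push_pull_frames(55.0)
```

Keeping the amplitude parameter well below full scale is one simple way to limit the power delivered to the coils.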

Accordiatron

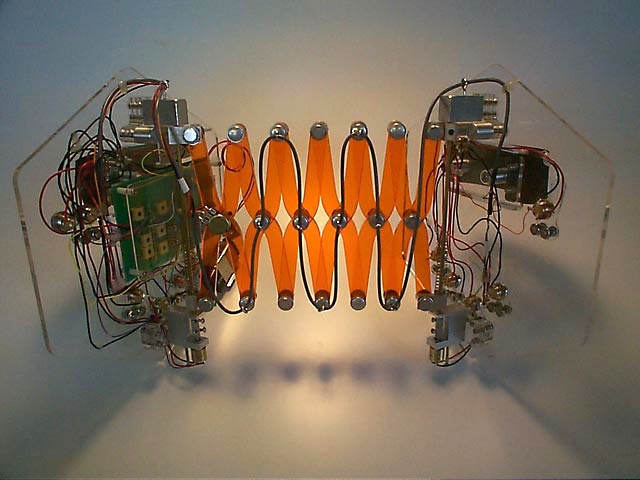

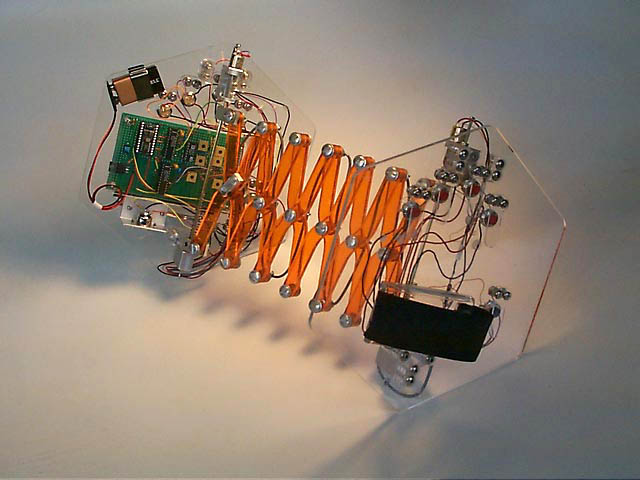

The Accordiatron is a new MIDI controller for real-time performance based on the paradigm of a conventional squeeze box or concertina. It translates the gestures of a performer to the standard communication protocol of MIDI, allowing for flexible mappings of performance data to sonic parameters. When used in conjunction with a real-time signal processing environment, the Accordiatron becomes an expressive, versatile musical instrument. A combination of sensory outputs providing both discrete and continuous data gives the subtle expressiveness and control necessary for interactive music.

The Accordiatron detects the rotation and distance between the hands, the latter by means of a potentiometer embedded in the scissor linkage that connects the two end panels. Buttons on either end panel can be used for triggering notes, samples, or any other discrete input. The Accordiatron is based on the premise of building a new interface to capture what are known to be expressive performance gestures, while divorcing those gestures from a particular sound source. The Accordiatron is gathering a growing repertoire of compositions using a variety of mappings.
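As a rough illustration of how continuous and discrete sensor data become MIDI, the sketch below scales a potentiometer reading to a 7-bit control change value and builds a note-on message for a button press. The 10-bit reading range, the controller number, and the note number are assumptions for the example; the Accordiatron's actual mappings are not specified here.

```python
def pot_to_cc_value(reading, max_reading=1023):
    """Scale a raw potentiometer reading (assumed 0..1023) to MIDI 0..127."""
    reading = min(max(reading, 0), max_reading)
    return reading * 127 // max_reading


def control_change(channel, controller, value):
    """Three-byte MIDI control change message (status byte 0xB0 + channel)."""
    return bytes([0xB0 | (channel & 0x0F), controller & 0x7F, value & 0x7F])


def note_on(channel, note, velocity=100):
    """Three-byte MIDI note-on message (status byte 0x90 + channel)."""
    return bytes([0x90 | (channel & 0x0F), note & 0x7F, velocity & 0x7F])


# Hypothetical mapping: hand distance -> controller 1, a button -> middle C.
distance_message = control_change(0, 1, pot_to_cc_value(512))
button_message = note_on(0, 60)
```

Because the messages carry only numbers, the receiving software is free to map the same gesture to any sonic parameter.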