Difference between revisions of "MakerFaire"

(→Introduction) |

(→Leap the Dips) |

||

| (84 intermediate revisions by 6 users not shown) | |||

| Line 1: | Line 1: | ||

| − | [[ | + | [[File:Granuleggs.jpg|750px]] |

| + | |||

| + | |||

==Introduction== | ==Introduction== | ||

The [http://ccrma.stanford.edu Center for Computer Research in Music and Acoustics] (CCRMA -- pronounced "karma") is an interdisciplinary center at Stanford University dedicated to artistic and technical innovation at the intersection of music and technology. We are a place where musicians, engineers, computer scientists, designers, and researchers in HCI and psychology get together to develop technologies and make art. In recent years, the question of how we interact physically with electronic music technologies has fostered a growing new area of research that we call Physical Interaction Design for Music. We emphasize practice-based research, using DIY physical prototying with low-cost and open source tools to develop new ways of making and interacting with sound. At the Maker Faire, we will demonstrate the low-cost hardware prototyping kits and our customized open source Linux software distribution that we use to develop new sonic interactions, as well as some exciting projects that have been developed using these tools. Below you will find photos and descriptions of the projects and tools we will demonstrate. | The [http://ccrma.stanford.edu Center for Computer Research in Music and Acoustics] (CCRMA -- pronounced "karma") is an interdisciplinary center at Stanford University dedicated to artistic and technical innovation at the intersection of music and technology. We are a place where musicians, engineers, computer scientists, designers, and researchers in HCI and psychology get together to develop technologies and make art. In recent years, the question of how we interact physically with electronic music technologies has fostered a growing new area of research that we call Physical Interaction Design for Music. We emphasize practice-based research, using DIY physical prototying with low-cost and open source tools to develop new ways of making and interacting with sound. 
At the Maker Faire, we will demonstrate the low-cost hardware prototyping kits and our customized open source Linux software distribution that we use to develop new sonic interactions, as well as some exciting projects that have been developed using these tools. Below you will find photos and descriptions of the projects and tools we will demonstrate. | ||

| − | |||

| − | |||

| − | |||

| − | |||

| − | == | + | ==The Blade Axe== |

| − | [[Image: | + | Romain Michon |

| + | |||

| + | The BladeAxe is an iPad-based musical instrument leveraging the concepts of “augmented mobile device” and “hybrid physical model controller.” By being almost fully standalone, it can be used easily on stage in the frame of a live performance by simply plugging it to a traditional guitar amplifier or to any sound system. Its acoustical plucking system provides the performer with an extended expressive potential compared to a standard controller | ||

| + | |||

| + | [[File:BladeAx2016.jpg|500px]] | ||

| + | |||

| + | |||

| + | |||

| + | ==Granuleggs == | ||

| + | Alison Rush, David Grunzweig, and Trijeet Mukhopadhyay | ||

| + | |||

| + | The Granuleggs is a new music controller for granular synthesis which allows a musician to explore the textural potential of their samples in a unique and intuitive way, with a focus on creating large textures instead of distinct notes. Each controller is egg shaped, designed to fit the curve of your palm as you gyrate the eggs and tease your fingers to find yourself the perfect soundscape. | ||

| + | |||

| + | [[File:Granuleggs.jpg|500px]] | ||

| + | |||

| + | |||

| + | ==BelugaBeats== | ||

| + | Jack Atherton | ||

| + | |||

| + | BelugaBeats is a whale-based step sequencer. You can add whales to 8 rows of a grid, and when a wave washes over them, they will sound their blowholes and play their notes. Changing a whale's size alters the pitch it sings. Occasionally, a whale will get distracted by a fish and play its note while underwater. Unfortunately, there is nothing you can do about this. | ||

| + | |||

| + | [[File:Belugabeats.png|500px]] | ||

| + | |||

| + | ==Chorest== | ||

| + | Jack Atherton | ||

| + | |||

| + | Welcome to your personal Chorest! Walk around, plant seeds, grow trees, and hear the wind in the air! Look down from a bird's eye view, or move through the trees on the chorest floor. When you breathe on your trees, they'll play a chord for you. Or, try singing to them! Trees need a noisy sound to grow -- try stroking your microphone! As you grow your trees, their sound will mature. Don’t forget, it’s always possible to plant new seeds and start anew. Occasionally, you may see a ghost from the past. Please, do not be alarmed. | ||

| + | |||

| + | [[File:Chorest.png|500px]] | ||

| + | |||

| + | |||

| + | ==Leap the Dips== | ||

| + | Jack Atherton | ||

| + | |||

| + | This rolling ball sculpture invites participants to test their skill at "leaping the dips" on a copper model of the world's oldest operating roller coaster. The project's aesthetic draws from a practice certainly much older than the roller coaster -- teenage rebellion, and the ensuing adult panic over the activities of "kids these days." Marbles roll over tracks and supports that are fashioned out of soldered copper wire. The tracks feature dips that cause the marbles to lift off the track and crash back down, as was possible in early roller coasters without up-stop wheels on the underside of the track. Take care in the placement of your marble not to cause the marbles to completely fly off the track! The dips are fitted with sensors that drive an algorithm in Max/MSP for giving aural feedback and a cultural experience to the users. | ||

| + | |||

| + | [[File:LeapTheDipsPicture.jpg|500px]] | ||

| + | |||

| + | == Music Maker== | ||

| + | Sasha Leitman, John Granzow | ||

| + | |||

| + | Music Maker (https://ccrma.stanford.edu/musicmaker) is a free online resource that provides files for 3D printing woodwind and brass mouthpieces and tutorials for using those mouthpieces to learn about acoustics and music. The mouthpieces are designed to fit into standard plumbing and automobile parts that can be easily purchased at home improvement and automotive stores. The goal is to make a musical tool that can be used as simply as a set of building blocks but that aims to bridge the gap between our increasingly digital world of fabrication and the real-world materials that make up our daily lives. | ||

| + | An increasing number of schools, libraries and community groups are purchasing 3D printers but many are still struggling to create engaging and relevant curriculum that ties into academic subjects. Making new musical instruments is a fantastic way to learn about acoustics, physics and mathematics. | ||

| + | |||

| + | |||

| + | [[File:P1000118.jpg|500px]] [[File:TrumpetWithBell.jpg|500px]] | ||

| + | |||

| + | |||

| + | ==Cetacant== | ||

| + | Alison Rush | ||

| + | |||

| + | The cetacant is a musical instrument inspired by whales and designed to accompany a performance of Vela 6911, a piece by Victor Gama. The cetacant emulates features of the cetacean vocal apparatus, using tubes and chambers full of air, water, and oil to produce and amplify sounds. The attached photo is of a prototype; the instrument's final form will resemble a suspended sphere, evoking the bubbles produced by a vocalizing whale, or our watery planet as seen from space. | ||

| + | |||

| + | [[File:Cetacant-diagram1.jpg|500px]] | ||

| + | |||

| + | |||

| + | |||

| + | ==Mephisto== | ||

| + | Romain Michon | ||

| + | |||

| + | Mephisto is a small battery powered open source Arduino based device. Up to five sensors can be connected to it using simple 1/8" stereo audio jacks. The output of each sensor is digitized and converted to OSC messages that can be streamed on a WIFI network to control any Faust generated app. | ||

| + | The goal of Mephisto is to provide an easy way for musicians to interact with the different parameters of a Faust object or any other OSC compatible software during a live performance. | ||

| + | As a "DIY" open source project, Mephisto only uses open source hardware (Arduino, etc.) and was designed to be easily built by anyone. | ||

| + | |||

| + | |||

| + | [[File:Mephisto1.jpg|500px]] | ||

| + | [[File:Mephisto2.jpg|500px]] | ||

| + | |||

| + | |||

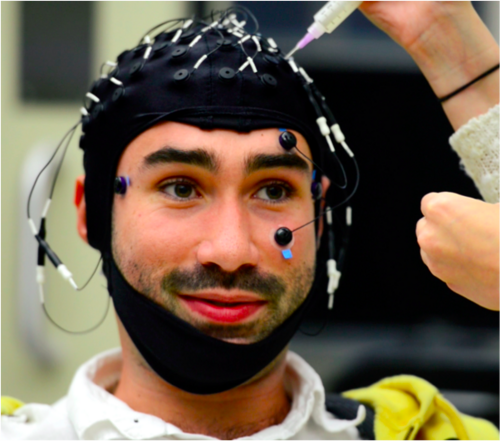

| + | == Hearing Polyphony - A Game and Experiment! == | ||

| + | Madeline Huberth | ||

| + | |||

| + | I work in the Neuromusic lab at CCRMA, whose goal on the whole is to investigate phenomena related to understanding music. Specifically, I've been doing work this past year in how our brain processes polyphony (hearing multiple melodies at once), and will present a game I created that uses the stimuli used in our experiment as a way of understanding the experiment. The experiment and our findings will also be on a poster that I can bring. | ||

| + | |||

| + | Our experiment shows that your brain can detect changes in polyphonic patterns automatically - how easy is it for you to do it consciously? Play and find out! | ||

| + | |||

| + | |||

| + | [[File:Romain_cap.png|500px]] | ||

| + | |||

| + | |||

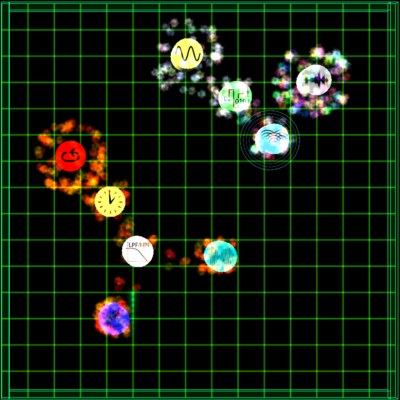

| + | ==CollideFx== | ||

| + | Chet Gnegy | ||

| + | |||

| + | CollideFx is a real-time audio effects processor that integrates the physics of real objects into the parameter space of the signal chain. Much like in a traditional signal chain, a user can choose a series of effects and offer realtime control to their various parameters. In this work, we introduce a means of creating tree-like signal graphs that dynamically change their routing in response to position changes of the unit generators. The unit generators are easily controllable using the click and drag interface and respond using familiar physics, including conservation of linear and angular momentum and friction. With little difficulty, users can design interesting effects, or alternatively, can fling a unit generator into a cluster of several others to obtain more surprising results, letting the physics engine do the decision making. | ||

| + | |||

| + | [[Image:Chet.png|400px]] | ||

| + | |||

| + | |||

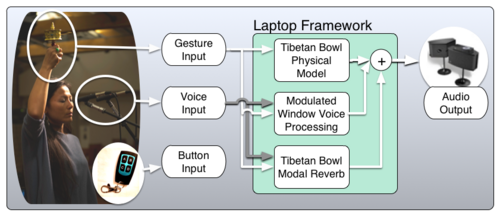

| + | ==The Processed Typewriter== | ||

| + | Andrew Watts | ||

| + | |||

| + | Other than the human voice, musical instruments convey primarily | ||

| + | abstraction through sound content. We interpret these sounds as music to | ||

| + | varying degrees, but if one were to step away from the cultural | ||

| + | associations, the noise would remain highly ambiguous. With a typewriter | ||

| + | the sounds inherent in the machine's use also contain linguistic meaning. | ||

| + | Having this added layer to work with, a composer could pair the text and | ||

| + | the sounds in a multitude of ways, even utilizing the ambiguity of | ||

| + | semantic meaning with the ill-defined meaning of typewriter sounds. For | ||

| + | this project I am specifically thinking towards a performance in the late | ||

| + | spring during a residency with famed soprano Tony Arnold. Rather than a | ||

| + | typical accompaniment for a solo soprano piece, like as a piano, it would | ||

| + | be much more interesting and musically fertile to have her singing lyrics | ||

| + | which are actively being typed in the background. Not only is the text | ||

| + | being transformed into sound through the vocal line, but also the | ||

| + | hammering away of the typewriter. Furthermore, these sounds and the images | ||

| + | of the text appearing on the page would be processed, enabling a wide | ||

| + | range of articulations, imagery, references, and audio sculpting. | ||

| + | |||

| + | [[File:Typewriter1.jpg|500px]] [[File:Typewriter2.png|500px]] | ||

| + | |||

| − | + | ==String== | |

| − | + | Joshua Coronado | |

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | String is controller used to generate waveforms, curves, and envelopes using a camera, coloured string, and Max/MSP. Users draw curves representing objects such as a filter envelope using coloured string. The coloured curve is then captured by a camera and deciphered into a digital curve to be rendered out to audio by Max/MSP. | |

| − | + | ||

| − | + | [[File:Strings.JPG|500px]] | |

| + | [[File:Strings_2.JPG|500px]] | ||

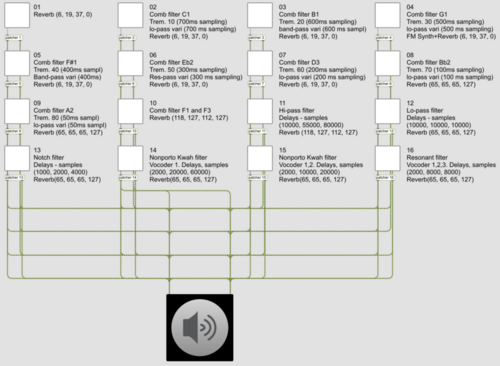

| − | == | + | ==Tibetan Singing Prayer Wheel== |

| − | + | Yoo-yoo Yeh | |

| + | Inspired by the traditional Tibetan prayer wheel and Tibetan singing bowl, we present the Tibetan Singing Prayer Wheel, a physical motion sensing controller that allows you to play virtual Tibetan singing bowls as well as processes your voice when you perform several gestures - spinning the wheel at different speeds, raising and lowering your arm, and tapping a button on the outside. A separate RF transmitter allows you to transition between the three distinct sound design layers: (1) a Faust-STK physical model of a Tibetan singing bowl, (2) a delayed and windowed voice processing layer, and (3) a novel modal reverb model of an actual Tibetan singing bowl, that takes the voice as input. The system is designed to be easy for anyone to pick up and improvise with - go ahead and try it! | ||

| − | + | [[File:NIME_System_Architecture_v2.png|500px]] | |

| − | == | + | ==Mariah== |

| − | + | Mathew Horton | |

| − | + | Mariah sonifies the "diva finger wave." Mariah is a letter of love to women like Whitney Houston, Christina Aguilera, and its namesake, Mariah Carey. Simple draw on the screen with your finger and sing a note. Instant riffs and trills just like the great divas of the 80's, 90's, and 00's! | |

| − | on your | + | |

| − | + | But the amazing, unexpected outcome of creating Mariah was a really interesting feedback instrument. Mariah takes in audio, pitch shifts it, and plays it What you end up with at low levels of sounds is a "self-generating" feedback instrument that creates some really crazy effects. | |

| − | + | ||

| − | + | ||

| − | + | [[File:2015-02-10_11.58.33.png|500px]] | |

| − | + | ||

| − | + | ==Hill== | |

| − | + | Mathew Horton | |

| − | + | ||

| − | + | Hill is a software application for musical and visual accompaniment of spoken word poetry. It is inspired by the minimalist video game, Mountain, as well as Lauren Zuniga's poem, "World's Tallest Hill". Hill builds a scene through which the text of a poem can move. The view of the scene can shift, and depending on the particular place at which the scene is viewed, the accompanying audio is transformed in different ways. Hill allows users to "compose" an accompaniment for a poem by adhering to a sort of "score." | |

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | ||

| − | + | [[File:Hill.png|500px]] | |

| − | + | ||

| − | + | ||

| − | |||

| − | |||

| + | ==Tower of Power== | ||

| + | Graham Davis, Connor Kelley | ||

| − | + | Tower of Power (ToP for short) is an interactive tower of wood that generates sound and sweet LED's. Inspired by the Hunchback of Notre Dame and 1970s funk, ToP is the auditory column for our generation. Tact is a project designed to make sound design and beat construction more intuitive. The instrument is a glove mounted with contact microphones that allows the wearer to record, transform and perform natural sounds at the touch of a finger. A wireless iPad interface provides the wearer with sound-shaping controls, playback effects and glove feedback. Amplify your interaction with the world via tactile sampling and contact playback with Tact. String is controller used to generate waveforms, curves, and envelopes using a camera, coloured string, and Max/MSP. Users draw curves representing objects such as a filter envelope using coloured string. The coloured curve is then captured by a camera and deciphered into a digital curve to be rendered out to audio by Max/MSP. | |

| − | + | ||

| − | + | [[File:Tower_of_power.png|500px]] | |

| − | + | [[File:Tower_of_power2.png|500px]] | |

| − | + | ==Sonic Anxiety== | |

| + | Victoria Grace, Joel Chapman | ||

| + | Sonic Anxiety is an ironic twist on performance anxiety, where the performance is the sound of my anxiety while locked in a cage. Sensors track my breathing to control the harmony and timbre while my pulse sets the pace and drum rhythms of the piece. | ||

| − | |||

| − | + | [[File:Cage.png|500px]] | |

| + | ==lovelyStepSequencer== | ||

| + | Micah Arvey | ||

| − | + | 3 dimensional step sequencer. | |

| − | + | ||

| − | + | [[File:BSsWorking.png|500px]] | |

| + | ==Velokeys== | ||

| + | Austin Whittier | ||

| + | Velokeys is a velocity-sensitive QWERTY keyboard for desktop jamming. Millions of people spend every day training their brains with a QWERTY key layout – at work, at school, and at home. This project is meant to meld the expressivity | ||

| − | [[ | + | [[File:Qwerty.png|500px]] |

| − | |||

| − | == | + | == Busk Box == |

| − | + | Sasha Leitman | |

| − | |||

| + | The Busk Box is a street performance system that combines the traditions of wandering street performers and musicians with the modern technologies. Inside of a 1911 wooden trunk, 2 6" speakers, 1 10" subwoofer, 2 class-T amplifiers and a portable mixer are all powered by lithium-ion batteries. In addition, the box is supported by folding wheels and legs which enable the box to be set up and torn down in less than 3 minutes. This platform was designed to bring experimental and electronic music to the San Francisco Fisherman's Wharf district. | ||

| − | [[ | + | [[Image:BuskBox.jpg|400px]] |

| − | + | ||

Latest revision as of 23:40, 4 May 2016

Contents

- 1 Introduction

- 2 The Blade Axe

- 3 Granuleggs

- 4 BelugaBeats

- 5 Chorest

- 6 Leap the Dips

- 7 Music Maker

- 8 Cetacant

- 9 Mephisto

- 10 Hearing Polyphony - A Game and Experiment!

- 11 CollideFx

- 12 The Processed Typewriter

- 13 String

- 14 Tibetan Singing Prayer Wheel

- 15 Mariah

- 16 Hill

- 17 Tower of Power

- 18 Sonic Anxiety

- 19 lovelyStepSequencer

- 20 Velokeys

- 21 Busk Box

Introduction

The Center for Computer Research in Music and Acoustics (CCRMA -- pronounced "karma") is an interdisciplinary center at Stanford University dedicated to artistic and technical innovation at the intersection of music and technology. We are a place where musicians, engineers, computer scientists, designers, and researchers in HCI and psychology get together to develop technologies and make art. In recent years, the question of how we interact physically with electronic music technologies has fostered a growing new area of research that we call Physical Interaction Design for Music. We emphasize practice-based research, using DIY physical prototyping with low-cost and open-source tools to develop new ways of making and interacting with sound. At the Maker Faire, we will demonstrate the low-cost hardware prototyping kits and our customized open-source Linux software distribution that we use to develop new sonic interactions, as well as some exciting projects that have been developed using these tools. Below you will find photos and descriptions of the projects and tools we will demonstrate.

The Blade Axe

Romain Michon

The BladeAxe is an iPad-based musical instrument leveraging the concepts of “augmented mobile device” and “hybrid physical model controller.” Because it is almost fully standalone, it can easily be used on stage in a live performance by simply plugging it into a traditional guitar amplifier or any sound system. Its acoustical plucking system gives the performer greater expressive potential than a standard controller.

Granuleggs

Alison Rush, David Grunzweig, and Trijeet Mukhopadhyay

The Granuleggs is a new music controller for granular synthesis that allows a musician to explore the textural potential of their samples in a unique and intuitive way, with a focus on creating large textures instead of distinct notes. Each controller is egg-shaped, designed to fit the curve of your palm as you gyrate the eggs and tease your fingers to find the perfect soundscape.

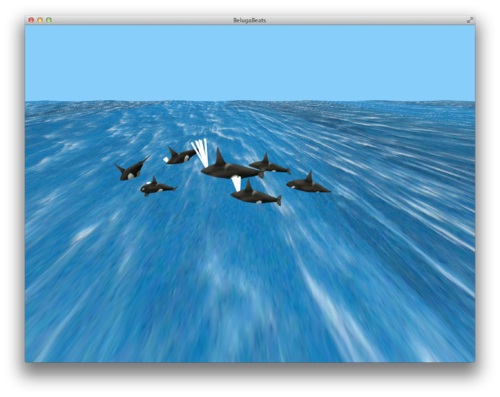
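For readers unfamiliar with granular synthesis, the idea behind the Granuleggs can be sketched in a few lines of Python. This is only an illustration of the general technique (short windowed "grains" cut from a sample and scattered into an output buffer), not the Granuleggs implementation; the function names, the Hann window, and the random placement are all assumptions made for the example.

```python
import math
import random


def hann(n):
    """Hann window of length n (n >= 2); rises from 0 to 1 and back to 0."""
    return [0.5 - 0.5 * math.cos(2 * math.pi * i / (n - 1)) for i in range(n)]


def granulate(sample, grain_len, num_grains, out_len, seed=0):
    """Scatter windowed grains cut from `sample` across an output buffer.

    Hypothetical helper: grain source and destination positions are chosen
    at random, and overlapping grains are summed (overlap-add).
    """
    rng = random.Random(seed)  # seeded so the texture is reproducible
    window = hann(grain_len)
    out = [0.0] * out_len
    for _ in range(num_grains):
        src = rng.randrange(len(sample) - grain_len + 1)
        dst = rng.randrange(out_len - grain_len + 1)
        for i in range(grain_len):
            out[dst + i] += sample[src + i] * window[i]
    return out
```

Raising `num_grains` thickens the texture, while `grain_len` trades recognisable source material (long grains) for a smoother cloud (short grains); a physical controller could plausibly map gestures to parameters like these.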

BelugaBeats

Jack Atherton

BelugaBeats is a whale-based step sequencer. You can add whales to 8 rows of a grid, and when a wave washes over them, they will sound their blowholes and play their notes. Changing a whale's size alters the pitch it sings. Occasionally, a whale will get distracted by a fish and play its note while underwater. Unfortunately, there is nothing you can do about this.
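The sequencing logic described above can be sketched as follows. This is a minimal illustration rather than the BelugaBeats code: the `Whale` class, the 16-step default, and the size-to-pitch mapping (a whale twice as large sings an octave lower) are assumptions chosen for the example, and the fish distraction is left out.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Whale:
    row: int     # 0-7, one of the 8 rows of the grid
    step: int    # column at which the wave reaches this whale
    size: float  # relative size; 1.0 is the reference whale


def whale_pitch_hz(size, base_hz=440.0):
    """Hypothetical mapping: larger whales sing lower pitches."""
    return base_hz / size


def notes_at_step(whales, step):
    """Return (row, frequency) for every whale the wave is washing over."""
    return sorted((w.row, whale_pitch_hz(w.size)) for w in whales if w.step == step)


def run_wave(whales, num_steps=16):
    """Sweep the wave across the grid once, collecting the notes per step."""
    return [notes_at_step(whales, s) for s in range(num_steps)]
```

For example, `run_wave([Whale(0, 0, 1.0), Whale(3, 0, 2.0)], num_steps=2)` sounds both whales on the first step and nothing on the second.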

Chorest

Jack Atherton

Welcome to your personal Chorest! Walk around, plant seeds, grow trees, and hear the wind in the air! Look down from a bird's eye view, or move through the trees on the chorest floor. When you breathe on your trees, they'll play a chord for you. Or, try singing to them! Trees need a noisy sound to grow -- try stroking your microphone! As you grow your trees, their sound will mature. Don’t forget, it’s always possible to plant new seeds and start anew. Occasionally, you may see a ghost from the past. Please, do not be alarmed.

Leap the Dips

Jack Atherton

This rolling ball sculpture invites participants to test their skill at "leaping the dips" on a copper model of the world's oldest operating roller coaster. The project's aesthetic draws from a practice certainly much older than the roller coaster -- teenage rebellion, and the ensuing adult panic over the activities of "kids these days." Marbles roll over tracks and supports that are fashioned out of soldered copper wire. The tracks feature dips that cause the marbles to lift off the track and crash back down, as was possible in early roller coasters without up-stop wheels on the underside of the track. Take care when placing your marble so that it does not fly off the track completely! The dips are fitted with sensors that drive an algorithm in Max/MSP to give users aural feedback and a cultural experience.

Music Maker

Sasha Leitman, John Granzow

Music Maker (https://ccrma.stanford.edu/musicmaker) is a free online resource that provides files for 3D printing woodwind and brass mouthpieces and tutorials for using those mouthpieces to learn about acoustics and music. The mouthpieces are designed to fit into standard plumbing and automobile parts that can be easily purchased at home improvement and automotive stores. The goal is to make a musical tool that can be used as simply as a set of building blocks but that aims to bridge the gap between our increasingly digital world of fabrication and the real-world materials that make up our daily lives. An increasing number of schools, libraries and community groups are purchasing 3D printers but many are still struggling to create engaging and relevant curriculum that ties into academic subjects. Making new musical instruments is a fantastic way to learn about acoustics, physics and mathematics.

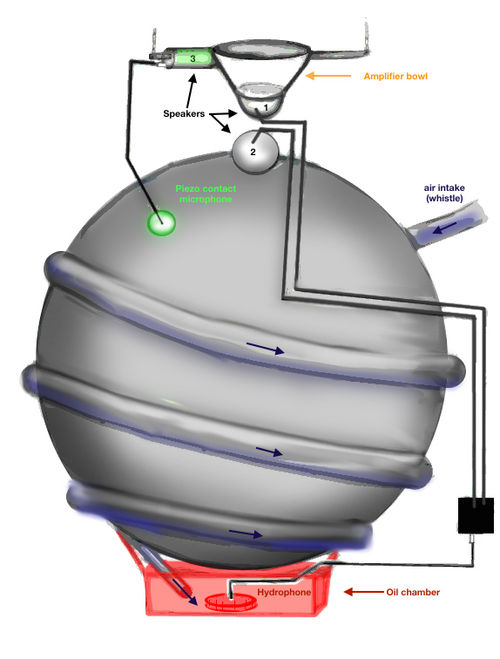

Cetacant

Alison Rush

The cetacant is a musical instrument inspired by whales and designed to accompany a performance of Vela 6911, a piece by Victor Gama. The cetacant emulates features of the cetacean vocal apparatus, using tubes and chambers full of air, water, and oil to produce and amplify sounds. The attached photo is of a prototype; the instrument's final form will resemble a suspended sphere, evoking the bubbles produced by a vocalizing whale, or our watery planet as seen from space.

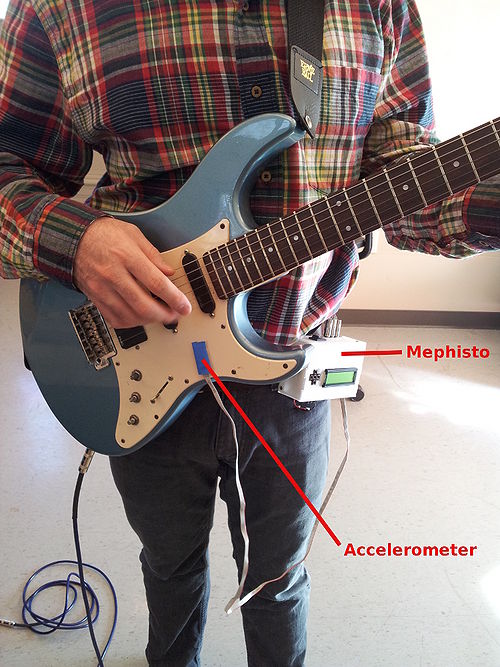

Mephisto

Romain Michon

Mephisto is a small, battery-powered, open-source, Arduino-based device. Up to five sensors can be connected to it using simple 1/8" stereo audio jacks. The output of each sensor is digitized and converted to OSC messages that can be streamed over a Wi-Fi network to control any Faust-generated app. The goal of Mephisto is to provide an easy way for musicians to interact with the different parameters of a Faust object or any other OSC-compatible software during a live performance. As a "DIY" open-source project, Mephisto uses only open-source hardware (Arduino, etc.) and was designed to be easily built by anyone.
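To make the sensor-to-OSC step concrete, here is a small Python sketch of how a digitized reading could be packed into an OSC 1.0 message. It is not Mephisto's firmware: the address `/mephisto/1`, the 10-bit reading range (0 to 1023), and the scaling to 0.0-1.0 are assumptions made for illustration. Only the byte layout (null-padded strings aligned to 4 bytes, a `,f` type tag, a big-endian 32-bit float) follows the OSC specification.

```python
import struct


def osc_pad(data: bytes) -> bytes:
    """Null-terminate and pad to a multiple of 4 bytes, as OSC strings require."""
    padded_len = (len(data) // 4 + 1) * 4
    return data.ljust(padded_len, b"\x00")


def osc_float_message(address: str, value: float) -> bytes:
    """Build an OSC message carrying a single 32-bit float argument."""
    return (
        osc_pad(address.encode("ascii"))  # address pattern, e.g. "/mephisto/1"
        + osc_pad(b",f")                  # type tag string: one float
        + struct.pack(">f", value)        # big-endian float32 payload
    )


def sensor_to_osc(address: str, raw: int, max_raw: int = 1023) -> bytes:
    """Scale a raw reading to 0.0-1.0 (assumed range) and wrap it in OSC."""
    clamped = max(0, min(raw, max_raw))
    return osc_float_message(address, clamped / max_raw)
```

The resulting bytes can be sent as a UDP datagram with the standard `socket` module to whatever host and port the receiving OSC application listens on.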

Hearing Polyphony - A Game and Experiment!

Madeline Huberth

I work in the Neuromusic lab at CCRMA, whose overall goal is to investigate phenomena related to understanding music. Specifically, I've been working this past year on how our brain processes polyphony (hearing multiple melodies at once), and will present a game I created that uses the stimuli from our experiment as a way of understanding the experiment. The experiment and our findings will also be on a poster that I can bring.

Our experiment shows that your brain can detect changes in polyphonic patterns automatically - how easy is it for you to do it consciously? Play and find out!

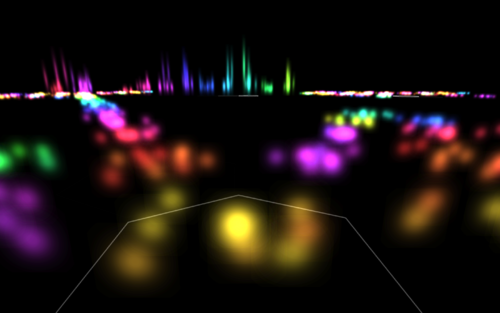

CollideFx

Chet Gnegy

CollideFx is a real-time audio effects processor that integrates the physics of real objects into the parameter space of the signal chain. Much like in a traditional signal chain, a user can choose a series of effects and control their various parameters in real time. In this work, we introduce a means of creating tree-like signal graphs that dynamically change their routing in response to position changes of the unit generators. The unit generators are easily controllable using the click-and-drag interface and respond using familiar physics, including conservation of linear and angular momentum and friction. With little difficulty, users can design interesting effects, or alternatively, can fling a unit generator into a cluster of several others to obtain more surprising results, letting the physics engine do the decision making.

The Processed Typewriter

Andrew Watts

Other than the human voice, musical instruments convey primarily abstraction through sound content. We interpret these sounds as music to varying degrees, but if one were to step away from the cultural associations, the noise would remain highly ambiguous. With a typewriter, the sounds inherent in the machine's use also contain linguistic meaning. Having this added layer to work with, a composer could pair the text and the sounds in a multitude of ways, even combining the ambiguity of semantic meaning with the ill-defined meaning of typewriter sounds. For this project I am specifically thinking towards a performance in the late spring during a residency with famed soprano Tony Arnold. Rather than a typical accompaniment for a solo soprano piece, such as a piano, it would be much more interesting and musically fertile to have her singing lyrics which are actively being typed in the background. Not only is the text being transformed into sound through the vocal line, but also through the hammering away of the typewriter. Furthermore, these sounds and the images of the text appearing on the page would be processed, enabling a wide range of articulations, imagery, references, and audio sculpting.

String

Joshua Coronado

String is a controller used to generate waveforms, curves, and envelopes using a camera, coloured string, and Max/MSP. Users draw curves representing objects such as a filter envelope using coloured string. The coloured curve is then captured by a camera and deciphered into a digital curve to be rendered out to audio by Max/MSP.
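The last stage, turning a captured curve into a digital one, can be illustrated with a short Python sketch. The project itself does this in Max/MSP; the code below is only an assumed stand-in that takes a handful of (x, y) points, as a camera tracking stage might deliver them, and resamples them by linear interpolation into an evenly spaced table usable as an envelope or single-cycle waveform. The function name and table size are hypothetical.

```python
def curve_to_table(points, table_size=8):
    """Resample (x, y) curve points into table_size evenly spaced samples.

    Hypothetical helper: uses linear interpolation between neighbouring
    points, after sorting them by x.
    """
    if table_size < 2:
        raise ValueError("table_size must be at least 2")
    pts = sorted(points)
    if len(pts) < 2:
        raise ValueError("need at least two points")
    x0, x1 = pts[0][0], pts[-1][0]
    table = []
    seg = 0  # index of the segment [pts[seg], pts[seg + 1]] containing x
    for i in range(table_size):
        x = x0 + (x1 - x0) * i / (table_size - 1)
        while seg < len(pts) - 2 and x > pts[seg + 1][0]:
            seg += 1
        (xa, ya), (xb, yb) = pts[seg], pts[seg + 1]
        t = 0.0 if xb == xa else (x - xa) / (xb - xa)
        table.append(ya + t * (yb - ya))
    return table
```

A triangular string shape such as `[(0, 0.0), (2, 1.0), (4, 0.0)]` resampled to five points yields a rise-and-fall envelope; a larger `table_size` gives a finer curve.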

Tibetan Singing Prayer Wheel

Yoo-yoo Yeh

Inspired by the traditional Tibetan prayer wheel and Tibetan singing bowl, we present the Tibetan Singing Prayer Wheel, a physical motion-sensing controller that allows you to play virtual Tibetan singing bowls and process your voice by performing several gestures: spinning the wheel at different speeds, raising and lowering your arm, and tapping a button on the outside. A separate RF transmitter allows you to transition between the three distinct sound design layers: (1) a Faust-STK physical model of a Tibetan singing bowl, (2) a delayed and windowed voice processing layer, and (3) a novel modal reverb model of an actual Tibetan singing bowl that takes the voice as input. The system is designed to be easy for anyone to pick up and improvise with - go ahead and try it!

Mariah

Mathew Horton

Mariah sonifies the "diva finger wave." Mariah is a love letter to women like Whitney Houston, Christina Aguilera, and its namesake, Mariah Carey. Simply draw on the screen with your finger and sing a note. Instant riffs and trills just like the great divas of the '80s, '90s, and '00s!

But the amazing, unexpected outcome of creating Mariah was a really interesting feedback instrument. Mariah takes in audio, pitch shifts it, and plays it. What you end up with at low sound levels is a "self-generating" feedback instrument that creates some really crazy effects.

Hill

Mathew Horton

Hill is a software application for musical and visual accompaniment of spoken word poetry. It is inspired by the minimalist video game, Mountain, as well as Lauren Zuniga's poem, "World's Tallest Hill". Hill builds a scene through which the text of a poem can move. The view of the scene can shift, and depending on the particular place at which the scene is viewed, the accompanying audio is transformed in different ways. Hill allows users to "compose" an accompaniment for a poem by adhering to a sort of "score."

Tower of Power

Graham Davis, Connor Kelley

Tower of Power (ToP for short) is an interactive tower of wood that generates sound and sweet LEDs. Inspired by the Hunchback of Notre Dame and 1970s funk, ToP is the auditory column for our generation.

Tact is a project designed to make sound design and beat construction more intuitive. The instrument is a glove mounted with contact microphones that allows the wearer to record, transform and perform natural sounds at the touch of a finger. A wireless iPad interface provides the wearer with sound-shaping controls, playback effects and glove feedback. Amplify your interaction with the world via tactile sampling and contact playback with Tact.

Sonic Anxiety

Victoria Grace, Joel Chapman

Sonic Anxiety is an ironic twist on performance anxiety, where the performance is the sound of my anxiety while locked in a cage. Sensors track my breathing to control the harmony and timbre while my pulse sets the pace and drum rhythms of the piece.

lovelyStepSequencer

Micah Arvey

A three-dimensional step sequencer.

Velokeys

Austin Whittier

Velokeys is a velocity-sensitive QWERTY keyboard for desktop jamming. Millions of people spend every day training their brains with a QWERTY key layout – at work, at school, and at home. This project is meant to meld the expressivity

Busk Box

Sasha Leitman

The Busk Box is a street performance system that combines the traditions of wandering street performers and musicians with modern technologies. Inside a 1911 wooden trunk, two 6" speakers, one 10" subwoofer, two class-T amplifiers and a portable mixer are all powered by lithium-ion batteries. In addition, the box is supported by folding wheels and legs, which enable it to be set up and torn down in less than 3 minutes. This platform was designed to bring experimental and electronic music to the San Francisco Fisherman's Wharf district.