

Pictone - Vision Based Music System

Music 256A Fall 2009 Final Project. Shiwei Song (shiweis)

Introduction

Modern computers have enabled novel ways for people to interact with and generate sound. My project is to build an instrument whose inputs are pictures drawn with pen and paper. The system takes a video feed of simple shapes and lines drawn on paper and converts them into notes played by different instruments. The audience sees the performer's drawings and a visualization of the notes, and hears the corresponding sonic result.

My initial inspiration came from the Birds on the Wires video, whose creator converted a picture of birds on wires into musical notes and produced a short piece of music that is both simple and beautiful. I felt that creating sound through drawing has the following advantages:

- Most people can write or draw simple shapes. This system invites anyone to try and make some music/noise (and have fun!).

- The pictorial music language is novel to the audience, so they will be constantly surprised or left wondering what the next output will be.

- The characters/pictures in the music language can themselves carry meaning (perhaps when combined), so both visual and sonic messages are conveyed.

- "Live coding" by drawing is fairly unconventional and this itself may be an interesting experiment to perform or observe.

- Drawing offers a lot of freedom that cannot be mapped onto traditional hardware devices. The performer can further influence the system by changing how it captures images, for example by rotating the paper. Together, these give the performer a lot of room to interact with the instrument.

I hope that my project will serve as the basis for a system that will eventually demonstrate the advantages listed above.

System

Although the majority of the work for this project was on creating the software, the project can be viewed as having two disjoint parts: the picture language and the software system.

Language

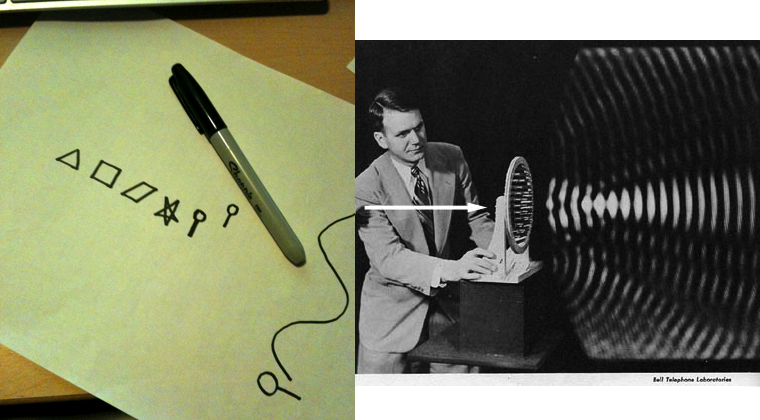

The first component of the project is deciding what the user can draw and what sound each drawing produces. Because of time constraints, the language supported so far is fairly simple. It consists of the symbols below; although the system can interpret them flexibly, their current mappings are:

- Triangle: plucked string note.

- Square: saxophone note.

- Slant: clarinet note.

- Star: short pause/silence.

- Pin/Lollipop: blowhole note.

- Arbitrary Curve: this is a modifier symbol that does nothing by itself. When combined with other symbols, it modifies the pitch of the previous note depending on its curvature. It's as if the previous note is riding a roller coaster.

The orientation in which a shape is drawn does not matter; its vertical (y) position controls the pitch of the note.

Software System

The software is designed to be extensible, with swappable parts. It consists of the vision system, which captures and recognizes drawings; the synthesis system, which parses the detected shapes and generates sound; and the main routine, which ties everything together and displays the visualization. When idle, the software simply captures camera input and displays the video feed. When the user presses the capture key, the software goes through the following steps:

- The camera stores a still picture and passes it to the vision system for recognition.

- The vision system passes the results back to the main routine.

- The picture with detection results is displayed. The results are also passed to the synthesis system for parsing and storage.

- The synthesis system converts the vision results into internal music commands and starts playing them in a loop.

- Meanwhile, the main routine syncs with the synthesis system and displays visualizations based on what is currently playing.

The user can repeat the above process. The hope is that one can modify or upgrade one of these systems without breaking the "loop."

The software uses the following libraries/technologies:

- OpenGL for display and rendering visualizations.

- OpenCV for vision/symbol detection.

- STK for instrument sound synthesis.

- SOIL for loading PNG textures.

Each component of the software is described below.

Vision

The vision system is powered by OpenCV and converts a still image of the user's drawing into detected shapes. It takes an image as input and returns a vector of results, each consisting of: shape type, bounding rectangle, and (for curves) points along the contour. The system consists of the 'Detector' class and its subclasses. Each subclass can implement its own method for detection as long as it still returns the results in the same format.

I tried several detection methods and ended up using Hu moments of contours. When the detector is initialized, template images of the shapes to detect are loaded and their Hu moments are calculated. To detect shapes in a new image, the input image is first converted to a binary edge image using the Canny edge detector. Contours (shapes) are then extracted from this edge image and stored in a hierarchical tree structure. The bounding box of each contour is stored in the result vector, and each contour's shape type is identified by picking the template whose Hu moments are closest to the contour's. If a shape is labeled as a curve, its path is also extracted and stored in the result vector. Finally, the vector is returned.

Experience

Milestones & Extensions

- 11/16/2009: Basic language ideas. Ability to recognize some characters in the vision component.

- 11/30/2009: Can recognize most of the characters and convert to internal representation. Can produce sound.

- 12/10/2009: Some visuals. Make the other components more robust. Ready for presentation.

Results

Picture Music Language

This project will construct a simple form of the picture music language. The primary concern is to have a good mapping from pictures to musical representations, with symbols that the software can easily recognize (perhaps sacrificing picture meaning/aesthetics for ease of recognition).

The language will control two aspects of the music:

- The controls/flow of the sound produced, such as loops, tracks, and speed.

- Actual notes/instruments and pauses (lack of notes).

Some design questions I'm still debating:

- Whether to use notes from a single instrument or selected notes from several instruments.

- Whether to allow polyphony, and if so, through what means: either having the user input different tracks (probably easier) or using some spatial mapping in the language (such as position on paper).

- What should color and shape map to? Should vertical position on paper be used?

Software System

The software system will consist of several parts:

- The vision system, which uses OpenCV to digitize the characters.

- The internal controller, which takes the digitized representation, produces the synthesized sound (perhaps through STK), and displays the visualization.

- The visual system that shows the camera feed and also some sort of visualization (perhaps simply the notes to be played and the organization of different tracks).

There are currently no plans to have any networked component to this.