Center for Computer Research in Music and Acoustics

COVID Policies

See CCRMA's COVID policies for 2023.

Upcoming Events

Pulse Audition: Leveraging DNN-Based Speech Enhancement for Improved Communication in Hearing Aids

This talk explores the evolution of Deep Neural Network (DNN) based approaches in speech enhancement and source separation. Beginning with a historical overview, it traces the progression from traditional methods to the current state-of-the-art techniques. Emphasizing the persistent challenge of speech intelligibility in noisy environments, the discussion transitions to the contemporary issue of inadequate performance in conventional hearing aids. The approach adopted by Pulse Audition of integrating DNN-based technologies into hearing assistance systems is then highlighted, presenting a promising avenue for significantly enhancing communication for individuals with hearing impairments.

Open House Concert

FREE and Open to the Public | In Person + Livestream

Caroline Davis: Liberative Joy

FREE and Open to the Public | In Person + Livestream

John Chowning & Friends

FREE and Open to the Public | In Person + Livestream

Stanford Graduate Composers Present: Manuela Freua & The TANK

FREE and Open to the Public | In Person

Recent Events

CCRMA's 2024 MA/MST Cohort: In Coherence

X Meoarks the Spot

FREE and Open to the Public | In Person + Livestream

In Coherence World Premiere & Watch Party

"In Coherence" is an audiovisual collage representing the incredibly varied musical interests and talents of CCRMA's 2024 MA/MST cohort. Each artist completed a minute-long audiovisual piece, given only the last six seconds of a previous artist's work for inspiration. While each artist's gesture may appear incoherent at first glance, this sequential juxtaposition places them "in coherent" conversation with each other, a celebration of creative and interdisciplinary audiovisual expression.

Shannon Hayden: Electric Strings

FREE and Open to the Public | In Person + Livestream

Past Live Streamed Events

Recent News

Hearables Will Monitor Your Brain and Body to Augment Your Life, by Poppy Crum

Quote from the article:

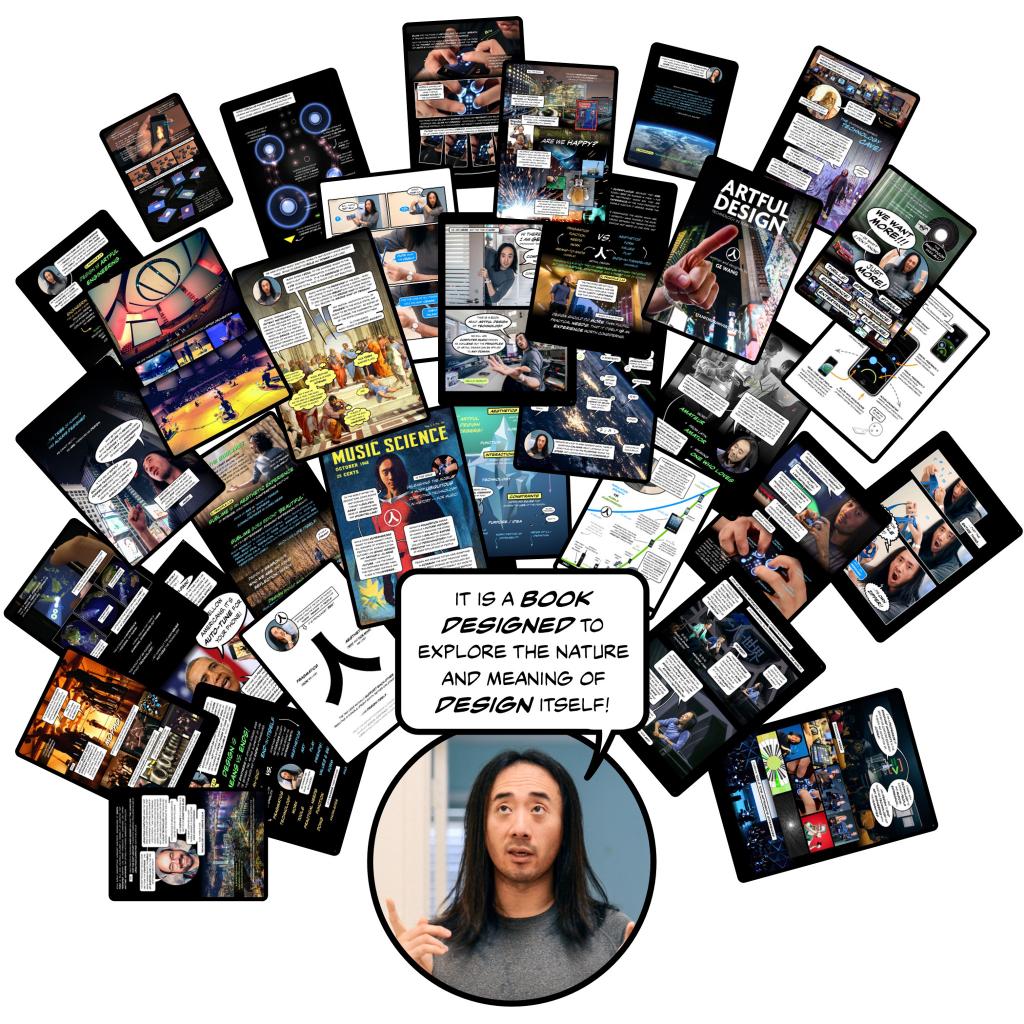

ARTFUL DESIGN — A new (comic) book by Ge Wang!

What is the nature of design, and the meaning it holds in human life? What does it mean to design well -- to design ethically? How can the shaping of technology reflect our values as human beings? These are the questions addressed in Ge Wang's new book, ARTFUL DESIGN (check it out: https://artful.design/).

Technology that Knows What You're Feeling: TED2018 Talk Featuring Dr. Poppy Crum

What happens when technology knows more about us than we do? Poppy Crum studies how we express emotions -- and she suggests the end of the poker face is near, as new tech makes it easy to see the signals that give away how we're feeling. In a talk and demo, she shows how "empathetic technology" can read physical signals like body temperature and the chemical composition of our breath to inform on our emotional state. For better or for worse. "If we recognize the power of becoming technological empaths, we get this opportunity where technology can help us bridge the emotional and cognitive divide," Crum says.

CCRMA's SLOrk Featured in Wired Magazine

The Aural Magic of Stanford's Laptop Orchestra

CCRMA: Award-winning Faculty!

Way to go, Poppy!

CTA Honors Five for Outstanding Contributions to Tech Industry Initiatives and Standards